Ultra-fast, high-accuracy language detection for real-time voice AI pipelines

Identify the spoken language in 2 seconds and route audio to the right ASR, translation, or multilingual voice AI pipeline. Bat makes 2× fewer errors than SpeechBrain LID while using 62× less memory.

The only production-ready on-device spoken language detection engine

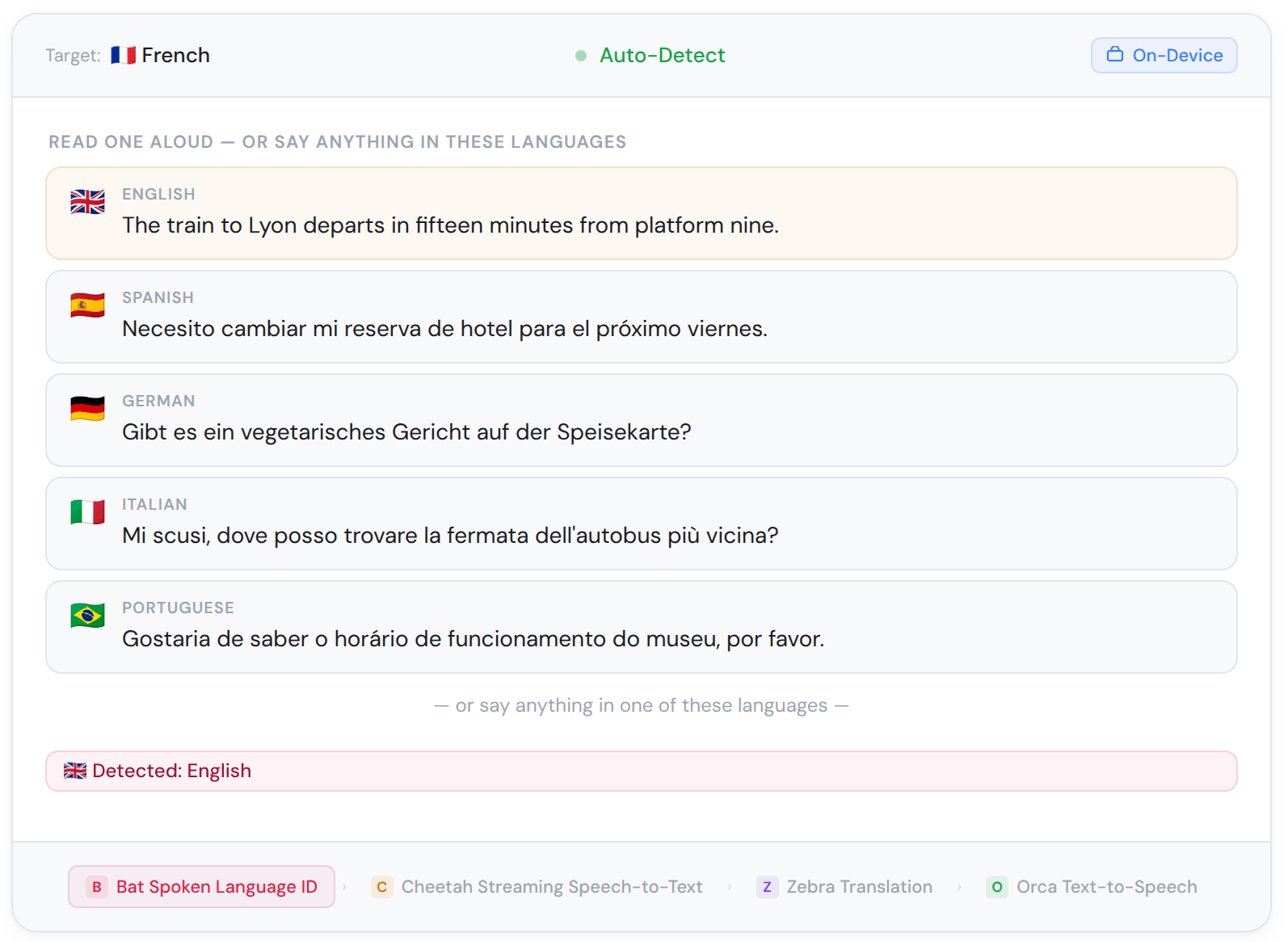

Bat is an enterprise-ready on-device spoken language identification engine built for real-time multilingual voice AI pipelines. It detects the spoken language in live audio streams with high accuracy, runs entirely offline across platforms, and is private by architecture.

With a 4 MB model and 5 MB of peak memory, Bat identifies the spoken language in real-time audio and runs across embedded, mobile, web, desktop, and server platforms. Bat Spoken Language Identification is optimized for streaming audio, where it detects the language within 2 seconds, and it also supports asynchronous processing.

Bat Spoken Language Identification's modularity enables several applications, including live translation, multilingual voice AI agents and assistants, and speech analysis and archiving, such as categorizing voice notes, conference recordings, and digital media libraries by language.

Language detection in a few lines of code

Bat Spoken Language Identification returns the detected language and a confidence score for each inference. Drop it at the start of your audio pipeline to route audio streams to the right STT model, translation engine, or language-specific handler. Use Bat Spoken Language Identification with its native SDKs for Python, C, iOS, Android, and Web.
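The routing step described above can be sketched as follows. This is a minimal illustration and not the Bat SDK: the `Detection` class, the `route` function, the two-letter language codes, the handler names, and the 0.6 confidence threshold are all assumptions made for the example. It only shows how a detected language and a confidence score might select a downstream handler, with "unknown" and low-confidence results sent to a fallback.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass(frozen=True)
class Detection:
    """Hypothetical container for one inference result (not the SDK's own type)."""
    language: str      # assumed code such as "fr", or "unknown" for unsupported languages
    confidence: float  # assumed to lie in [0.0, 1.0]


def route(detection: Detection,
          handlers: Dict[str, Callable[[], str]],
          fallback: Callable[[], str],
          min_confidence: float = 0.6) -> str:
    """Call the handler registered for the detected language, or the fallback."""
    # Treat "unknown" and low-confidence results the same way: do not guess.
    if detection.language == "unknown" or detection.confidence < min_confidence:
        return fallback()
    # A supported language without a registered handler also goes to the fallback.
    return handlers.get(detection.language, fallback)()


# Hypothetical handlers; in a real pipeline these would start an STT or translation engine.
handlers = {
    "en": lambda: "english-stt",
    "fr": lambda: "french-stt",
    "es": lambda: "spanish-stt",
}
fallback = lambda: "ask-user"

print(route(Detection("fr", 0.91), handlers, fallback))
print(route(Detection("unknown", 0.88), handlers, fallback))
print(route(Detection("es", 0.31), handlers, fallback))
```

Keeping the fallback explicit means an unsupported or uncertain result never reaches a language-specific handler by accident.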

Spoken Language Identification with proven accuracy and efficiency

Bat Spoken Language Identification achieves 93% accuracy on VoxLingua107 under open-set evaluation: 2× fewer errors than SpeechBrain LID (7% vs. 15% miss rate) while using 62× less memory and 9× less CPU.

Why enterprises choose Bat Spoken Language Identification

Bat is an enterprise-ready on-device spoken language identification engine built for real-time audio streams, high accuracy, and minimal resource footprint. It identifies spoken languages live as audio is captured, runs entirely offline across platforms, and is private by architecture.

Recipes

Speech-to-Speech Translation

Detect the spoken language, transcribe, translate, and synthesize speech in the target language. Full translation pipeline with no cloud dependency.

Ship it.

On device.

Fast, accurate, and lightweight real-time language identification

Common questions about spoken language identification

Spoken language identification is the task of automatically detecting which language is being spoken in an audio stream or file. It is a foundational component in multilingual voice AI systems — used to route audio to the correct language-specific speech recognizer, adapt voice assistants to a user's language in real time, enable automatic transcription of multilingual audio, and support translation pipelines in public safety, healthcare, and customer service applications. Bat Spoken Language Identification processes audio frames on-device and returns a language code and confidence score in real time, making it a practical first stage for any multilingual voice pipeline.

Yes. Bat Spoken Language Identification is the only production-ready on-device engine that does. Amazon Transcribe, Azure, and Google Cloud Speech-to-Text all offer streaming language identification, but all three are cloud-only, so audio must leave the device on every inference. Others, like Deepgram and Rev AI, support only asynchronous batch processing. SpeechBrain can process live audio, but it uses 62× more RAM and 9× more CPU than Bat and offers no production SDK or enterprise support with a committed SLA. Bat Spoken Language Identification identifies spoken languages in real-time audio streams entirely on-device: there is no cloud round-trip, no audio is transmitted to any server, and intentional open-set handling returns "unknown" for unsupported languages rather than forcing a match.

Amazon Transcribe supports streaming language identification and requires a minimum of one second of speech before identifying. Like Bat Spoken Language Identification, it can return results for live audio — but it is cloud-only, meaning every audio frame is transmitted to Amazon's servers for processing. Amazon Transcribe requires developers to provide a candidate language list upfront, and does not allow them to combine LID with custom language models or redaction. It selects the closest candidate match when the spoken language is not in your list, rather than returning "unknown." Bat Spoken Language Identification identifies spoken languages on-device with no audio transmitted to the cloud.

Azure Speech offers both at-start and continuous language identification for live audio streams. At-start language detection takes up to 5 seconds, according to Microsoft's own documentation. Continuous language identification is effectively cloud-only, because it is still in preview for on-premises containers. Azure can return a NoMatch or Unknown result based on confidence thresholds and audio conditions. Bat Spoken Language Identification processes audio on-device with no cloud dependency, works across all supported SDKs, and handles unsupported languages through an intentional open-set protocol that was benchmarked and evaluated, not an edge-case fallback.

Google Cloud Speech-to-Text supports language identification in streaming audio, but detection is not fully automatic: it requires a primary language code to be set and selects among up to four candidate languages in total. It is only available in the global region and US/EU multi-regions, only works with specific models (long, short, and telephony), and is cloud-only with no on-device option. Like other cloud alternatives, Google selects the best match from the provided candidate list and is not designed with open-set handling for unsupported languages. Bat Spoken Language Identification runs entirely on-device, supports any audio source without geographic or model restrictions, and handles unsupported languages deliberately rather than returning a best-guess candidate match.

In Picovoice's Open-source Spoken Language Identification Benchmark on VoxLingua107 under open-set evaluation, Bat Spoken Language Identification achieves 93% accuracy versus SpeechBrain LID's 85%, which is more than 2× fewer identification errors (7% vs. 15% miss rate). On efficiency, Bat Spoken Language Identification uses 5 MB of peak memory versus SpeechBrain LID's 333 MB (62× less), a 4 MB model versus SpeechBrain LID's 85 MB (21× smaller), and requires 0.004 core-hours per hour of audio versus SpeechBrain LID's 0.039 (9× less compute). SpeechBrain LID can process live audio, but its computational requirements rule out some deployment targets. In resource-constrained environments, SpeechBrain LID leaves no headroom for the rest of the voice pipeline.

SpeechBrain LID has no production SDK, no native mobile or embedded deployment support, and no enterprise backing. Bat Spoken Language Identification is also evaluated on genuinely unseen data, whereas SpeechBrain LID is partially tested on its own training dataset, VoxLingua107, the most popular language identification dataset. Thus, the real-world accuracy figures of SpeechBrain LID may be overstated.

Cloud speech-to-text APIs, like Amazon Transcribe, Azure Speech, and Google Cloud Speech-to-Text, offer language detection as a feature within their transcription pipeline. Developers must pass a parameter when submitting audio, and the STT engine then attempts to detect the language alongside transcription. This approach has three core limitations: it is optimized for transcription rather than for language identification specifically, it requires audio to be sent to a cloud server, and it relies on candidate language lists rather than open-set detection. Bat Spoken Language Identification is a dedicated standalone engine that runs on live audio streams before transcription begins, processes audio entirely on-device with no cloud dependency, and is built specifically for language identification with intentional open-set handling for unsupported languages.

Closed-set evaluation tests a language identification engine only on the languages it was trained to recognize. Open-set evaluation includes audio from languages outside the supported set and requires the engine to return "unknown" for those inputs rather than misclassifying them.

The Picovoice Open-source Spoken Language Identification Benchmark uses open-set evaluation: correctly identifying unsupported audio as unknown counts as correct, and misclassifying it as a supported language counts as an error. This reflects production conditions, where users will inevitably speak languages outside the model's training set. Cloud alternatives like Amazon Transcribe and Google Cloud Speech-to-Text select the closest candidate match rather than returning "unknown", which means they can silently route audio to the wrong handler when the spoken language is not in the candidate list.
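The open-set scoring rule can be sketched as a short function. This is an illustration of the rule as stated above, not the benchmark's actual code; the function name, the `(truth, predicted)` pair format, and the sample data are assumptions.

```python
from typing import Iterable, Set, Tuple

UNKNOWN = "unknown"


def open_set_accuracy(pairs: Iterable[Tuple[str, str]], supported: Set[str]) -> float:
    """Score (true_language, predicted_language) pairs under open-set rules."""
    correct = 0
    total = 0
    for truth, predicted in pairs:
        # For audio in an unsupported language, the only correct answer is "unknown".
        expected = truth if truth in supported else UNKNOWN
        correct += int(predicted == expected)
        total += 1
    if total == 0:
        raise ValueError("no samples to score")
    return correct / total


# Made-up sample: "sw" stands for a language outside the supported set.
supported = {"en", "fr"}
pairs = [
    ("en", "en"),       # supported and correct
    ("fr", "fr"),       # supported and correct
    ("sw", "unknown"),  # unsupported, correctly rejected
    ("sw", "en"),       # unsupported, forced into a supported language: an error
]
print(open_set_accuracy(pairs, supported))
```

Under closed-set scoring the last two samples would simply be excluded, which is why a closed-set figure says nothing about how an engine behaves on unsupported languages.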

The CPU core-hour ratio measures how many CPU core-hours are required to process one hour of audio. A ratio above 1.0 means the engine cannot keep up with real-time audio on a single core. A ratio below 1.0 means the engine processes faster than real time using less than one full CPU core. In practical terms, the core-hour ratio determines the difference between a viable pipeline component and a bottleneck.

Measured on an AMD Ryzen 9 5900X (12 cores @ 3.70 GHz), Bat Spoken Language Identification's core-hour ratio is 0.004 versus SpeechBrain LID's 0.039. Bat uses 0.44% of a single CPU core in real time, 9× less than SpeechBrain LID's 3.9%, leaving the rest of the processing capacity free for downstream tasks like transcription, NLU, and application logic. This 9× difference becomes critical for applications running in resource-constrained environments, such as mobile devices, embedded systems, and Raspberry Pi.
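As a worked example, the core-hour ratio is CPU time divided by audio duration. In the sketch below the function names are hypothetical, and the CPU-second inputs are not measurements: they are back-calculated from the 0.44% and 3.9% figures quoted above for one hour (3600 seconds) of audio.

```python
def core_hour_ratio(cpu_seconds: float, audio_seconds: float) -> float:
    """CPU core-time spent per unit of audio processed (core-hours per audio hour)."""
    if audio_seconds <= 0:
        raise ValueError("audio_seconds must be positive")
    return cpu_seconds / audio_seconds


def keeps_up_on_one_core(ratio: float) -> bool:
    """A ratio below 1.0 means faster than real time on a single core."""
    return ratio < 1.0


# Illustrative inputs derived from the quoted percentages, for one hour of audio.
bat = core_hour_ratio(cpu_seconds=15.84, audio_seconds=3600.0)
speechbrain = core_hour_ratio(cpu_seconds=140.4, audio_seconds=3600.0)

print(f"Bat: {bat:.4f} ({bat:.2%} of one core)")
print(f"SpeechBrain LID: {speechbrain:.4f} ({speechbrain:.2%} of one core)")
print(f"Relative compute: {speechbrain / bat:.1f}x")
```

Both engines are well below 1.0, so the practical difference is how much of the core remains for the rest of the pipeline.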

Yes. Bat Spoken Language Identification requires only 5 MB of peak memory during processing, making it suitable for any deployment environment and applications with tight memory budgets, including embedded systems, low-end mobile devices, Raspberry Pi, and web browsers.

For context, SpeechBrain LID requires 333 MB of peak memory, 62× more than Bat. That amount exceeds the total available memory headroom on many embedded and low-power devices before any other application logic runs. Language identification is typically one component in a larger voice pipeline alongside ASR, NLU, and application logic. Using 5 MB of memory at peak, Bat Spoken Language Identification leaves the rest of your memory budget intact for the pipeline components that need it. Cloud-based language identification APIs have no equivalent embedded deployment option, so audio must always be transmitted to remote servers for processing.

Note: Memory availability is not the same as total device RAM. Background services (SSH, networking, logging) consume memory before any application starts. As a practical guideline, a LID engine used in a real-time voice AI application should be treated as part of the total app memory budget, which on mobile typically should not exceed 150–200 MB on low-end devices to avoid out-of-memory (OOM) termination risk. Both Android and iOS use low-memory killers that terminate processes when free memory falls below a threshold, and apps consuming more memory are killed first.
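The budgeting guideline can be made concrete with a small check. Only the 5 MB and 333 MB language identification figures and the 150 MB lower bound come from the text above. The ASR and application numbers, and the function names, are hypothetical placeholders chosen for illustration.

```python
from typing import Dict


def headroom_mb(components_mb: Dict[str, float], budget_mb: float) -> float:
    """Memory left in the app budget after summing every component's peak usage."""
    return budget_mb - sum(components_mb.values())


def fits_budget(components_mb: Dict[str, float], budget_mb: float) -> bool:
    """True when the combined peak usage stays within the budget."""
    return headroom_mb(components_mb, budget_mb) >= 0


BUDGET_MB = 150.0  # lower bound of the low-end mobile guideline

# "asr" and "app" are hypothetical peak figures, not measurements.
with_bat = {"lid": 5.0, "asr": 90.0, "app": 40.0}
with_speechbrain = {"lid": 333.0, "asr": 90.0, "app": 40.0}

print(fits_budget(with_bat, BUDGET_MB), headroom_mb(with_bat, BUDGET_MB))
print(fits_budget(with_speechbrain, BUDGET_MB), headroom_mb(with_speechbrain, BUDGET_MB))
```

Summing peak figures is deliberately conservative, since components rarely peak at the same moment.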

Bat Spoken Language Identification returns a language code and confidence score per audio frame. In a typical multilingual voice pipeline, Bat runs first, before transcription, and its output is used to select the appropriate language-specific ASR model, translation engine, or voice handler. For example, a French detection routes audio to Cheetah Streaming Speech-to-Text's French model, and a Spanish detection routes it to the Spanish model. Combined with Zebra Translate, Bat Spoken Language Identification enables translation applications. Combined with picoLLM, Rhino Speech-to-Intent, or both, Bat Spoken Language Identification allows developers to create multilingual voice AI agents and assistants.
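Because results arrive per frame, a pipeline may prefer to commit to a language only once successive frames agree. The sketch below shows one possible policy, not documented Bat behavior: the function name, the `(language, confidence)` tuple format, the 0.6 threshold, and the three-frame run length are all assumptions.

```python
from typing import Iterable, Optional, Tuple


def first_stable_language(frames: Iterable[Tuple[str, float]],
                          min_confidence: float = 0.6,
                          required_run: int = 3) -> Optional[str]:
    """Return the first language reported in `required_run` consecutive confident frames."""
    current: Optional[str] = None
    run = 0
    for language, confidence in frames:
        if confidence < min_confidence:
            # A low-confidence frame breaks the streak.
            current, run = None, 0
            continue
        if language == current:
            run += 1
        else:
            current, run = language, 1
        if run >= required_run:
            return current
    return None  # the stream ended before any language stabilized


# Made-up per-frame results.
frames = [("en", 0.40), ("fr", 0.80), ("fr", 0.90), ("es", 0.70),
          ("fr", 0.80), ("fr", 0.85), ("fr", 0.90)]
print(first_stable_language(frames))
```

A longer run trades latency for stability, and a stable "unknown" result can be passed to the same fallback path as any other unsupported input.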

Yes. Bat Spoken Language Identification processes all audio on-device with no network connection required. It operates in remote deployments and on bandwidth-constrained hardware, including factory floors, aircraft, underground facilities, and law enforcement field deployments where cloud APIs cannot reach or where data handling requirements prohibit audio transmission to third-party servers.

Bat Spoken Language Identification currently supports English, French, Spanish, Italian, German, Portuguese, Japanese, and Korean.

For languages outside the supported set, Bat Spoken Language Identification returns "unknown" rather than forcing a match, ensuring your pipeline receives a reliable signal rather than a confident wrong answer.

Yes. Audio is processed entirely on-device and never transmitted to any server. Bat Spoken Language Identification is compliant with GDPR, HIPAA, CCPA, CJIS, and other data residency regulations by architecture, not policy. No data processing agreements are required, and there is no risk of audio data breach through Picovoice's systems. Picovoice cannot access end-user audio.

Bat Spoken Language Identification supports embedded, mobile, web, desktop, and server deployments across Linux, macOS, Windows, Android, iOS, and Raspberry Pi. Native SDKs are available for Python, C, iOS, Android, and Web.

Bat Spoken Language Identification can be deployed on-device, on-premise, or in a private or public cloud. The deployment decision and user data are yours, not Picovoice's.

Picovoice docs, blog, Medium posts, and GitHub are great resources to learn about voice AI, Picovoice technology, and how to start building language detection products. Enterprise customers get dedicated support specific to their applications from Picovoice Product & Engineering teams. Reach out to your Picovoice contact or talk to sales to discuss support options.