Low-latency text-to-speech for real-time LLM agents

On-device text-to-speech that starts synthesizing while the LLM is still generating tokens. No cloud required: a 7 MB model that peaks at 29 MB of RAM and reaches 130 ms latency, 2.6× faster than ElevenLabs Streaming.

The TTS engine that voice AI agents don't have to wait for

Orca Streaming Text-to-Speech is an enterprise-ready on-device streaming text-to-speech engine built for LLM-powered voice AI applications. It synthesizes speech concurrently as LLMs generate tokens, runs entirely offline across platforms, and is private by architecture.

Most text-to-speech engines work in a single mode: wait for the full text, then synthesize. That made sense when text inputs were static, but it breaks down for voice AI agents backed by LLMs that produce output token by token. Orca starts speaking while the LLM is still generating, so in many interactions it finishes before competing TTS engines even begin. As a result, it achieves 130 ms first-token-to-speech latency, 2.6× faster than the closest competitor, ElevenLabs Streaming.

Streaming speech synthesis in a few lines of code

Orca's streaming mode accepts text chunks one at a time and returns audio as soon as it has enough context to synthesize. Native Orca Streaming TTS SDKs for Python, NodeJS, Android, iOS, .NET, C, and Web let you feed LLM tokens directly, with no additional buffering logic.
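The SDK calls themselves aren't shown on this page, so the following is a minimal, self-contained Python sketch of the feed-tokens-as-they-arrive pattern. `MockTTSStream`, its `synthesize`/`flush` methods, and the rule that a word counts as "enough context" once whitespace follows it are all hypothetical stand-ins, not Orca's actual API or algorithm; the "audio" is just a list of zero-valued samples. Check the Orca SDK documentation for the real calls.

```python
from typing import Iterable, Iterator, List, Optional

SAMPLES_PER_CHAR = 10  # hypothetical: fake audio length per spoken character


class MockTTSStream:
    """Hypothetical stand-in for a streaming TTS session (not the Orca API).

    Text is buffered, and only words known to be complete (i.e. followed by
    whitespace) are "synthesized", mimicking "return audio once there is
    enough context".
    """

    def __init__(self) -> None:
        self._buffer = ""

    def synthesize(self, chunk: str) -> Optional[List[int]]:
        self._buffer += chunk
        cut = max(self._buffer.rfind(" "), self._buffer.rfind("\n"))
        if cut <= 0:
            return None  # no complete word yet: keep buffering
        ready, self._buffer = self._buffer[:cut], self._buffer[cut:]
        return self._render(ready)

    def flush(self) -> Optional[List[int]]:
        ready, self._buffer = self._buffer, ""
        return self._render(ready) if ready.strip() else None

    @staticmethod
    def _render(text: str) -> List[int]:
        # Fake PCM: zero-valued samples proportional to the non-space characters.
        return [0] * (SAMPLES_PER_CHAR * len("".join(text.split())))


def speak(tokens: Iterable[str]) -> Iterator[List[int]]:
    """Feed LLM tokens straight into the stream and yield audio as it appears."""
    stream = MockTTSStream()
    for token in tokens:
        pcm = stream.synthesize(token)
        if pcm is not None:
            yield pcm  # hand this chunk to the audio player right away
    tail = stream.flush()  # speak whatever is still buffered
    if tail is not None:
        yield tail


chunks = list(speak(["Hel", "lo", " wor", "ld", "!"]))
```

Feeding `"Hel"`, `"lo"`, `" wor"`, `"ld"`, `"!"` yields two chunks: 50 samples for "Hello" as soon as `" wor"` arrives, and 60 samples for "world!" on `flush()`. A player can start on the first chunk while the LLM is still generating.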

Lowest latency — proven by a reproducible benchmark

Orca Streaming Text-to-Speech is benchmarked against 15 popular cloud and on-device TTS alternatives using ~200 simulated voice AI agent interactions. Results show Orca is the only TTS engine that responds in less than 200 ms and achieves natural-sounding voices at under 30 MB peak memory.
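The benchmark's harness isn't reproduced here. As a hedged sketch of what first-token-to-speech (FTTS) means operationally, the timer below starts when the first LLM token arrives and stops when the TTS first returns audio. `fake_llm`, `make_feed`, and the 10 ms per-token delay are hypothetical stand-ins for a real LLM stream and a real streaming TTS.

```python
import time
from typing import Callable, Iterable, Iterator, List, Optional


def measure_ftts(tokens: Iterable[str],
                 feed: Callable[[str], Optional[bytes]]) -> Optional[float]:
    """Seconds from the first LLM token to the first audio chunk, or None."""
    first_token_at: Optional[float] = None
    for tok in tokens:
        if first_token_at is None:
            first_token_at = time.perf_counter()  # clock starts at the first token
        if feed(tok) is not None:
            return time.perf_counter() - first_token_at  # first audio is ready
    return None  # the stream ended without producing audio


def fake_llm(words: Iterable[str], delay_s: float = 0.01) -> Iterator[str]:
    """Hypothetical LLM stand-in: yields one token every `delay_s` seconds."""
    for word in words:
        time.sleep(delay_s)
        yield word


def make_feed(context_tokens: int) -> Callable[[str], Optional[bytes]]:
    """Hypothetical streaming TTS stand-in: returns audio once it has seen
    `context_tokens` tokens, and None before that."""
    seen: List[str] = []

    def feed(tok: str) -> Optional[bytes]:
        seen.append(tok)
        return b"\x00\x00" if len(seen) >= context_tokens else None

    return feed


ftts = measure_ftts(fake_llm(["Sure", ",", " one", " moment"]), make_feed(3))
```

Because the clock starts at the first token rather than at the request, this definition isolates the TTS engine's contribution from the LLM's own time-to-first-token.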

Why enterprises choose Orca Streaming Text-to-Speech

Orca is an enterprise-ready on-device streaming text-to-speech engine built for low-latency, natural-sounding human-like voice AI interactions with a minimal resource footprint.

Recipes

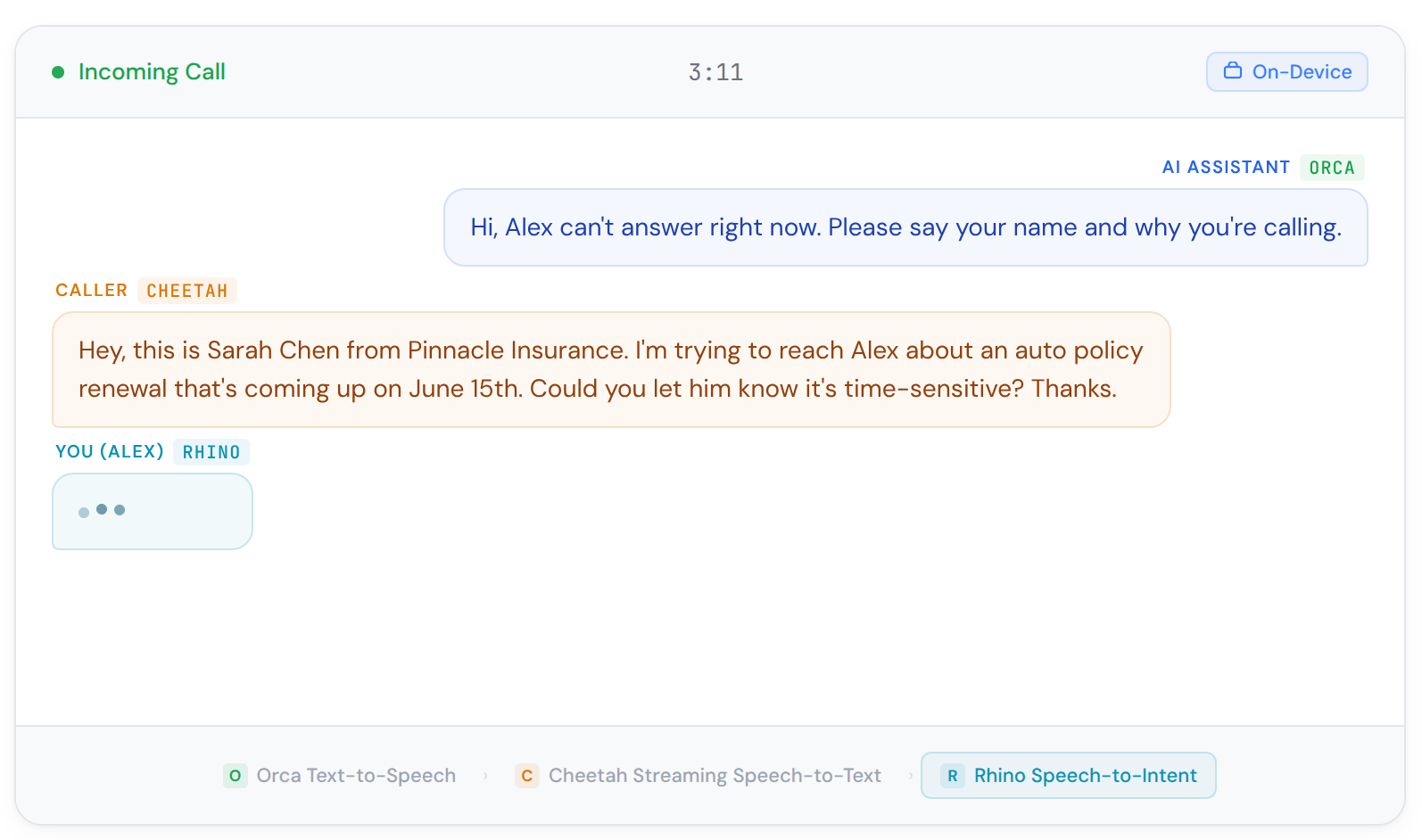

Call Screen

Screen incoming calls with voice activity detection, wake word activation, and text-to-speech responses. Block robocalls and spam without cloud processing.

Low-latency and lightweight streaming text-to-speech for voice AI agents

Common questions about streaming text-to-speech

Streaming text-to-speech synthesizes audio from partial, incomplete text input, token by token, rather than waiting for the full text before generating speech. This matters for LLM-powered voice applications because LLMs produce output incrementally. A standard TTS engine waits until the LLM finishes before synthesizing; a streaming TTS engine like Orca begins synthesizing as the first tokens arrive and generates audio in parallel with the LLM. The result is dramatically lower latency: Orca achieves 130 ms first-token-to-speech, versus 1,400 ms or more for alternatives that must wait for the full text.
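A simple additive model makes the latency gap concrete. This is a back-of-the-envelope sketch, not the benchmark's methodology: it ignores network round-trips, and every number in it (a 100-token reply, 25 ms per token, 200 ms vs. 50 ms of synthesis time, 4 tokens of context) is hypothetical.

```python
def ftts_ms(tokens_needed: int, ms_per_token: int, synth_ms: int) -> int:
    """First-token-to-speech under a simple additive model (milliseconds).

    The first token arrives at t = 0, so waiting for `tokens_needed` tokens
    costs (tokens_needed - 1) inter-token gaps; synthesis then adds `synth_ms`
    before the first audio is ready. Network time is ignored.
    """
    return (tokens_needed - 1) * ms_per_token + synth_ms


# Hypothetical agent turn: a 100-token reply generated at 25 ms/token.
RESPONSE_TOKENS = 100
MS_PER_TOKEN = 25

# Full-text TTS must wait for the last token before it can start.
batch = ftts_ms(RESPONSE_TOKENS, MS_PER_TOKEN, synth_ms=200)  # 2675
# Streaming-input TTS can start once it has a few tokens of context.
streaming = ftts_ms(4, MS_PER_TOKEN, synth_ms=50)  # 125
```

The full-text figure also grows with reply length: at 200 tokens it becomes 5,175 ms, while the streaming figure stays at 125 ms because it depends only on the first few tokens.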

Streaming text-to-speech is used in LLM-powered voice AI agents and assistants, IVR systems, healthcare applications with hands-free patient communication, e-learning platforms with AI tutors, customer service bots with real-time voice responses, automotive in-cabin assistants, and any application where a voice AI agent needs to respond without perceptible delay.

The terms "streaming" and "real-time" text-to-speech (TTS) have been used so loosely, and often so inappropriately, that their meaning has become unclear. Orca Streaming Text-to-Speech works the way a person reads text aloud as it appears: it reads streaming text input out loud as it arrives.

Current "real-time" TTS solutions stream audio output as it is generated, before the full audio file has been created. They were designed for legacy Natural Language Processing (NLP) techniques that generate output all at once. Today's Large Language Models (LLMs) work differently: they produce text incrementally, token by token. In addition to streaming audio output like traditional TTS solutions, Orca Streaming Text-to-Speech can process streaming text input as it is generated, token by token.
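To make the distinction between streaming audio output and streaming text input concrete, the sketch below logs the order of "token" and "audio" events in both modes. Both functions are hypothetical toys: "synthesis" simply emits each word, and the word-completion rule is an assumption, not how any particular engine decides it has enough context.

```python
from typing import Iterable, List, Tuple

Event = Tuple[str, str]  # ("token", text) or ("audio", word)


def output_streaming_only(tokens: Iterable[str]) -> List[Event]:
    """Streams audio out, but only after the complete text has arrived."""
    log: List[Event] = []
    text = ""
    for tok in tokens:
        log.append(("token", tok))
        text += tok
    for word in text.split():
        log.append(("audio", word))  # audio chunks, streamed after the last token
    return log


def input_and_output_streaming(tokens: Iterable[str]) -> List[Event]:
    """Consumes text as it streams in and speaks each word once it is complete."""
    log: List[Event] = []
    pending = ""
    for tok in tokens:
        log.append(("token", tok))
        pending += tok
        *complete, pending = pending.split(" ")
        log.extend(("audio", w) for w in complete if w)
    if pending:
        log.append(("audio", pending))  # end of stream: speak the last word
    return log


def first_audio_index(log: List[Event]) -> int:
    """Position of the first audio event in the log."""
    return next(i for i, (kind, _) in enumerate(log) if kind == "audio")


tokens = ["Your", " table", " is", " ready", " now"]
```

With these five tokens, the output-only engine emits its first audio event after all five tokens (index 5), while the input-and-output engine emits it right after the second token (index 2). Both speak the same words; only the timing differs.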

Orca synthesizes audio entirely on-device. No text is sent to a cloud TTS API, and no audio is transmitted to any server. It runs in air-gapped environments, in remote deployments, and on bandwidth-constrained hardware.

Both Orca and ElevenLabs offer streaming TTS, but they differ architecturally. ElevenLabs Streaming TTS processes streaming input by chunking text at punctuation marks and sending those segments to a cloud API via WebSocket. Orca processes raw LLM tokens directly on-device without requiring punctuation boundaries or a network connection.

This architectural difference allows Orca to achieve 130 ms first-token-to-speech versus ElevenLabs Streaming's 340 ms — 2.6× faster. Orca also runs entirely offline, eliminating cloud latency and third-party audio data processing.

You can reproduce the open-source benchmark to measure ElevenLabs Streaming TTS latency.
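The punctuation-based approach can be sketched as a buffer that releases a segment only when the accumulated text ends at a boundary. This is a generic illustration, not ElevenLabs' actual chunking logic; the boundary set in `BOUNDARIES` is an assumption.

```python
from typing import Iterable, Iterator, Tuple

BOUNDARIES = (".", "!", "?", ",", ";", ":")  # assumed segment boundaries


def punctuation_segments(tokens: Iterable[str]) -> Iterator[Tuple[int, str]]:
    """Yield (tokens_seen, segment) each time the buffered text ends at a boundary."""
    buf, seen = "", 0
    for tok in tokens:
        buf += tok
        seen += 1
        if buf.rstrip().endswith(BOUNDARIES):
            yield seen, buf.strip()  # only now can the segment be sent for synthesis
            buf = ""
    if buf.strip():
        yield seen, buf.strip()  # end of stream flushes any remainder


tokens = ["Your", " order", " ships", " tomorrow", " morning", "."]
first = next(punctuation_segments(tokens))  # (6, 'Your order ships tomorrow morning.')
```

For a six-token sentence with no internal punctuation, the first segment is released only after the sixth token (the period), whereas an engine that accepts raw tokens can start working on the first one.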

ElevenLabs TTS (non-streaming) requires the full text input before it begins synthesis. In the open-source TTS benchmark, ElevenLabs TTS has a first-token-to-speech latency of 1,470 ms, 11× slower than Orca's 130 ms. ElevenLabs is a cloud-only TTS solution, so independent efficiency measurements of memory, CPU, and model size are not possible.

You can reproduce the open-source benchmark to measure ElevenLabs TTS latency.

OpenAI TTS is a cloud API that does not support streaming input. It requires the complete text before synthesizing, which makes it poorly suited for LLM agents. In the open-source TTS benchmark, OpenAI TTS has a first-token-to-speech latency of 2,850 ms, 22× slower than Orca's 130 ms. OpenAI TTS is a cloud-only solution, so independent efficiency measurements of memory, CPU, and model size are not possible.

You can reproduce the open-source benchmark to measure OpenAI TTS latency.

Amazon Polly is the second-best cloud performer after ElevenLabs in the open-source TTS benchmark, with 1,540 ms latency. Because it is a cloud TTS API that cannot accept streaming input from LLM token streams, it is 12× slower than Orca. Orca eliminates the cloud round-trip entirely by running offline, and it starts synthesizing speech from LLM tokens as soon as it has enough context.

You can reproduce the open-source benchmark to measure Amazon Polly TTS latency.

Like Amazon Polly and OpenAI TTS, Azure Text-to-Speech is a cloud API that does not support raw LLM token streaming. Azure TTS requires a network connection, and its compute runs on Microsoft's infrastructure, which prevents independent efficiency measurement. In the same open-source TTS benchmark, Azure TTS has a first-token-to-speech (FTTS) latency of 1,580 ms; that latency is also subject to network conditions, and Azure TTS cannot synthesize concurrently with LLM token generation. Orca achieves 130 ms FTTS with no network dependency, making it the more suitable choice for voice AI agents that require low latency, on-device processing, or offline capability.

You can reproduce the open-source benchmark to measure Azure TTS latency.
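The "N× slower" figures in the cloud comparisons above appear to be simple ratios of each engine's reported FTTS to Orca's 130 ms. The short sketch below recomputes them from the values quoted on this page (no new measurements), rounded to one decimal.

```python
ORCA_FTTS_MS = 130

# FTTS values (ms) quoted on this page for cloud engines.
CLOUD_FTTS_MS = {
    "ElevenLabs Streaming": 340,
    "ElevenLabs": 1470,
    "Amazon Polly": 1540,
    "Azure TTS": 1580,
    "OpenAI TTS": 2850,
}


def slowdown_vs_orca(ftts_ms: int, orca_ms: int = ORCA_FTTS_MS) -> float:
    """How many times longer than Orca an engine takes to start speaking."""
    return round(ftts_ms / orca_ms, 1)


ratios = {name: slowdown_vs_orca(ms) for name, ms in CLOUD_FTTS_MS.items()}
# {'ElevenLabs Streaming': 2.6, 'ElevenLabs': 11.3, 'Amazon Polly': 11.8,
#  'Azure TTS': 12.2, 'OpenAI TTS': 21.9}
```

Rounded to whole numbers, these match the 11×, 12×, and 22× figures in the text, and the ElevenLabs Streaming ratio matches the quoted 2.6×. Azure TTS, whose ratio isn't stated above, comes out at about 12×.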

ESpeak-NG is the fastest on-device alternative to Orca, with a first-token-to-speech latency of 1,431 ms, approximately 11× slower than Orca.

ESpeak TTS checks many boxes: model size, memory usage, and even latency for non-LLM applications. Its primary tradeoff is audio quality: ESpeak TTS produces robotic, synthetic speech, while Orca produces natural, human-like speech. ESpeak TTS is suitable only for highly constrained environments where user experience is not a concern. For applications that prioritize user experience (audio quality and latency), Orca is the better fit, even in highly constrained environments.

You can reproduce the open-source benchmark to compare ESpeak TTS with other alternatives.

Chatterbox TTS Turbo produces high-quality voices, but it is the least efficient TTS in the open-source TTS benchmark by every measure. It does not support streaming audio output: it must fully synthesize all audio before playback begins. That's why Chatterbox needs 44,708 ms (~45 seconds) from first token to speech. Chatterbox TTS also requires 7,500 MB (7.5 GB) peak memory and 19 CPU core-hours to synthesize one hour of audio, and its model size is 2,980 MB. Orca achieves 130 ms first-token-to-speech, almost 350× faster than Chatterbox, using 29 MB peak memory (260× less) with a 7 MB model.

You can reproduce the open-source benchmark to see Chatterbox TTS compute requirements and latency.

Kitten TTS Nano does not support streaming audio output. It must fully synthesize all audio before playback begins, producing significant latency (~11 seconds) for voice AI applications, as shown in the open-source TTS benchmark. Kitten TTS Nano requires 320 MB peak memory, which places it in the mid-range memory group and makes it unsuitable for low-end mobile or embedded deployment. Orca uses 29 MB peak memory, approximately 11× less than Kitten TTS Nano, and achieves 130 ms FTTS, approximately 85× faster.

You can reproduce the open-source benchmark to compare Kitten TTS Nano against other alternatives.

Kokoro TTS is an on-device open-source TTS that does not support streaming input. In the open-source TTS benchmark, Kokoro TTS requires over 1 GB peak memory (vs. Orca's 29 MB), placing it in the memory group unsuitable for mobile and embedded deployment. Because it cannot synthesize concurrently with LLM token generation, it adds the full synthesis delay on top of LLM latency, resulting in 2,920 ms latency (vs. Orca's 130 ms). For LLM-powered voice AI agents, or any application running on mobile devices, Raspberry Pi, or other memory-constrained environments, Orca is much better suited than Kokoro.

You can reproduce the open-source benchmark to compare Kokoro TTS against other alternatives.

Neu TTS Nano requires over 1 GB peak memory even in its quantized Q4 GGUF version, making it one of the most resource-intensive TTS alternatives. Neu TTS Nano does not support streaming input and starts playing audio only after a 2,551 ms delay, as shown in the open-source TTS benchmark. For voice AI agents and applications on consumer mobile devices or embedded hardware, Neu TTS Nano is not a deployable option compared to Orca's 130 ms FTTS (first-token-to-speech) with 29 MB peak memory and a 7 MB model. Orca operates in a completely different resource class from Neu TTS while delivering speech with much lower latency.

You can reproduce the open-source benchmark to see the Neu TTS Nano compute requirements and latency.

Piper TTS is an on-device open-source TTS with over 1 GB peak memory and no streaming input support. It falls in the benchmark's highest memory group, so it is poorly suited to low- and mid-range mobile devices or embedded systems. Orca, with 29 MB peak memory and the ability to process streaming input, achieves 130 ms FTTS. For production voice AI agents, Piper TTS introduces latency and memory constraints that Orca does not, making Orca the better fit.

You can reproduce the open-source benchmark to compare Piper TTS against other alternatives.

Pocket TTS is an on-device open-source TTS requiring 620 MB peak memory, which makes it suitable only for high-end mobile and desktop environments. It does not support streaming input, resulting in 1,670 ms latency in the open-source TTS benchmark: slightly slower than Amazon Polly and Azure TTS, but faster than OpenAI TTS. Pocket TTS is also one of the most efficient TTS options, with a CPU core-hour ratio of 0.38×, though that is still 2.3× the compute of Orca's 0.16×. Because Pocket TTS, unlike ElevenLabs Streaming and Orca, doesn't support streaming input, it cannot begin synthesizing until the LLM finishes. For voice AI agents where both latency and resource efficiency matter, Orca is the stronger choice across all measured dimensions.

You can reproduce the open-source benchmark to compare Pocket TTS vs other alternatives.
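The "CPU core-hour ratio" figures, as this page appears to use them, mean CPU core-hours spent per hour of synthesized audio: Chatterbox's 19 core-hours per audio hour is a ratio of 19×, and Orca's 0.16× works out to roughly 9.6 core-minutes per audio hour. The sketch below shows that arithmetic and one plausible way to measure it with Python's `time.process_time()`, which sums user and system CPU time for the current process. The benchmark's exact methodology may differ, and `synthesize` is a hypothetical callable.

```python
import time
from typing import Callable


def core_hour_ratio(cpu_seconds: float, audio_seconds: float) -> float:
    """CPU core-hours per hour of synthesized audio.

    Both inputs are in seconds, so the units cancel: 1.0 means one fully busy
    core for every second of audio produced.
    """
    return cpu_seconds / audio_seconds


def measure_core_hour_ratio(synthesize: Callable[[str], int],
                            text: str, sample_rate: int) -> float:
    """Measure one synthesis call using process CPU time.

    `synthesize` is a hypothetical callable that synthesizes `text` and returns
    the number of audio samples produced at `sample_rate` Hz.
    """
    start = time.process_time()  # user + system CPU time of this process
    num_samples = synthesize(text)
    cpu_seconds = time.process_time() - start
    return core_hour_ratio(cpu_seconds, num_samples / sample_rate)


# Chatterbox's quoted 19 core-hours per audio hour is a ratio of 19.
chatterbox = core_hour_ratio(cpu_seconds=19 * 3600, audio_seconds=3600)  # 19.0
```

Using CPU time rather than wall-clock time means a multi-threaded engine is charged for every core it keeps busy, which is what makes the ratio comparable across engines.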

Soprano TTS needs 710 MB of RAM to synthesize speech, limiting it to high-end mobile and desktop deployments. Its architecture does not support streaming input, and it has a CPU core-hour ratio of 5.7×, which hinders user experience in real-time applications. For any latency-sensitive application, or any deployment targeting mid-range or lower-end devices such as Android, iOS, Raspberry Pi, or web browsers, Orca is the better choice: it supports both streaming input and output, operates within the sub-30 MB memory threshold, and has a CPU core-hour ratio of 0.16×.

You can reproduce the open-source benchmark to compare Soprano TTS vs other alternatives.

Supertonic TTS 2 requires 520 MB peak memory, making it viable for mid-range and higher-end mobile devices but not for embedded or web deployments. It does not support streaming input, resulting in 2,450 ms latency. Orca uses 29 MB peak memory, about 18× less than Supertonic, and synthesizes concurrently with LLM token generation, starting audio playback after 130 ms, about 19× faster than Supertonic.

You can reproduce the open-source benchmark to compare Supertonic TTS vs other alternatives.
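The same ratio arithmetic applies to the on-device engines, this time for memory as well as latency. The sketch below uses only figures quoted on this page; values given only as "over 1 GB" or "~11 seconds" are left as `None` rather than guessed, and Piper TTS is omitted because no single-number figures are quoted for it.

```python
from typing import Dict, Optional, Tuple

ORCA_FTTS_MS = 130
ORCA_PEAK_MB = 29

# (FTTS in ms, peak memory in MB) as quoted on this page;
# None = not quoted as a single number.
ON_DEVICE: Dict[str, Tuple[Optional[int], Optional[int]]] = {
    "ESpeak-NG": (1431, None),
    "Chatterbox TTS Turbo": (44708, 7500),
    "Kitten TTS Nano": (None, 320),
    "Kokoro TTS": (2920, None),
    "Neu TTS Nano": (2551, None),
    "Pocket TTS": (1670, 620),
    "Soprano TTS": (None, 710),
    "Supertonic TTS 2": (2450, 520),
}


def ratio(value: Optional[int], baseline: int) -> Optional[float]:
    """value / baseline rounded to one decimal, or None if value is unknown."""
    return None if value is None else round(value / baseline, 1)


on_device_ratios = {
    name: (ratio(ftts, ORCA_FTTS_MS), ratio(peak, ORCA_PEAK_MB))
    for name, (ftts, peak) in ON_DEVICE.items()
}
```

For example, Chatterbox TTS Turbo comes out at about 344× Orca's latency and 259× its peak memory, and Supertonic TTS 2 at about 19× the latency and 18× the memory.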

Orca Streaming Text-to-Speech works with closed-source as well as free and open large language models, including models from Anthropic, Cohere, Google, Meta, Microsoft, Mistral, OpenAI, and others.

Orca also supports async processing: it can convert predefined (static) text into audio, either as a complete audio file or as incrementally streamed audio. Please visit our docs for more information.
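Whichever mode is used, the output ends up as PCM samples. As a hedged sketch, here is how mono 16-bit PCM could be saved to a WAV file with Python's standard `wave` module, either from a single complete buffer or chunk by chunk from a stream. The 16-bit mono format and the 22,050 Hz sample rate in the usage example are assumptions for illustration; check the SDK docs for the actual output format.

```python
import os
import struct
import tempfile
import wave
from typing import Iterable, Sequence


def write_pcm_wav(path: str, chunks: Iterable[Sequence[int]], sample_rate: int) -> int:
    """Write mono 16-bit PCM chunks to a WAV file; return the samples written.

    One chunk = a complete recording written in one go; an iterator of chunks
    (e.g. from a streaming session) is appended as each chunk arrives.
    """
    total = 0
    with wave.open(path, "wb") as wav:
        wav.setnchannels(1)        # mono (assumed)
        wav.setsampwidth(2)        # 2 bytes per sample = 16-bit (assumed)
        wav.setframerate(sample_rate)
        for chunk in chunks:
            # WAV stores samples as little-endian signed 16-bit integers.
            wav.writeframes(struct.pack(f"<{len(chunk)}h", *chunk))
            total += len(chunk)
    return total


# Hypothetical PCM: 100 samples of silence, then a 100-sample square wave.
path = os.path.join(tempfile.mkdtemp(), "reply.wav")
written = write_pcm_wav(path, [[0] * 100, [1000, -1000] * 50], sample_rate=22050)
```

Because `writeframes` can be called repeatedly, the same function handles both a single complete buffer and a generator of streamed chunks; the WAV header's frame count is finalized when the file is closed.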

Orca Streaming Text-to-Speech supports English, French, German, Italian, Japanese, Korean, Portuguese, and Spanish voice models. If you have an immediate need for another language, contact sales with the details of your project.

Contact sales to explore our white-glove support services, including customization of Orca Streaming Text-to-Speech for brands that want to represent their "voice" via unique, custom voices.

The Orca Streaming Text-to-Speech base model allows developers to adjust the speed of the selected voice. Custom Orca Streaming Text-to-Speech models can be leveraged for further voice tuning. Talk to sales with your project requirements and get a custom text-to-speech model that fits your needs.

Orca Streaming Text-to-Speech can be customized with application-, company-, domain-, or industry-specific vocabulary.

Custom Orca Streaming Text-to-Speech models generate voices with emotions and styles, including joy, anger, whispering, and shouting. Contact sales with your project requirements to get your custom text-to-speech model trained.

Picovoice docs, blog, Medium posts, and GitHub are great resources to learn about voice AI, Picovoice technology, and how to add AI-generated voice to your product. Enterprise customers get dedicated support specific to their applications from Picovoice Product & Engineering teams. Reach out to your Picovoice contact or contact sales to discuss support options.