Blog

How to evaluate voice AI in noisy environments such as call centers and operating rooms, using objective measures such as signal-to-noise ratio (SNR), to build noise-robust products at scale.

Compare call screening options for OEMs and telcos. A strategic guide to call screening and spam filter alternatives to Google that use on-device AI.

Build a voice-powered customer survey for web. Collect spoken feedback with on-device speech recognition — no LLM, no cloud APIs needed.

Learn what speech intelligence is, how it differs from speech analytics & how real-time ASR + NLP unlocks automation and actionable insights from spoken data.

Learn why standard quantization fails below 4 bits, how x-bit allocation works, and how GPTQ, GGUF, SpinQuant, and picoLLM compare for on-device LLM deployment.

Build a hands-free voice inspection form for web with voice form filling and voice data entry via spoken language understanding and real-time speech-to-text.

Voice recognition is reshaping banking, healthcare, manufacturing, and more. Explore ten real-world use cases, each with a hands-on, on-device voice AI solution.

Build a smart IVR for contact center automation with Python. A complete customer service voice AI tutorial with intelligent call routing.

Learn how to add voice control to smart TVs with Python. This tutorial walks through on-device voice search using a wake word, speech recognition, and a local LLM.

Create an on-device restaurant voice agent with Python for hands-free customer ordering, menu inquiries, and order management without cloud dependency.

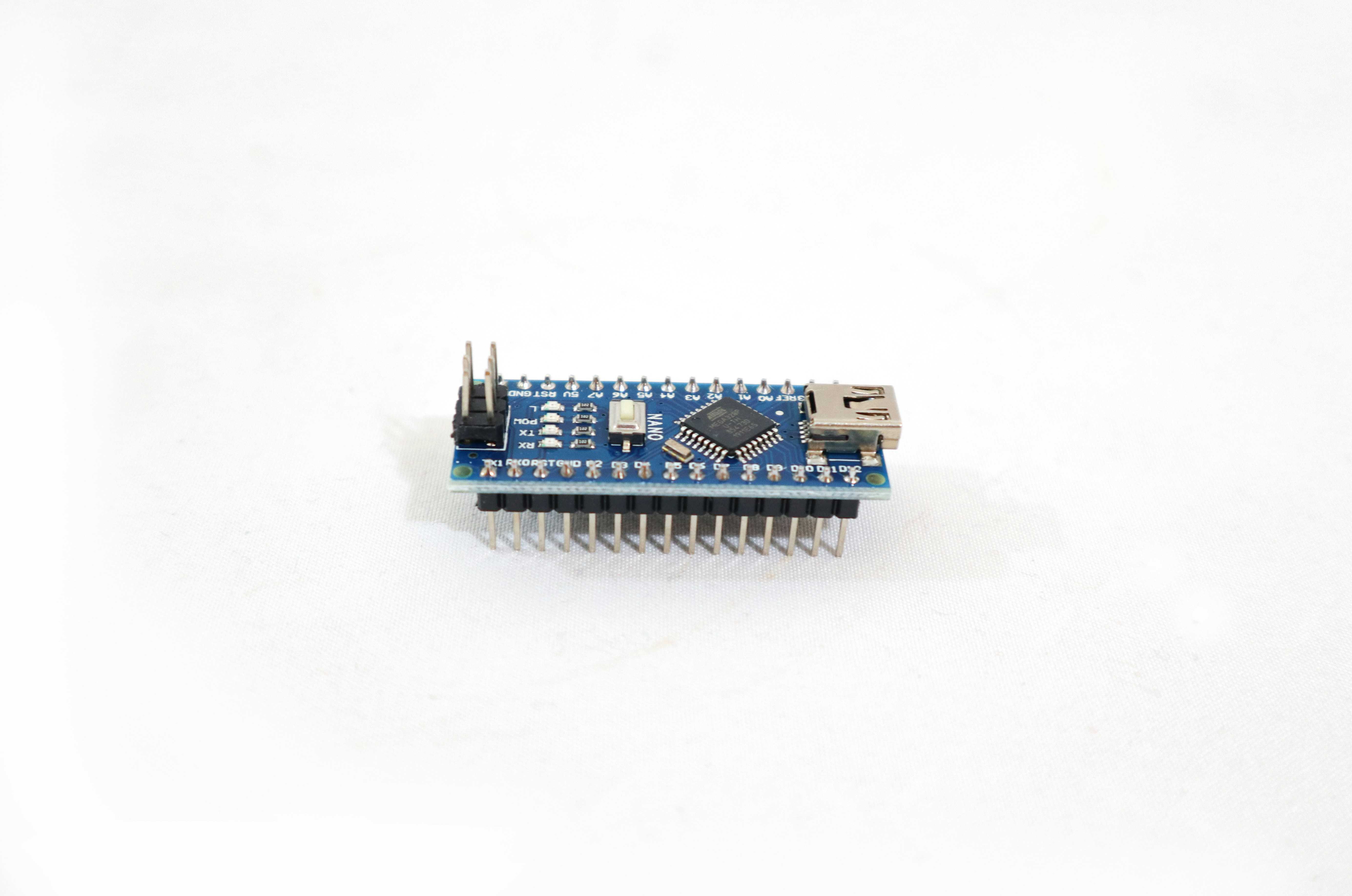

Add voice control to the Arduino Nano 33 BLE Sense. Train a custom wake word and a speech-to-intent model to process voice commands on the microcontroller.

Build a hands-free smart manufacturing voice agent with Python. Use local LLMs and on-device voice control to manage equipment and factory data.

Create a fully on-device hotel room voice assistant with Python for private, cloud-free room control, guest services, and smart IoT automation.

Medical Language Models (Medical LLMs or Healthcare LLMs) are AI systems specifically trained on clinical literature, medical records, and healthcare data to understand medical terminology, generate clinical documentation, and assist with diagnostic reasoning.

Real-time transcription converts speech to text as someone speaks, with less than one second of latency. It processes audio continuously, enabling use cases such as live captions, meeting transcription, voice assistants, and accessibility features across industries.