Text-to-speech (TTS) technology has come a long way, but the Generative AI era is posing new challenges.

Traditional TTS systems process predefined text in single batches. The rise of sequential text production by LLMs, outputting text in chunks, demands a more dynamic approach. Modern TTS systems must be able to handle an input stream of text and convert it to consistent audio in real time. This is essential for enabling conversational AI agents with zero-latency audio responses.

What Is Streaming TTS?

The terminology surrounding streaming capabilities of TTS systems remains a source of confusion. To clarify, we can distinguish between three categories:

- Single Synthesis: The classic model. Complete text input yields a single, complete audio file. This is the basic

feature

of all

TTSproviders. - Output Streaming Synthesis: Complete text is still the input, but audio is produced in chunks. This is simply

referred

to as

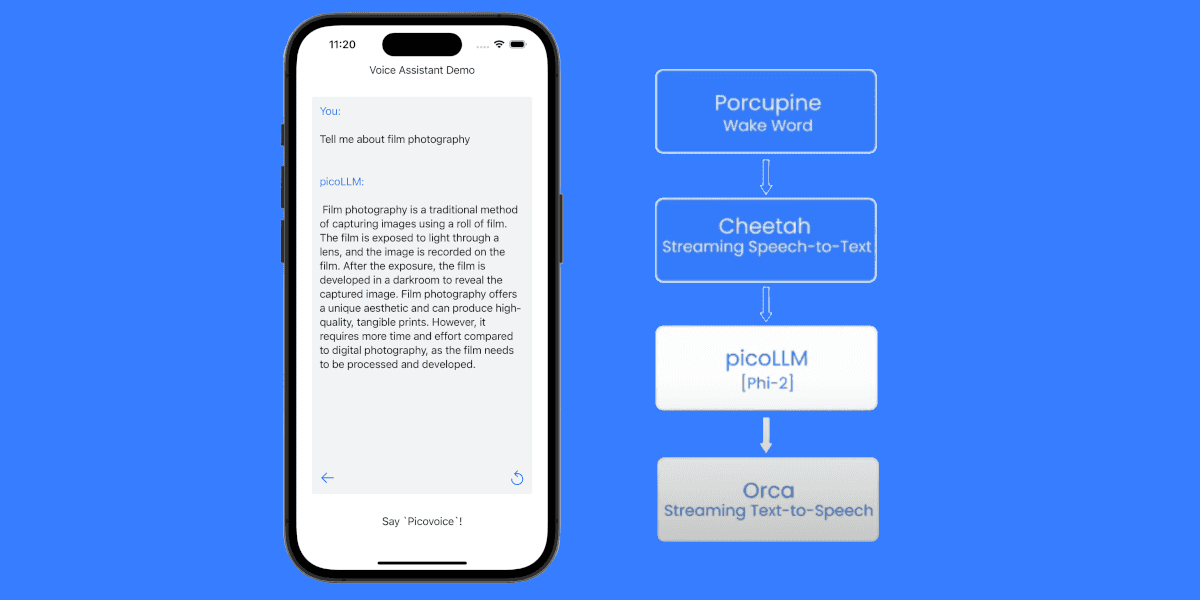

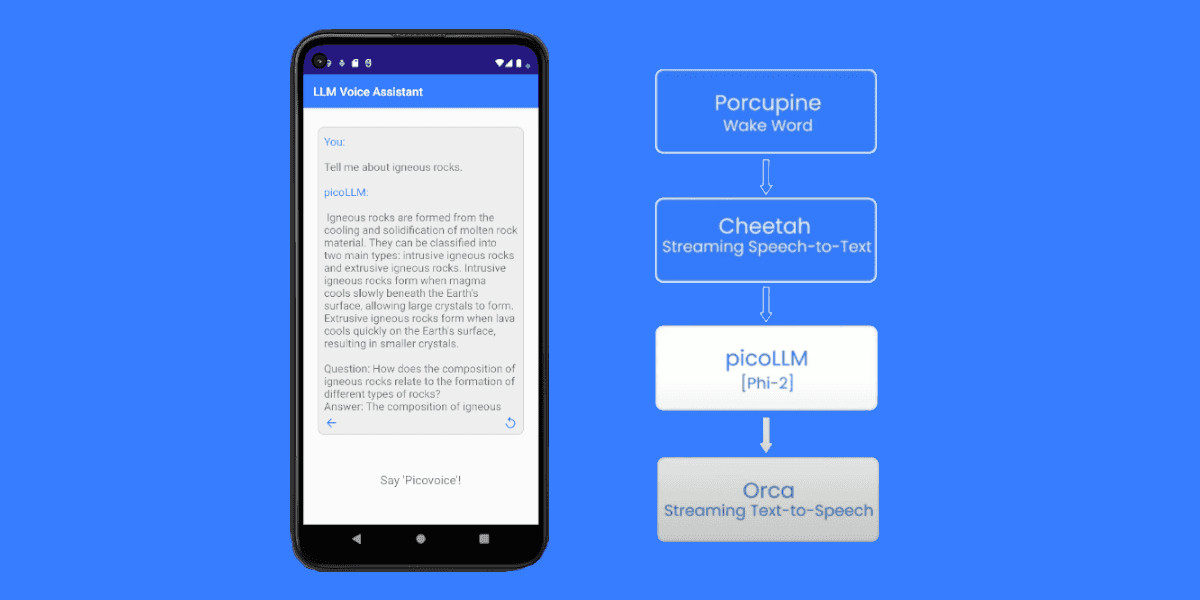

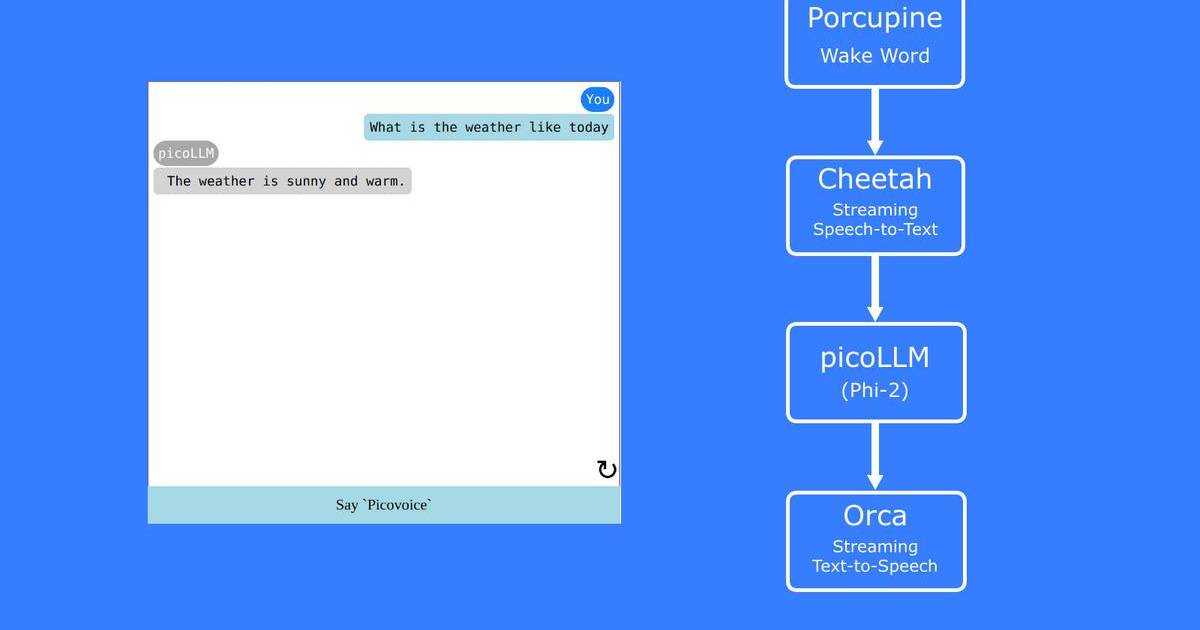

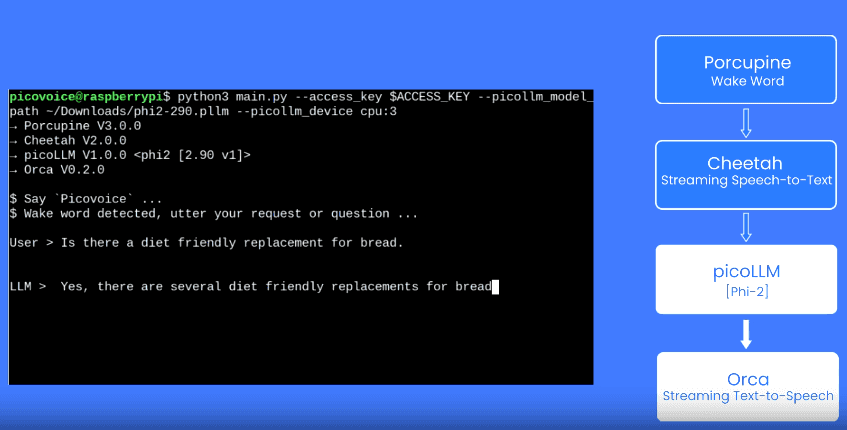

Streaming TTSby manyTTSproviders, such asAmazon Polly,Azure Text-to-Speech,ElevenLabs, andOpenAI. - Dual Streaming Synthesis: This is cutting-edge. Text is fed to the system in chunks (often word-by-word or even character-by-character), and audio chunks are returned as soon as there is enough context to produce natural speech. Orca Streaming Text-to-Speech is the solution we built to achieve this. We also refer to this type of streaming as PADRI engine.
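To make the difference between the three categories concrete, here is a minimal Python sketch of their input and output shapes. It is purely illustrative: `fake_vocoder` is a hypothetical stand-in that turns text into bytes, and none of these function names correspond to Orca's or any other provider's API. The `min_context` lookahead is likewise an assumed, simplified way of modeling "enough context".

```python
from typing import Iterable, Iterator, List


def fake_vocoder(text: str) -> bytes:
    """Hypothetical stand-in for a TTS model: one byte of 'audio' per character."""
    return text.encode("utf-8")


def single_synthesis(text: str) -> bytes:
    """Complete text in, one complete audio buffer out."""
    return fake_vocoder(text)


def output_streaming_synthesis(text: str, chunk_size: int = 8) -> Iterator[bytes]:
    """Complete text in, audio returned in chunks."""
    audio = fake_vocoder(text)
    for start in range(0, len(audio), chunk_size):
        yield audio[start:start + chunk_size]


def dual_streaming_synthesis(text_chunks: Iterable[str],
                             min_context: int = 2) -> Iterator[bytes]:
    """Text chunks in, audio chunks out as soon as enough context is buffered."""
    pending: List[str] = []
    for chunk in text_chunks:
        pending.append(chunk)
        # Keep the newest `min_context` chunks as lookahead and emit
        # audio for anything older than that.
        while len(pending) > min_context:
            yield fake_vocoder(pending.pop(0))
    # End of the text stream: flush whatever is still buffered.
    for chunk in pending:
        yield fake_vocoder(chunk)
```

Only the dual streaming variant can start producing audio while the LLM is still generating text. In this sketch it emits its first audio chunk after reading three text chunks, while the other two functions cannot start until the full text is available.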

While single synthesis may be sufficient for tasks like content creation, a dual streaming TTS feature is essential for real-time conversations with AI voice agents.

In the video below, OpenAI TTS supports Output Streaming Synthesis while Orca supports Dual Streaming Synthesis.

Why Is Overcoming TTS Latency Hard?

Dual Streaming TTS mimics how humans read aloud a text stream as it unfolds. Modeling this behavior with neural networks is challenging for two primary reasons:

- The neural network model needs to be able to synthesize natural speech with very limited context and before knowing the end of the sentence. Many of the current TTS architectures, such as plain transformer models or LSTMs, process text bi-directionally and therefore need the complete text to be present before synthesis.

- Creating smooth transitions between audio chunks while avoiding audio artifacts is a challenge. Current model architectures often require a wide receptive field, which implies that the audio at time X seconds is influenced by text pronounced only at time X+Y seconds, with Y extending up to several seconds. This poses a difficulty in ensuring both minimal latency until the first audio chunk is produced and smooth transitions for subsequent chunks.

The receptive field of a neural network refers to the length of the input data that influences a single output.
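As a rough illustration of how a receptive field grows, the sketch below computes it for a stack of stride-1 1D convolutions, a common building block in speech models. The layer configuration is an assumption chosen for the example and does not describe Orca or any specific TTS architecture. The lookahead calculation assumes centered kernels with odd sizes.

```python
from typing import Sequence, Tuple

Layer = Tuple[int, int]  # (kernel_size, dilation)


def receptive_field(layers: Sequence[Layer]) -> int:
    """Receptive field, in input steps, of stacked stride-1 1D convolutions."""
    field = 1
    for kernel_size, dilation in layers:
        # Each layer widens the field by (kernel_size - 1) * dilation steps.
        field += (kernel_size - 1) * dilation
    return field


def lookahead_steps(layers: Sequence[Layer], causal: bool) -> int:
    """Future input steps that must be known before one output step is final."""
    if causal:
        # Causal convolutions only look at past input.
        return 0
    # Centered kernels split the field evenly between past and future.
    return (receptive_field(layers) - 1) // 2


# Hypothetical stack: four layers, kernel size 3, dilations 1, 2, 4, 8.
example_layers = [(3, 1), (3, 2), (3, 4), (3, 8)]
print(receptive_field(example_layers))                # 31
print(lookahead_steps(example_layers, causal=False))  # 15
print(lookahead_steps(example_layers, causal=True))   # 0
```

The non-causal stack has to wait for 15 future input steps before it can finalize an output, which is the kind of lookahead that inflates latency in a streaming setting.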

Ever More Challenging Requirements

More and more advanced features are expected for the new generation of voice assistants:

- Fast response time: To ensure minimal latency, the model must be computationally fast in addition to supporting streaming.

- Natural: While the naturalness of TTS in single synthesis has made significant progress, there's still room for improvement to achieve human-like interactions in multi-turn conversations.

- Context awareness: An ideal TTS system responds with adequate tone and emotions. OpenAI's GPT-4o shows promise in this regard with more accurate emotional responses and improved inflections. However, its incompatibility with custom LLMs limits its use cases.

- Interruption handling: Smooth conversations require the ability to interrupt the voice agent.

The first two features described are intrinsic to the TTS model, while the remaining two can be enhanced either by developing systems surrounding the TTS system or by supplying it with additional inputs like emotion labels.
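Interruption handling is a good example of logic that can live around the TTS system rather than inside it. The sketch below shows one possible approach, assuming audio arrives as an iterable of chunks and that some other component (for example, a voice activity detector) sets a flag when the user starts speaking. The function and parameter names are hypothetical and not part of any TTS SDK.

```python
import threading
from typing import Callable, Iterable


def play_until_interrupted(audio_chunks: Iterable[bytes],
                           interrupted: threading.Event,
                           play: Callable[[bytes], None]) -> int:
    """Play chunks in order and stop as soon as `interrupted` is set.

    Returns the number of chunks that were actually played.
    """
    played = 0
    for chunk in audio_chunks:
        if interrupted.is_set():
            # The user started speaking: drop the remaining audio.
            break
        play(chunk)
        played += 1
    return played
```

Because audio is handled chunk by chunk, the agent can stop within one chunk's duration of the interruption. With single synthesis, the whole utterance would already be queued for playback.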

Use cases for the new wave of voice agents include IVR (interactive voice response) systems, call centers, automatic receptionists, automatic ordering systems, and more. For more information, refer to our previous blog post.

See for yourself and start building with Orca Streaming Text-to-Speech.