Voice has been central to how humans interact with each other for centuries. Researchers have been trying to enable similar interaction with machines for decades. Recently, Large Language Models (LLMs) have been a game changer: they bring human-like intelligence to machines, with accurate, fast responses that unfold right before our eyes.

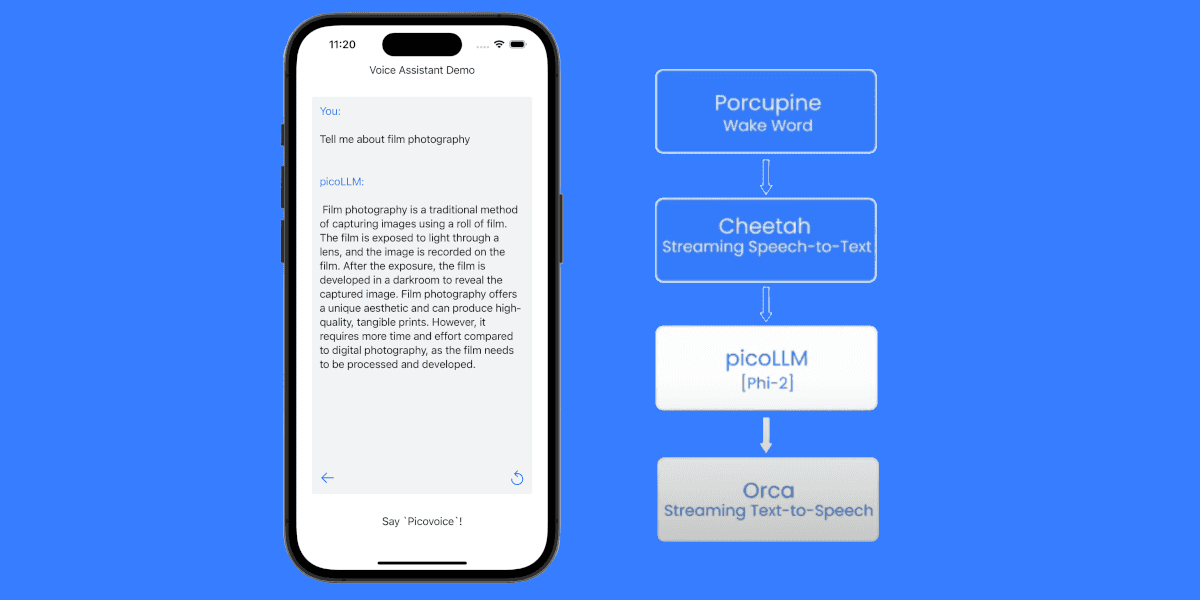

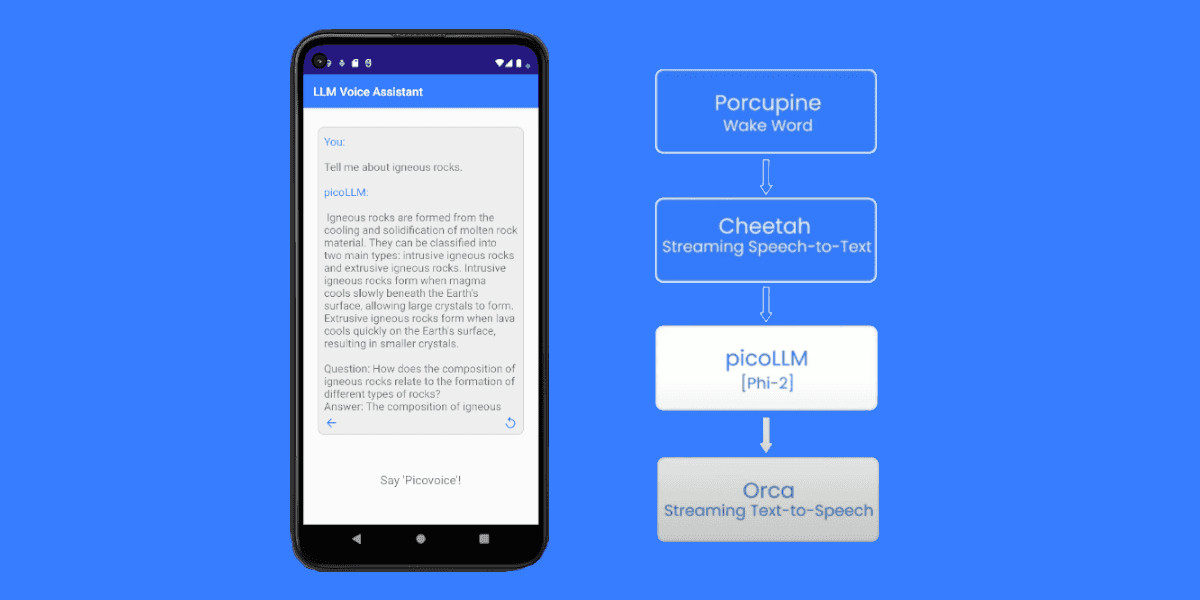

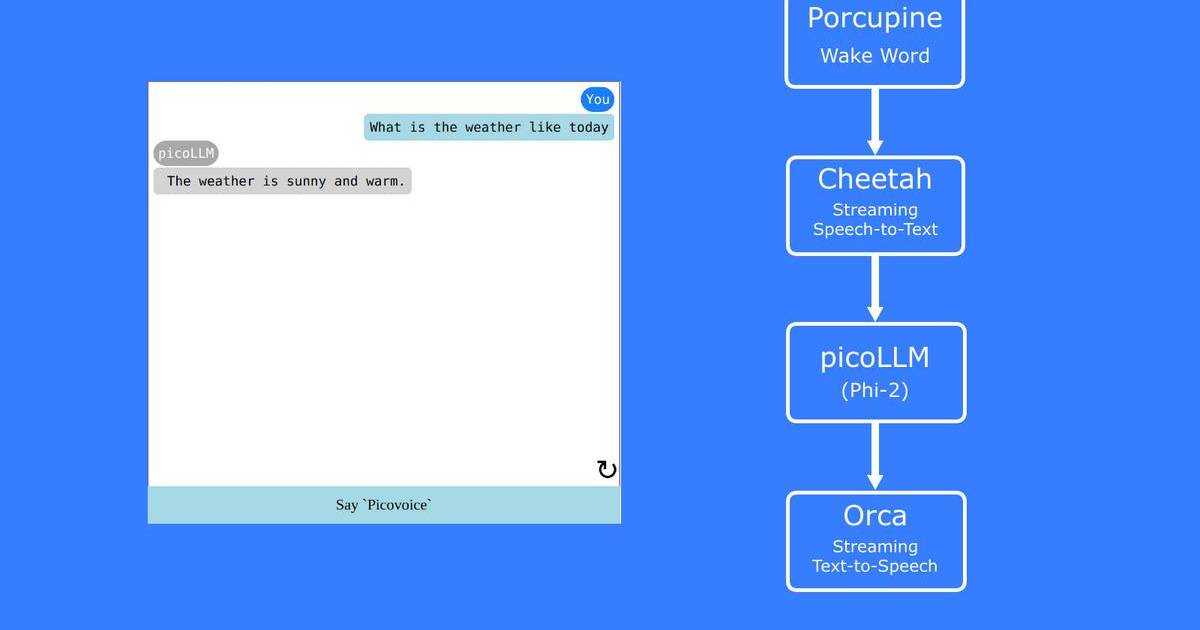

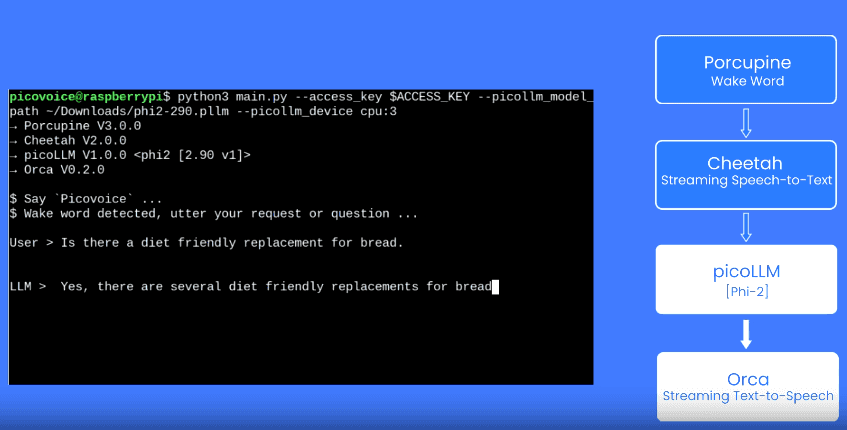

Pairing LLMs with robust voice AI engines can finally mimic human-to-human interaction, enabling dynamic applications such as customer service agents or live translation, where real-time interaction is key. Wake Word and on-device streaming Speech-to-Text engines handle voice input processing to achieve this goal. However, traditional Text-to-Speech was not developed with streaming use cases, such as LLMs, in mind. Hence, traditional Text-to-Speech is the weak link in building dynamic applications that leverage LLMs.

This article explains why existing Text-to-Speech solutions are not fit for LLM applications.

Understanding Large Language Models

Large Language Models, trained on vast amounts of data, predict and generate text by understanding the context and semantics of a language. LLMs are not just more accurate or versatile than earlier Natural Language Processing (NLP) models. They also differ in how they respond: they generate text incrementally, a token at a time, so a response starts appearing in real time, making interactions more engaging and dynamic.

Understanding Text-to-Speech

Text-to-Speech (TTS) technology converts written text into spoken words. Behind the scenes, TTS engines process text and synthesize speech in chunks, turning each chunk into audio humans can listen to. Because of this, developers must design and set up their products to feed the TTS engine manageable text segments.
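To make "manageable text segments" concrete, here is a minimal Python sketch of the kind of pre-processing a developer might write before calling a chunk-based TTS engine. It splits text at sentence boundaries and caps each segment at a `max_chars` limit. The function name and the 200-character default are hypothetical, not taken from any particular TTS product; real engines impose their own limits.

```python
import re
from typing import List


def split_into_segments(text: str, max_chars: int = 200) -> List[str]:
    """Split text into sentence-sized segments no longer than max_chars.

    Hypothetical helper for a chunk-based TTS engine; the limit is an assumption.
    """
    # Split after sentence-ending punctuation that is followed by whitespace.
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    segments: List[str] = []
    current = ""
    for sentence in sentences:
        if not sentence:
            continue
        candidate = f"{current} {sentence}".strip()
        if len(candidate) <= max_chars:
            # The sentence still fits in the current segment.
            current = candidate
            continue
        # Adding the sentence would exceed the limit: close the current segment.
        if current:
            segments.append(current)
        # Hard-wrap a single sentence that is itself too long (may cut words).
        while len(sentence) > max_chars:
            segments.append(sentence[:max_chars])
            sentence = sentence[max_chars:].lstrip()
        current = sentence
    if current:
        segments.append(current)
    return segments
```

For example, `split_into_segments("Hello there. How are you today? I am fine!", max_chars=20)` yields one segment per sentence, while the default limit keeps all three sentences together. Note that every segment must be complete before it is sent, which is exactly the waiting the next section describes.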

Inherent Delay in Combining LLMs with TTS

An LLM generates text as a stream, much like a person typing out a message, whereas TTS waits for the full text, or at least a full segment of it, before it starts synthesizing speech. This creates a delay between text generation and spoken output, which can disrupt the flow of real-time interaction.
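A back-of-the-envelope model makes this delay concrete. The Python sketch below assumes an LLM emitting tokens at a steady rate and a TTS engine with a fixed time to produce its first audio; the function name and all numbers are illustrative assumptions, not measurements of any product.

```python
def time_to_first_audio(tokens_needed: int, tokens_per_second: float,
                        synthesis_latency_s: float) -> float:
    """Seconds from the start of LLM generation until audio can begin.

    tokens_needed: tokens the TTS must receive before it starts synthesizing.
    synthesis_latency_s: assumed time for the TTS to produce its first audio.
    """
    wait_for_text = tokens_needed / tokens_per_second
    return wait_for_text + synthesis_latency_s


# Illustrative assumptions: a 300-token answer streamed at 30 tokens/s,
# and 0.2 s for the TTS engine to produce its first audio.
full_text = time_to_first_audio(300, 30.0, 0.2)      # waits for the whole answer
first_sentence = time_to_first_audio(20, 30.0, 0.2)  # waits for about one sentence
print(f"full text: {full_text:.2f} s, first sentence: {first_sentence:.2f} s")
# full text: 10.20 s, first sentence: 0.87 s
```

Even the segment-based case still waits for a complete sentence; the waiting term shrinks further only if the TTS can start on the first few words.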

Finding the Ideal Text-to-Speech for LLM Apps

The ideal TTS for LLMs should be like a skilled narrator, reading the story as it unfolds: it should start reading as soon as the LLM starts producing output. Applications can then play the audio response while still receiving data, reducing perceived latency. To achieve that, TTS needs streaming capability with minimal latency.

- Streaming Capability: A TTS engine that processes text data continuously lets users receive audio output as the data comes in. Thus, it has a natural advantage over a traditional TTS that processes text in predefined segments.

- Minimal Latency: A TTS engine with on-device processing doesn’t send data to remote servers, which eliminates network latency. Thus, it has a natural advantage over cloud-dependent APIs that rely on third parties: there are no connectivity issues with the ISP or data center.
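The skilled-narrator behavior can be sketched as a simple loop: each token from the LLM goes to the TTS engine immediately, and any audio the engine returns is played right away. The `ToyStreamingTTS` class, its `synthesize`/`flush` methods, and the fake token generator are hypothetical stand-ins, not a real engine's API. As a simplifying assumption, the toy engine buffers only until it has a complete word, since LLM tokens often split words, and it returns placeholder bytes instead of real audio samples.

```python
from typing import Iterator, List, Optional


class ToyStreamingTTS:
    """Hypothetical streaming TTS stand-in: 'speaks' each complete word."""

    def __init__(self) -> None:
        self._buffer = ""

    def synthesize(self, text_chunk: str) -> Optional[bytes]:
        self._buffer += text_chunk
        # Only words followed by whitespace are known to be complete.
        cut = max(self._buffer.rfind(" "), self._buffer.rfind("\n"))
        if cut < 0:
            return None  # not enough text yet; keep waiting
        ready, self._buffer = self._buffer[:cut].strip(), self._buffer[cut + 1:]
        # Placeholder bytes stand in for synthesized PCM audio.
        return f"<audio:{ready}>".encode() if ready else None

    def flush(self) -> Optional[bytes]:
        # Synthesize whatever is left once the text stream has ended.
        ready, self._buffer = self._buffer.strip(), ""
        return f"<audio:{ready}>".encode() if ready else None


def fake_llm_tokens() -> Iterator[str]:
    # Simulated LLM output arriving token by token (note the split word).
    yield from ["The ", "story ", "unfo", "lds ", "as ", "we ", "read."]


def narrate(tokens: Iterator[str], tts: ToyStreamingTTS) -> List[bytes]:
    played: List[bytes] = []
    for token in tokens:
        audio = tts.synthesize(token)
        if audio is not None:
            played.append(audio)  # a real app would send this to the speaker
    tail = tts.flush()
    if tail is not None:
        played.append(tail)
    return played


print(narrate(fake_llm_tokens(), ToyStreamingTTS()))
```

Audio for "The" is ready after the first token, long before the LLM finishes; the split token "unfo" simply waits for "lds " to complete the word.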

Challenges

The terms "streaming" and "real-time" Text-to-Speech (TTS) have been used excessively and often inappropriately, leading to confusion about what they actually mean. Providing “streaming” audio output is different from processing “streaming” text input. Existing TTS engines can achieve the former, but real-time applications need the latter. At the time of writing, we could not find a “real” streaming Text-to-Speech engine that can process streaming text input, let alone do so locally without network latency. This may surprise many, given the advances in LLMs and the number of TTS options on the market. To better understand this issue, let's break it down into two buckets:

First, developing a small and efficient on-device TTS is difficult. The newest TTS engines follow similar paths, improving performance by increasing model size, which makes them cloud-dependent. They try to minimize network latency through hardware, which addresses neither ISP-related issues nor uptime.
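The point about hardware follows from simple addition: a cloud API's time to first audio is the server's compute time plus a network round trip, and faster server hardware shrinks only the first term. The Python sketch below runs the same illustrative round trips, including one slow request on a congested link, against standard and faster server hardware. The function name and all numbers are assumptions, not measurements.

```python
import statistics
from typing import List


def time_to_first_audio_ms(compute_ms: float, network_rtt_ms: List[float]) -> List[float]:
    """Per-request time to first audio: server compute plus that request's round trip."""
    return [compute_ms + rtt for rtt in network_rtt_ms]


# Illustrative round trips (ms), including one slow request on a congested link.
rtts = [40.0, 60.0, 45.0, 300.0, 55.0]

standard_hw = time_to_first_audio_ms(100.0, rtts)
faster_hw = time_to_first_audio_ms(50.0, rtts)  # assume better hardware halves compute

for name, samples in [("standard", standard_hw), ("faster", faster_hw)]:
    spread = max(samples) - min(samples)
    print(f"{name}: median {statistics.median(samples)} ms, "
          f"worst {max(samples)} ms, spread {spread} ms")
# standard: median 155.0 ms, worst 400.0 ms, spread 260.0 ms
# faster: median 105.0 ms, worst 350.0 ms, spread 260.0 ms
```

Faster hardware lowers every request by the same amount, but the spread caused by the network is unchanged. For an on-device engine the round-trip list is effectively all zeros, so that term disappears.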

Second, processing data continuously is difficult. We know this challenge from Speech-to-Text, too. Cloud-dependent APIs can never match the speed of on-device Speech-to-Text, and highly accurate on-device Speech-to-Text engines, such as Whisper, lack streaming capability. To overcome this, developers use workarounds, such as feeding the engine data in smaller chunks.
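The chunking workaround can be sketched as a buffer that groups incoming audio frames into fixed-length windows and hands each complete window to a non-streaming model. The 16 kHz sample rate, the 2-second window, and the `fixed_windows` name are assumptions for illustration; the sketch does not call Whisper or any other real engine.

```python
from typing import Iterable, Iterator, List

SAMPLE_RATE = 16_000  # Hz; a common rate for speech models (assumption)


def fixed_windows(frames: Iterable[List[int]],
                  window_seconds: float = 2.0) -> Iterator[List[int]]:
    """Group a stream of PCM frames into fixed-length windows for a batch model."""
    window_len = int(window_seconds * SAMPLE_RATE)
    buffer: List[int] = []
    for frame in frames:
        buffer.extend(frame)
        # Emit every complete window; keep the remainder for the next one.
        while len(buffer) >= window_len:
            yield buffer[:window_len]
            buffer = buffer[window_len:]
    if buffer:
        yield buffer  # final, shorter window once the stream ends


# 100 frames of 512 silent samples = 51,200 samples = 3.2 s of audio.
windows = list(fixed_windows([[0] * 512 for _ in range(100)]))
print([len(w) for w in windows])
# [32000, 19200]
```

The trade-off is built in: each window adds up to `window_seconds` of delay before transcription can start, and fixed boundaries can cut words in half. Shrinking the window lowers the delay but gives the model less context.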

Whereas the common approach is to improve “streaming” performance by optimizing other variables, we decided to design a TTS engine with streaming use cases in mind.

Orca Text-to-Speech provides developers with two options:

- On-device Text-to-Speech for pre-prepared text: It returns an audio stream in response to the submitted text, using a request-response model. It is akin to existing solutions but offers privacy and reliability, with zero network latency.

- On-device Text-to-Speech for streaming: It processes text continuously as it arrives and produces audio output as it goes. It is designed and optimized for real-time applications.
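The practical difference between a request-response engine and one that accepts streaming text input is when the first audio can be produced. The toy Python sketch below logs events from a simulated LLM and two hypothetical TTS functions: one that needs the complete text before emitting audio piece by piece, and one that speaks each token as it arrives. None of these functions is Orca's actual API, and the streaming version assumes, for simplicity, that every token is a whole word.

```python
from typing import Iterator, List


def llm_tokens(log: List[str]) -> Iterator[str]:
    # Simulated LLM stream that records when each token arrives.
    for token in ["Hello ", "from ", "the ", "model."]:
        log.append(f"token {token.strip()}")
        yield token


def tts_full_text(full_text: str, log: List[str]) -> None:
    # Request-response style: the complete text is required, then audio streams out.
    for word in full_text.split():
        log.append(f"audio {word}")


def tts_streaming_input(tokens: Iterator[str], log: List[str]) -> None:
    # Streaming input: each token is spoken as soon as it arrives.
    for token in tokens:
        log.append(f"audio {token.strip()}")


full_text_log: List[str] = []
tts_full_text("".join(llm_tokens(full_text_log)), full_text_log)

streaming_log: List[str] = []
tts_streaming_input(llm_tokens(streaming_log), streaming_log)

print(full_text_log[:5])
# ['token Hello', 'token from', 'token the', 'token model.', 'audio Hello']
print(streaming_log[:5])
# ['token Hello', 'audio Hello', 'token from', 'audio from', 'token the']
```

In the first log, no audio appears until the last token has arrived, even though the audio itself is emitted in pieces; in the second, audio follows each token.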

Orca Text-to-Speech gains continuous data processing capability without sacrificing the core Picovoice benefits. Like other Picovoice engines, Orca Text-to-Speech is:

- small and efficient, running across platforms: embedded, mobile, web, desktop, on-prem, and serverless.

- private-by-design, leaving no room for hesitation in highly regulated industries and enterprise applications.

- easy to use, allowing developers to get it up and running in a few lines of code without worrying about how to optimize and feed text segments.