Learn how to run LLMs locally using the picoLLM Inference Engine Python SDK. picoLLM Inference Engine performs LLM inference on-device, keeping your data private (i.e., GDPR and HIPAA compliant by design). picoLLM supports a growing number of open-weight LLMs, including Gemma, Llama, Mistral, Mixtral, and Phi. The picoLLM Python SDK runs on Linux, macOS, Windows, and Raspberry Pi, and supports both CPU and GPU inference.

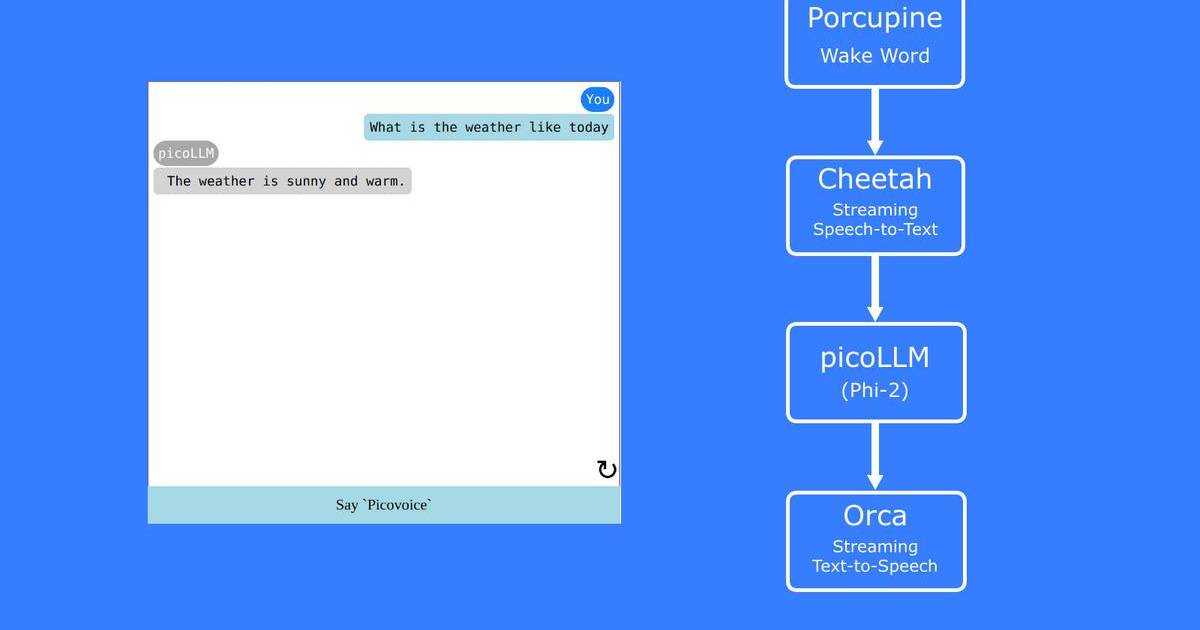

picoLLM Inference Engine also runs on Android, iOS, and modern browsers, including Chrome, Safari, Edge, and Firefox.

Install Local LLM Python SDK

Install the SDK from PyPI with pip, for example `pip3 install picollm`.

Sign up for Picovoice Console

Log in to (or sign up for) Picovoice Console. It is free, and no credit card is required! We need two things here:

- Copy your `AccessKey` to the clipboard.
- On the navbar, click the picoLLM tab, select a model, and download it. For this tutorial, we pick `llama-3-8b-instruct-326`, Llama-3-8B-Instruct compressed to 3.26 bits per parameter.

The last three digits in the model name signify the compression bit rate used for the model, in hundredths of a bit per parameter (326 means 3.26 bits per parameter). The lower the number, the higher the compression ratio. picoLLM Compression is a novel LLM compression technology that facilitates optimal quantization of LLMs across and within their weights.
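
To make the naming convention concrete, here is a small sketch that reads the bit rate from a model name and estimates the size of the compressed weights. The helper names `bits_per_parameter` and `approx_weight_size_gb` are made up for this example and are not part of the picoLLM SDK. The parameter count of 8 billion is a nominal figure, and the estimate ignores any file-format overhead, so treat the result as a rough guide rather than the actual download size.

```python
def bits_per_parameter(model_name: str) -> float:
    """Read the trailing three digits of a model name as bits per parameter."""
    suffix = model_name.rsplit("-", 1)[-1]
    if len(suffix) != 3 or not suffix.isdigit():
        raise ValueError(f"expected a three-digit suffix, got {suffix!r}")
    return int(suffix) / 100


def approx_weight_size_gb(num_parameters: float, bits: float) -> float:
    """Rough size of the weights alone, in gigabytes (10**9 bytes)."""
    return num_parameters * bits / 8 / 1e9


bits = bits_per_parameter("llama-3-8b-instruct-326")
# 8e9 is a nominal parameter count for an "8B" model (an assumption).
size_gb = approx_weight_size_gb(8e9, bits)
# Ratio relative to storing every weight in 16 bits.
ratio_vs_16_bit = 16 / bits
print(f"{bits} bits/param, about {size_gb:.2f} GB, "
      f"{ratio_vs_16_bit:.1f}x smaller than 16-bit weights")
```

Under these assumptions, a lower suffix translates directly into a smaller file: halving the bit rate halves the estimated weight size.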

Implementation

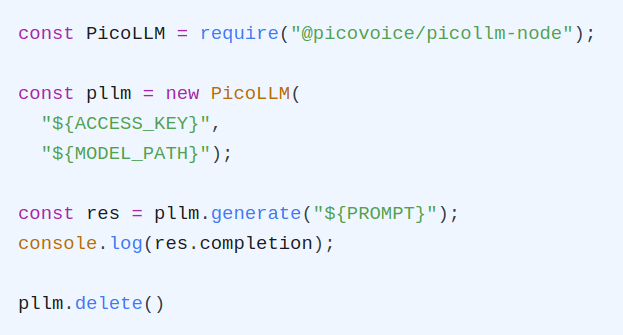

- Import the package:

- Create an instance of the picoLLM engine:

- Prompt the LLM to create a completion:
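
The three steps above can be put together in a few lines. This is a minimal sketch, assuming the `picollm` package is installed and that it exposes `picollm.create`, a `generate` method whose result has a `completion` field, and a `release` method, as described in the SDK documentation. The wrapper function `complete` is a name made up for this example.

```python
def complete(access_key: str, model_path: str, prompt: str) -> str:
    """Load a picoLLM model file and return the completion text for a prompt."""
    # Step 1: import the package. The import sits inside the function so
    # this snippet can be loaded before the package is installed.
    import picollm

    # Step 2: create an engine instance from the AccessKey copied from
    # Picovoice Console and the path to the downloaded model file.
    pllm = picollm.create(access_key=access_key, model_path=model_path)
    try:
        # Step 3: prompt the LLM; the generated text is in `completion`.
        res = pllm.generate(prompt=prompt)
        return res.completion
    finally:
        # Release the native resources held by the engine when done.
        pllm.release()
```

Calling `complete(access_key, model_path, "Suggest three names for a pet hedgehog.")` with your own AccessKey and the path to the downloaded `llama-3-8b-instruct-326` model file should return the model's completion as a string. Creating the engine loads the model, so an application that sends many prompts would normally create the engine once and reuse it rather than reloading it per call.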

What's Next?

The picoLLM Python SDK API documentation provides information about all available interfaces and parameters. The picoLLM Python SDK quick start offers step-by-step instructions on how to run LLM completion and LLM chat demos across Linux, macOS, Windows, and Raspberry Pi. The demo source code is also available on the picoLLM Inference Engine GitHub repository.