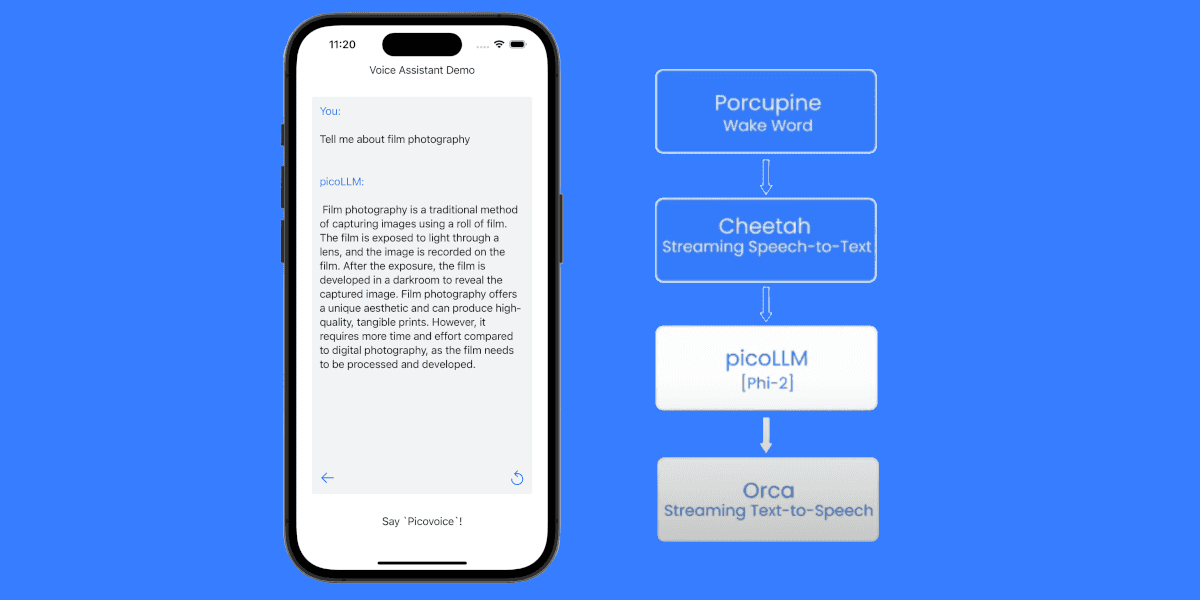

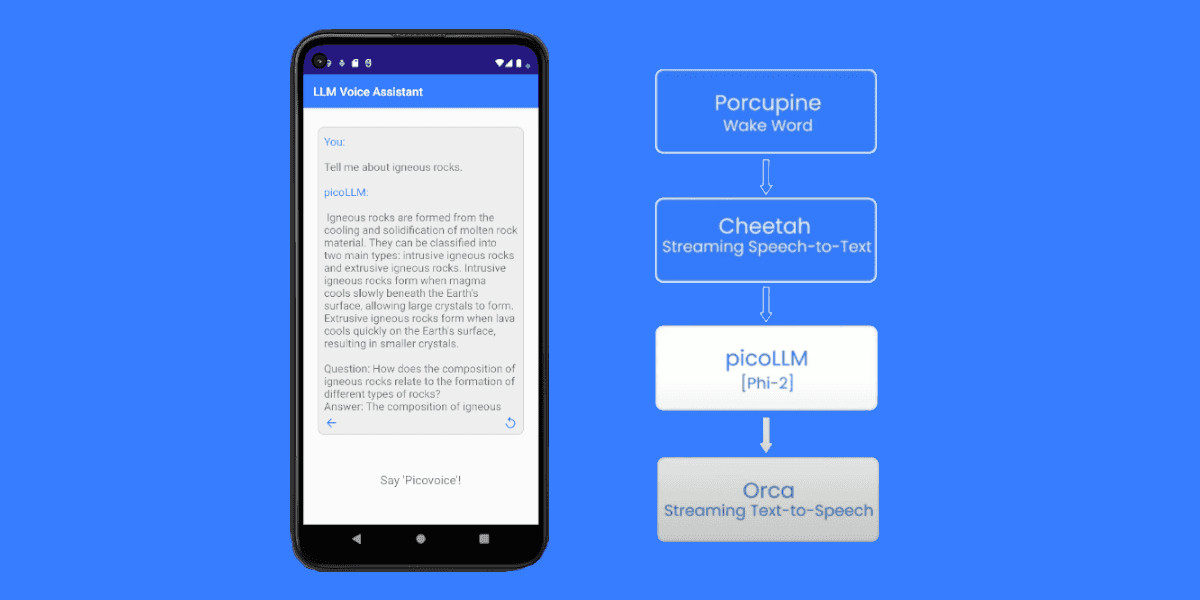

Large Language Models (LLMs), such as Llama 2 and Llama 3, are a significant advance in how AI understands and generates human-like text, with improved accuracy and context sensitivity. This makes them useful for building voice assistants and chatbots, and for other natural language processing tasks.

Most of these use cases are particularly helpful on handheld devices, since the device is always with us. However, unlike desktop applications that can take advantage of powerful CPUs and GPUs, mobile phones have hardware limitations that make fast and accurate responses difficult. Server-side solutions have their own problems: network connectivity is not always reliable, and sending personal information off the device raises privacy concerns.

Luckily, Picovoice's picoLLM Inference engine makes it easy to perform offline LLM inference. picoLLM Inference is a lightweight inference engine that runs locally. Because data never leaves the device, it supports compliance with privacy regulations such as GDPR and HIPAA, and it stays usable where network connectivity is a concern. Llama models compressed by picoLLM Compression are small enough to run on most iOS devices.

picoLLM Inference also runs on Android, Linux, Windows, macOS, Raspberry Pi, and Web Browsers. If you want to run Llama across platforms, check out other tutorials: Llama on Android, Llama for Desktop Apps and Llama within Web Browsers.

In just a few lines of code, you can start performing LLM inference using the picoLLM Inference iOS SDK. Let’s get started!

Before Running Llama on iOS

Prerequisites

Xcode and CocoaPods are required before setting up the iOS app.

Install picoLLM Packages

Create a project and install picoLLM-iOS using CocoaPods. Add the following to your app’s Podfile:
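A typical Podfile entry looks like the sketch below, assuming the default CocoaPods layout. No version constraint is pinned here, so add one if your project needs it:

```ruby
# Podfile (sketch): ${PROJECT_TARGET} is a placeholder for your app's target name.
target '${PROJECT_TARGET}' do
  pod 'picoLLM-iOS'
end
```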

Replace ${PROJECT_TARGET} with your app’s target name. Then run the following:
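With a standard CocoaPods setup, the install step is run from the directory that contains the Podfile:

```shell
# Resolves and installs the pods listed in the Podfile
pod install
```

CocoaPods generates an `.xcworkspace` file alongside the project. Open that workspace in Xcode, rather than the `.xcodeproj`, so the installed pod is visible to the build.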

Sign up for Picovoice Console

Next, create a Picovoice Console account, and copy your AccessKey from the main dashboard. Creating an account is free, and no credit card is required!

Downloading the picoLLM compressed Llama 2 or 3 Model File

Download any of the Llama 2 or Llama 3 picoLLM model files (.pllm) from the picoLLM page on Picovoice Console.

Model files are also available for other open-weight models such as Gemma, Mistral, Mixtral and Phi-2.

The model file needs to be transferred to the device, and there are several ways to do this depending on the application's use case. For testing, the simplest options are to host the model file externally and download it from the app, or to copy it directly onto the phone using AirDrop or USB.
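Once the file is on the device, the app needs its absolute path. The sketch below assumes the `.pllm` file ended up in the app's Documents directory (for example via Finder file sharing). The helper name `modelPath(named:)` and the file name are hypothetical and used only for illustration:

```swift
import Foundation

// Hypothetical helper: returns the absolute path of a model file stored in
// the app's Documents directory, or nil if no such file exists there.
func modelPath(named fileName: String) -> String? {
    let documents = FileManager.default.urls(
        for: .documentDirectory,
        in: .userDomainMask).first
    guard let url = documents?.appendingPathComponent(fileName),
          FileManager.default.fileExists(atPath: url.path) else {
        return nil
    }
    return url.path
}

// Usage: "llama-model.pllm" is a placeholder file name.
if let path = modelPath(named: "llama-model.pllm") {
    print("Model found at \(path)")
} else {
    print("Model file not found in Documents")
}
```

Returning an optional keeps the "file not there yet" case explicit, which is useful during testing when the transfer step is easy to forget.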

Building a Simple iOS Application

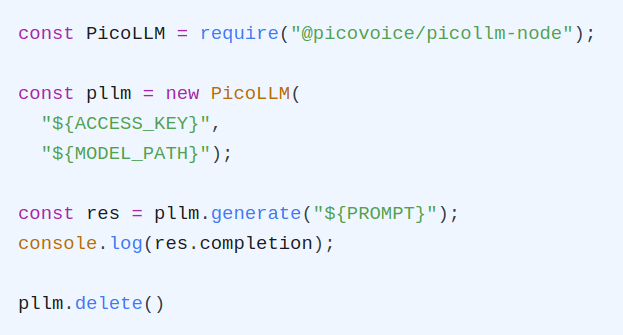

Create an instance of picoLLM with your AccessKey and model file (.pllm):
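A minimal sketch of this step, assuming the `PicoLLM` class exposed by the picoLLM-iOS pod. `${ACCESS_KEY}` and `${MODEL_PATH}` are placeholders, and the `delete()` call for releasing resources is how the SDK is understood to work, so check the API docs for your version:

```swift
import PicoLLM

// Placeholders: substitute your AccessKey from Picovoice Console and the
// absolute on-device path to the downloaded .pllm file.
let accessKey = "${ACCESS_KEY}"
let modelPath = "${MODEL_PATH}"

do {
    // Loading the model can take a while; avoid doing this on the main thread.
    let pllm = try PicoLLM(accessKey: accessKey, modelPath: modelPath)

    // ... run inference with pllm here ...

    // Release native resources when the engine is no longer needed (assumed API).
    pllm.delete()
} catch {
    print("Failed to initialize picoLLM: \(error)")
}
```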

Pass in your prompt to the generate function. You may also use streamCallback to provide a function that handles response tokens as soon as they are available:
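As a hedged sketch of this call, assuming `generate` takes a `prompt` and an optional `streamCallback` closure that receives each token as a `String`, and returns a result with a `completion` property. `${ACCESS_KEY}`, `${MODEL_PATH}` and `${PROMPT}` are placeholders:

```swift
import PicoLLM

do {
    let pllm = try PicoLLM(
        accessKey: "${ACCESS_KEY}",
        modelPath: "${MODEL_PATH}")

    let res = try pllm.generate(
        prompt: "${PROMPT}",
        streamCallback: { token in
            // Called for each piece of the response as it becomes available.
            print(token, terminator: "")
        })

    // The full response is also available once generation finishes.
    print("\nFull completion: \(res.completion)")

    pllm.delete()
} catch {
    print("picoLLM error: \(error)")
}
```

Streaming lets the UI show partial output immediately, which matters on phones where a full response can take several seconds.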

There are many configuration options in addition to streamCallback. For the full list of available options, check out the picoLLM Inference API docs.
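For illustration, a call that sets a few generation options might look like the sketch below. The parameter names `completionTokenLimit`, `temperature` and `topP` are assumptions based on the SDK's documented options, and the values are arbitrary, so confirm both against the API docs:

```swift
import PicoLLM

do {
    let pllm = try PicoLLM(
        accessKey: "${ACCESS_KEY}",
        modelPath: "${MODEL_PATH}")

    let res = try pllm.generate(
        prompt: "${PROMPT}",
        completionTokenLimit: 128, // assumed name: caps the length of the response
        temperature: 0.7,          // assumed name: higher values give more varied output
        topP: 0.9)                 // assumed name: nucleus sampling threshold

    print(res.completion)
    pllm.delete()
} catch {
    print("picoLLM error: \(error)")
}
```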

For a complete working project, take a look at the picoLLM Completion iOS Demo or the picoLLM Chat iOS Demo. You can also view the picoLLM Inference iOS API docs for complete details on the iOS SDK.