As large language models become prevalent and their uses more varied, selecting the right model becomes increasingly important.

Every tech giant is releasing its own generative AI model these days: Google has Gemini, Meta has Llama 3, Microsoft has Copilot, and then, of course, OpenAI kicked off the GenAI movement with ChatGPT.

However, most of these platforms are cloud-based. While cloud-based LLMs such as these have changed the landscape, it is important to note their drawbacks.

Data privacy, latency, and the recurring costs associated with API usage are just a few of the potential issues that can arise from API-based services.

A New Option: Local LLMs

Local LLMs, in contrast to cloud-based LLMs, run directly on user devices.

This allows for an LLM engine that inherently addresses many of the concerns with privacy, latency, and cost.

It's not that easy, however: local LLMs can still consume considerable computational resources and require expertise in both model optimization and deployment.

In addition, the model compression required to run LLMs locally can reduce accuracy compared to cloud-based LLMs.

Llama.cpp is an example of a framework that has attempted to address these issues.

However, while it has found success among hobbyist communities, it has a few substantial drawbacks that have excluded it from adoption by most professional developers and enterprises.

With the current LLM landscape in mind, picoLLM aims to reconcile the issues of both local and cloud-based LLMs using novel x-bit LLM quantization and a cross-platform local LLM inference engine. Using hyper-compressed versions of various open-source models, developers are able to deploy these models on nearly any consumer device, using a variety of programming languages and frameworks.

The picoLLM Inference Engine is a cross-platform library that supports Windows, macOS, Linux, Raspberry Pi, Android, iOS and Web Browsers. picoLLM has SDKs for Python, Node.js, Android, iOS, and JavaScript.

The following tutorial will walk you through all the steps required to run a local LLM on nearly any macOS device using Node.js.

Setup

Before we begin, you'll need the following:

- Your Picovoice Access Key: Obtain your key from the Picovoice Console.

- A picoLLM model: Download the model file (we recommend the llama 3 8b model with a 3.18 bit-rate) from the Picovoice Console. You will need the absolute path to this downloaded file.

- Node and npm installed on your machine: You can follow the official instructions.

Start off by creating a project directory and initializing a Node.js project inside it (for example, with mkdir followed by npm init -y).

Add the @picovoice/picollm-node npm package to the project's package.json by running npm install @picovoice/picollm-node.

Writing a Simple LLM Script in Node.js

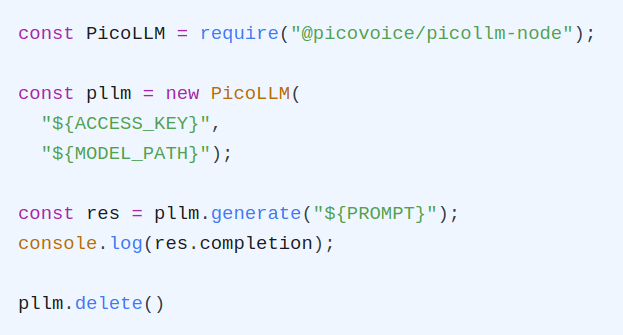

Create a file called index.js. Inside index.js, import the package and create an instance of the engine:

Replace ${ACCESS_KEY} with your Access Key from the Picovoice Console and ${MODEL_PATH} with the absolute path to the model file you downloaded from the Picovoice Console.

Be sure to call .release() to clean up picoLLM at the end.
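As a rough sketch of the step above, the helper below checks that both placeholders were replaced before it builds the engine. The PicoLLM class name, its (accessKey, modelPath) constructor arguments, and the createEngine helper with its loadSdk parameter are assumptions made for illustration rather than details stated in this article, so check them against the API documentation.

```javascript
const fs = require("fs");
const path = require("path");

// Hypothetical helper (not part of the SDK): validates the two inputs, then
// builds the engine. Assumes the package exports a `PicoLLM` class whose
// constructor takes (accessKey, modelPath).
function createEngine(
  accessKey,
  modelPath,
  // The SDK is loaded lazily so the checks below run before the native
  // library is touched; `loadSdk` is an illustrative parameter.
  loadSdk = () => require("@picovoice/picollm-node")
) {
  if (!accessKey || accessKey.startsWith("${")) {
    throw new Error("Replace ${ACCESS_KEY} with your Picovoice Access Key.");
  }
  if (!modelPath || !path.isAbsolute(modelPath) || !fs.existsSync(modelPath)) {
    throw new Error(
      `Expected an absolute path to an existing model file, got: ${modelPath}`
    );
  }
  const { PicoLLM } = loadSdk();
  return new PicoLLM(accessKey, modelPath);
}
```

Calling createEngine with your Access Key and the absolute model path should return an engine, on which .release() can be called once you are finished. Failing early on an unreplaced placeholder or a relative path tends to give a clearer message than an error raised from inside the engine.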

Now let's generate a response. Let's go with the prompt "Robots are". I've also added a completionTokenLimit of 128 to the options parameter so that the LLM doesn't generate too long a response. Don't forget to print the final completion!
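The generation step described above might look roughly like the sketch below. It assumes the engine exposes a generate(prompt, options) method whose result carries the text in a completion property; the complete helper is a hypothetical wrapper, and the method and property names should be confirmed against the API documentation.

```javascript
// Hypothetical wrapper around the generation call. Assumes
// `pllm.generate(prompt, options)` resolves to an object with a `completion`
// string. `await` also works if the call turns out to be synchronous.
async function complete(pllm, prompt) {
  const res = await pllm.generate(prompt, {
    completionTokenLimit: 128, // stop after at most 128 generated tokens
  });
  return res.completion;
}
```

With an engine instance in hand, complete(pllm, "Robots are").then(console.log) would print the whole completion once generation has finished.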

Run the script using node index.js.

After a few seconds, you should see the response printed to the terminal.

The engine generates the output tokens one at a time. However, the example currently waits until the engine finishes generating all the tokens and then prints them all at once.

This creates a poor user experience.

Fortunately, we can rectify this by using a callback. The callback gets called every time a new token is generated, so we can print each token in real time.

Let's update our example but this time pass in the streamCallback option:

Run the example again using node index.js. Now you'll see the output printed in real time as the engine generates it!

Note that I had to change from console.log() to process.stdout.write(). This is so that we don't print a newline after every token, which would awkwardly split up the response from the LLM.
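The streaming change described above can be sketched as follows. As before, the generate method and the completion property are assumed names, and streamCompletion with its write parameter is a hypothetical helper; only streamCallback and completionTokenLimit are taken from this article.

```javascript
// Hypothetical helper: streams tokens as they arrive instead of waiting for
// the full completion. `write` defaults to process.stdout.write, which does
// not append a newline after each token the way console.log would.
async function streamCompletion(
  pllm,
  prompt,
  write = (token) => process.stdout.write(token)
) {
  const res = await pllm.generate(prompt, {
    completionTokenLimit: 128,
    streamCallback: write, // invoked once for every generated token
  });
  write("\n"); // end the line once generation has finished
  return res.completion;
}
```

Passing the writer in as a parameter is a design choice for this sketch: it keeps the default terminal behavior while letting the same function feed tokens to a log file or a UI component.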

Once we're done with the engine, we need to explicitly delete it by calling .release().

Altogether, our example would look like this:
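A minimal sketch of the complete index.js, under the same assumptions as the rest of this walkthrough: the PicoLLM class name, its constructor arguments, the generate method, and the completion property are not spelled out in this article and should be checked against the API documentation. The runDemo function and the placeholder check are illustrative additions.

```javascript
const ACCESS_KEY = "${ACCESS_KEY}"; // replace with your Picovoice Access Key
const MODEL_PATH = "${MODEL_PATH}"; // replace with the absolute model path

// Streams a completion to the terminal and always releases the engine,
// even when generation throws.
async function runDemo(pllm, prompt) {
  try {
    const res = await pllm.generate(prompt, {
      completionTokenLimit: 128,
      streamCallback: (token) => process.stdout.write(token),
    });
    process.stdout.write("\n");
    return res.completion;
  } finally {
    pllm.release(); // clean up picoLLM once we are done with it
  }
}

// Only start once both placeholders above have been filled in.
if (!ACCESS_KEY.startsWith("${") && !MODEL_PATH.startsWith("${")) {
  // Assumption: the package exports a PicoLLM class taking
  // (accessKey, modelPath).
  const { PicoLLM } = require("@picovoice/picollm-node");
  runDemo(new PicoLLM(ACCESS_KEY, MODEL_PATH), "Robots are").catch((err) => {
    console.error(err);
    process.exitCode = 1;
  });
} else {
  console.error("Set ACCESS_KEY and MODEL_PATH at the top of index.js first.");
}
```

Wrapping the generation call in try/finally is one way to make sure .release() runs on every path, which matters for a long-lived process more than for a short script like this one.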

To see additional optional parameters for generation and a selection of chat dialog templates for different models, check out the API documentation.

Prefer Python?

picoLLM on macOS supports a lot more than Node.js. For example, the same create, generate, and release steps can be written in Python using the picoLLM Python SDK.

The Python API documentation details additional options for generation as well as chat templates that can be used to have back-and-forth chats with certain models.

Additional Resources

Both Node.js and Python have two demos available: completion and chat. The completion demo accepts a prompt and a set of optional parameters and generates a single completion. It can run all models, whether instruction-tuned or not. The chat demo can run instruction-tuned (chat) models. The chat demo enables a back-and-forth conversation with the LLM, similar to ChatGPT.

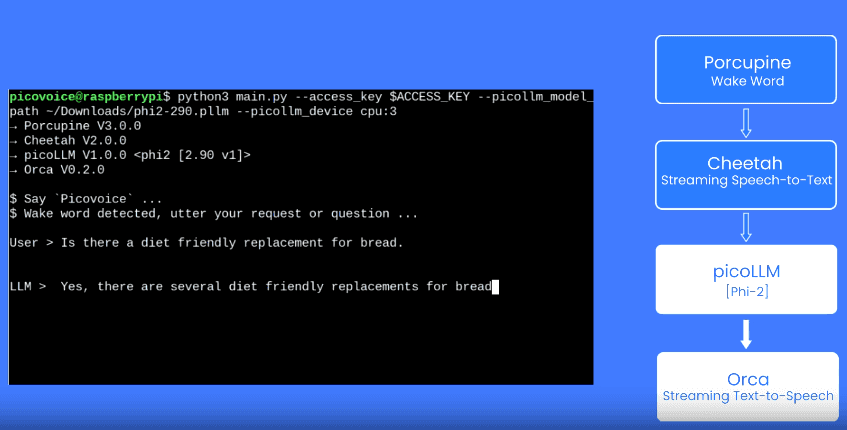

Want to use the LLM in a voice assistant? Check out how to make an on-device LLM voice assistant. You can even try it on your iOS device!