It's an exciting time for large language models (LLMs). Recent advances are astounding, with models like GPT-4o and Llama3 demonstrating high proficiency in natural language understanding and generation, making the Turing test a quaint thing of the past. However, developers using Node.js still have limited options for integrating LLMs into their applications. Cloud services like the OpenAI API offer access to powerful models but come with online service dependencies, privacy concerns, and unbounded usage costs. For projects that want to avoid external cloud providers, packages like node-llama-cpp provide tools for running models on local hardware, but they come with convoluted setup requirements, significant size vs. accuracy trade-offs, and minimal platform support.

picoLLM aims to address all the issues of its online and offline LLM predecessors with its novel x-bit LLM quantization and cross-platform local LLM inference engine. Offering hyper-compressed versions of Llama3, Gemma, Phi-2, Mixtral, and Mistral, picoLLM enables developers to deploy these popular open-weight models on nearly any consumer device. Now, let's see what it takes to run a local LLM using Node.js!

The picoLLM Inference Engine is a cross-platform library that supports Windows, macOS, Linux, Raspberry Pi, Android, iOS and Web Browsers. picoLLM has SDKs for Python, Node.js, Android, iOS, and JavaScript.

The following guide will walk you through the steps required to run a local LLM using Node.js.

Setup

- Install Node.js.

- Make a new directory and init a new Node project in it.

- Add the @picovoice/picollm-node npm package to the project.

- Go to Picovoice Console, retrieve your AccessKey, and choose a picoLLM model file (.pllm) to download.
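
The project setup steps above can be sketched as shell commands. The directory name `picollm-node-example` is a placeholder chosen for this example; the package name is the one given in the list.

```shell
# Create a project directory (the name is a placeholder) and initialize it.
mkdir picollm-node-example
cd picollm-node-example
npm init -y

# Add the picoLLM Node.js SDK to the project.
npm install @picovoice/picollm-node
```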

Running an LLM Inference with Node.js

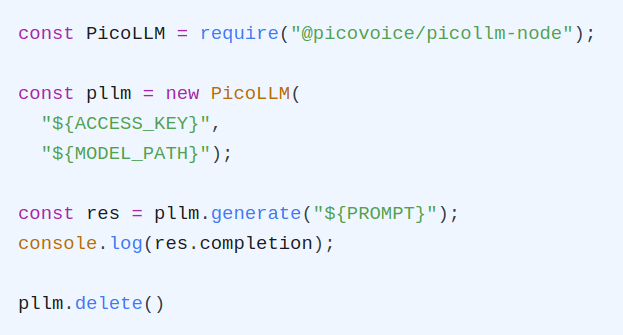

To get started, we'll need to create a new JavaScript file, index.js. Open it in the editor of your choice, import the picollm package, and initialize the inference engine using your AccessKey and the path to your downloaded .pllm model file.
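
A minimal sketch of this initialization step, assuming the `PicoLLM` class exported by `@picovoice/picollm-node` takes the AccessKey and the model path as its first two constructor arguments. The helper `createEngine` and the environment variable names `PICOVOICE_ACCESS_KEY` and `PICOLLM_MODEL_PATH` are hypothetical, chosen here so the key stays out of the source file.

```javascript
// index.js
// Hypothetical helper: reads the AccessKey and model path from the environment
// (variable names are assumptions for this example) and creates the engine.
function createEngine(env = process.env) {
  const accessKey = env.PICOVOICE_ACCESS_KEY;
  const modelPath = env.PICOLLM_MODEL_PATH;
  if (!accessKey || !modelPath) {
    throw new Error('Set PICOVOICE_ACCESS_KEY and PICOLLM_MODEL_PATH first.');
  }
  // Loaded here so that a missing configuration fails with the message above.
  const { PicoLLM } = require('@picovoice/picollm-node');
  return new PicoLLM(accessKey, modelPath);
}
```

Calling `createEngine()` with both variables set returns the engine instance used in the rest of this guide.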

With the inference engine initialized, we can now use it to generate a basic prompt completion and print it to the console:
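
A minimal sketch of a basic completion, assuming `generate` returns a promise that resolves to a result object with a `completion` string. The helper name `complete` is hypothetical, and `pllm` stands for an already initialized engine.

```javascript
// Hypothetical helper: `pllm` is an initialized picoLLM engine.
async function complete(pllm, prompt) {
  // Assumption: generate() resolves to an object with a `completion` string.
  const res = await pllm.generate(prompt);
  console.log(res.completion);
  return res.completion;
}
```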

It will take a few seconds to generate the full completion. If we want to stream the results instead, we can use the streamCallback option and write each piece of output to process.stdout, which avoids starting a new console line on every print.
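
A sketch of streaming, assuming `generate` accepts an options object as its second argument and calls `streamCallback` with each new piece of text as it is produced. The helper name `streamCompletion` and the injectable `out` parameter are hypothetical additions for this example.

```javascript
// Hypothetical helper: `pllm` is an initialized picoLLM engine and `out` is
// any object with a write() method (process.stdout by default).
async function streamCompletion(pllm, prompt, out = process.stdout) {
  const res = await pllm.generate(prompt, {
    // Write each piece as it arrives, without adding a newline per piece.
    streamCallback: (token) => out.write(token),
  });
  out.write('\n'); // finish the line once generation is complete
  return res.completion;
}
```

Writing through `out.write` rather than `console.log` is what keeps the streamed pieces on one line.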

When done, be sure to release the resources explicitly:
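
One way to make sure the release always happens is a `try`/`finally` wrapper. This is a sketch, assuming the engine exposes a `release()` method; the helper name `runWithRelease` is hypothetical.

```javascript
// Hypothetical helper: runs `task` with the engine, then releases the engine.
async function runWithRelease(pllm, task) {
  try {
    return await task(pllm);
  } finally {
    // Runs whether the task succeeded or threw.
    pllm.release();
  }
}
```

In a short script, calling `pllm.release()` directly after the last generation is enough; the wrapper only matters when an error could skip that call.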

The Node.js API documentation details additional options for generation as well as chat templates that can be used to have back-and-forth chats with certain models.

Node.js LLM Demos

The picoLLM GitHub Repository contains two demos to get you started: completion and chat. The completion demo accepts a prompt and a set of optional parameters and generates a single completion. It can run all models, whether instruction-tuned or not. The chat demo runs instruction-tuned (chat) models and enables a back-and-forth conversation with the LLM, similar to ChatGPT.

To try out either of the demos, you can simply install the picoLLM demo package:
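
A sketch of the install step, assuming the demo package is published on npm as `@picovoice/picollm-node-demo`. Check the picoLLM GitHub Repository if the name has changed.

```shell
# Assumption: the demo package name is @picovoice/picollm-node-demo.
# The -g flag installs its command-line utilities globally.
npm install -g @picovoice/picollm-node-demo
```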

This will install command-line utilities for running the demos. To run the chat demo:
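
A sketch of the chat demo invocation, assuming the utility is installed as `picollm-chat-demo` and accepts `--access_key` and `--model_path` flags. `ACCESS_KEY` and `MODEL_PATH` are placeholders for your own AccessKey and the path to your downloaded .pllm file.

```shell
# Assumption: command name and flags as described above.
# Replace the placeholders with your own values before running.
picollm-chat-demo --access_key "${ACCESS_KEY}" --model_path "${MODEL_PATH}"
```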