AI model quantization without the accuracy loss.

A novel AI model compression and quantization algorithm that makes sub-4-bit compression possible with minimal or no accuracy loss, even when GPTQ, GGUF, or SpinQuant fails. No ML team required.

X-bit quantization for any Transformer model, maintaining accuracy where GPTQ, GGUF, and SpinQuant collapse

Most AI model quantization methods rely on uniform bit allocation: every weight gets the same precision, regardless of its impact on model output. GPTQ, GGUF, AWQ, and even rotation-based methods like SpinQuant share this constraint. At 4-bit precision, this is acceptable. Below 4 bits, it collapses. Some weights matter more than others.

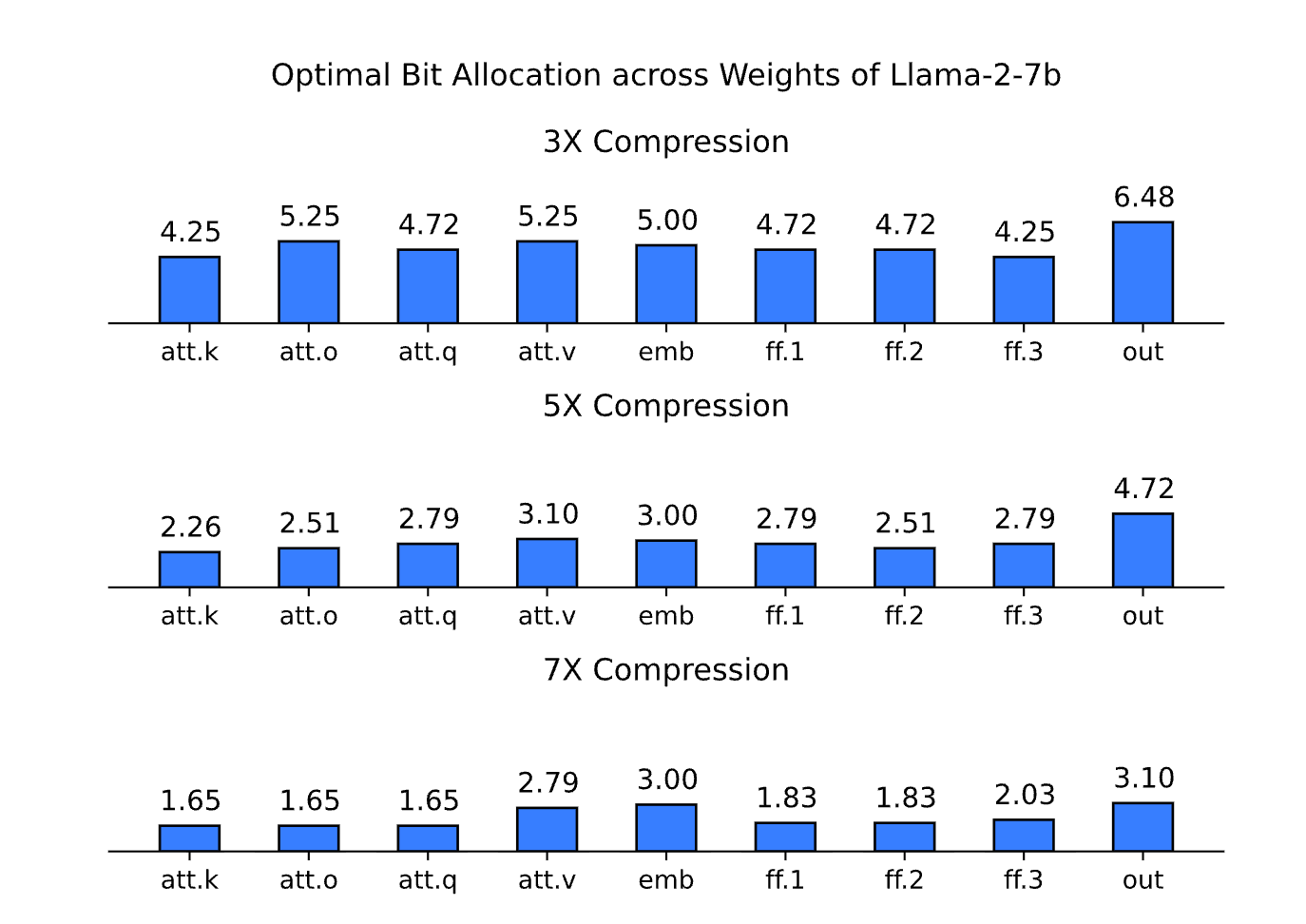

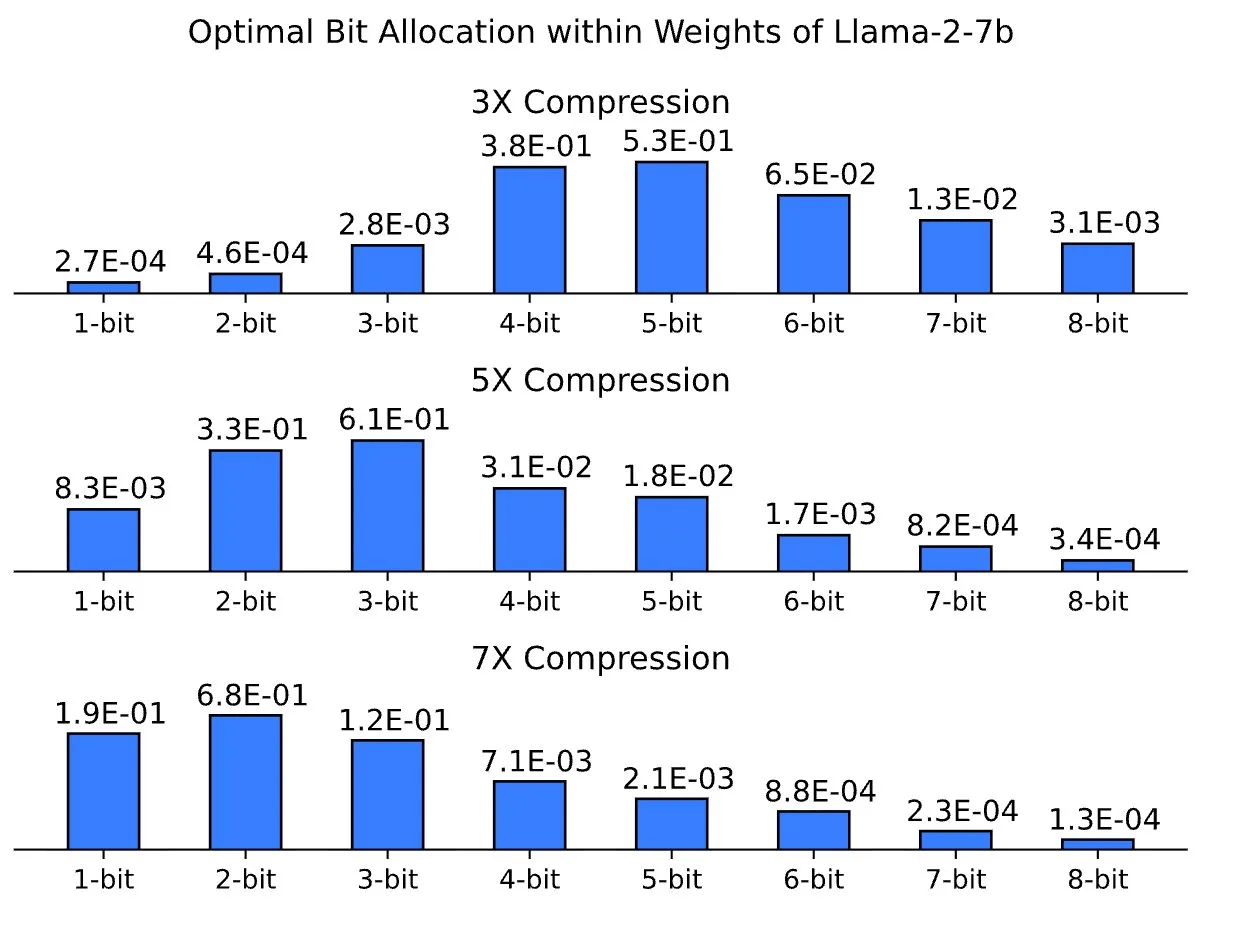

picoCompression takes a different approach. Given a target size and a task-specific cost function, the algorithm learns the optimal bit allocation both across model components (inter-functional allocation) and within each weight (intra-functional allocation). The result is a compressed model that consistently outperforms fixed-precision quantization at the same final size.

What makes picoCompression different from other AI model quantization algorithms

A modern language model has hundreds of weight matrices, and each of them has thousands of columns. Treating all of them with the same bit precision wastes capacity on unimportant components and starves the salient ones.

picoCompression uses gradient descent to learn the bit budget at both levels. Across components, it allocates more bits to functions that contribute most to task accuracy. Within each weight, it allocates more bits to columns whose quantization error has the largest impact on the output. The bit distribution is not chosen by hand. It emerges from the data.
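To make the idea of a non-uniform bit budget concrete, here is a minimal sketch. It is not the picoCompression algorithm: instead of gradient descent it uses a simple greedy rule, and it assumes a textbook error model in which each extra bit cuts a column's squared quantization error by a factor of four. The function name `allocate_bits` and the sensitivity values are hypothetical.

```python
import heapq

def allocate_bits(sensitivities, avg_bits, min_bits=1, max_bits=8):
    """Greedy per-column bit allocation under an average-bit budget.

    Assumption (illustrative only): the error of column i at b bits is
    sensitivities[i] * 4**(-b), so each extra bit cuts the error by 4x.
    """
    n = len(sensitivities)
    bits = [min_bits] * n
    # Extra bits left to hand out after every column gets min_bits.
    budget = int(round(avg_bits * n)) - min_bits * n

    def gain(i, b):
        # Error reduction from raising column i from b to b + 1 bits.
        return sensitivities[i] * (4.0 ** -b - 4.0 ** -(b + 1))

    # heapq is a min-heap, so gains are negated to pop the largest first.
    heap = [(-gain(i, min_bits), i) for i in range(n)]
    heapq.heapify(heap)
    while budget > 0 and heap:
        _, i = heapq.heappop(heap)
        bits[i] += 1
        budget -= 1
        if bits[i] < max_bits:
            heapq.heappush(heap, (-gain(i, bits[i]), i))
    return bits

# Hypothetical sensitivities for four columns, most to least important.
sens = [100.0, 10.0, 1.0, 0.1]
bits = allocate_bits(sens, avg_bits=3.0)
print(bits, sum(bits) / len(bits))
```

With the same 3-bit average, the most sensitive column ends up with the most bits and the least sensitive with the fewest, which is the behavior a uniform scheme cannot express.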

Why enterprises choose picoCompression

picoCompression is the AI model compression algorithm chosen by enterprises that can't accept the accuracy collapse of fixed-precision methods at sub-4-bit. By learning bit allocation across and within weights, it delivers the smallest models with the highest accuracy on any target platform and integrates without a dedicated ML team.

Quantize custom models. Deploy them on CPU, GPU, mobile, embedded, and web.

Open-weight language and vision models are ready to use or test. Custom model compression is available through enterprise engagements with NDA-protected model handling.

X-bit quantization automatically assigns a different number of bits to each weight in a model based on its importance to model outputs, rather than applying a uniform bit depth across all parameters. This produces a model with a fractional average bit rate, e.g., 2.56 bits, that cannot be matched by any standard fixed-depth format. picoCompression uses X-bit quantization and achieves near-float16 accuracy at sub-4-bit levels even when uniform methods like GPTQ result in catastrophic accuracy losses.

Bit depth refers to a fixed, uniform number of bits assigned to every weight in a model, for example, exactly 8 bits per parameter in LLM.int8() quantization. Bit rate refers to the average bits per weight when allocation varies across parameters. X-bit quantization methods like picoCompression assign different bit depths to different weights based on their importance, achieving a target bit rate, such as 2.56 bits, that no standard fixed depth can match. This distinction is the core reason X-bit quantized models require purpose-built inference engines.
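The relationship between bit depth and bit rate is a weighted average. The sketch below shows how a mix of integer bit depths yields a fractional bit rate; the group sizes are made up for illustration and do not describe any real model.

```python
def average_bit_rate(groups):
    """Return average bits per weight.

    groups: list of (num_weights, bit_depth) pairs.
    """
    total_bits = sum(n * b for n, b in groups)
    total_weights = sum(n for n, _ in groups)
    return total_bits / total_weights

# Hypothetical allocation over 100 weights:
# 16 at 4 bits, 36 at 3 bits, 36 at 2 bits, 12 at 1 bit.
groups = [(16, 4), (36, 3), (36, 2), (12, 1)]
rate = average_bit_rate(groups)
print(f"{rate:.2f} bits per weight")
```

Every individual weight still uses a whole number of bits; only the average is fractional.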

Sub-4-bit LLM quantization compresses large language model weights to fewer than 4 bits per parameter, reducing memory requirements enough to enable deployment on laptops, phones, and browsers. Below 4 bits, uniform allocation methods cannot maintain accuracy: 4-bit precision gives only 16 representable values, 3-bit gives 8, 2-bit gives 4. Sub-4-bit compression therefore requires non-uniform bit allocation, assigning more bits to high-importance weights and fewer to less critical ones, to avoid significant accuracy loss.
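The arithmetic behind these numbers is short enough to check directly. The sketch below computes the number of representable values per bit depth and a rough weight-only memory estimate. It assumes an 8-billion-parameter model as an example and ignores scale factors, metadata, activations, and the KV cache, so real footprints will be larger.

```python
def levels(bits):
    """Number of distinct values representable with `bits` bits."""
    return 2 ** bits

def weight_memory_gib(num_params, bits_per_weight):
    """Weight-only memory in GiB (no scales, metadata, or KV cache)."""
    return num_params * bits_per_weight / 8 / 2 ** 30

for b in (4, 3, 2):
    print(f"{b}-bit: {levels(b)} representable values")

# Example parameter count; an assumption for illustration.
num_params = 8_000_000_000
for rate in (16, 4, 2.56):
    gib = weight_memory_gib(num_params, rate)
    print(f"{rate} bits/weight: {gib:.2f} GiB")
```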

GPTQ is a quantization algorithm that minimizes quantization error layer by layer, adjusting remaining weights sequentially to compensate for errors introduced at each step. It requires calibration data to estimate input feature statistics. GGUF is a file format, not an algorithm, that packages model weights and metadata into a single binary with built-in support for quantization levels from Q2 to Q8 using block-wise quantization, applying individual scale factors to blocks of weights to handle outliers. The two are not direct alternatives: GPTQ produces quantized weights that can be stored in various formats, while GGUF defines how quantized models are packaged and distributed.
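Block-wise quantization, as described above, can be sketched in a few lines. This is a generic symmetric scheme with one scale per block, written in plain Python for readability; it is not the exact layout of any GGUF quantization type, and the function names are hypothetical.

```python
def quantize_blockwise(weights, block_size=32, bits=4):
    """Symmetric block-wise quantization with one scale per block."""
    qmax = 2 ** (bits - 1) - 1  # e.g. 7 for signed 4-bit
    blocks = []
    for start in range(0, len(weights), block_size):
        block = weights[start:start + block_size]
        amax = max(abs(w) for w in block)
        # Guard against an all-zero block.
        scale = amax / qmax if amax > 0 else 1.0
        q = [max(-qmax, min(qmax, round(w / scale))) for w in block]
        blocks.append((scale, q))
    return blocks

def dequantize_blockwise(blocks):
    """Reconstruct approximate weights from (scale, ints) blocks."""
    return [scale * q for scale, qs in blocks for q in qs]

# Two blocks of 8: small values, then a block containing an outlier.
w = [0.01 * i for i in range(-4, 4)] + [10.0] + [0.02] * 7
rec = dequantize_blockwise(quantize_blockwise(w, block_size=8, bits=4))
errors = [abs(a - b) for a, b in zip(w, rec)]
print("max error, block without outlier:", max(errors[:8]))
print("max error, block with outlier:   ", max(errors[8:]))
```

Because each block has its own scale, the outlier only degrades precision inside its own block; the first block keeps a fine step size.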

GPTQ uses sequential layer-by-layer quantization with fixed bit depth. GGUF is a file format with block-wise quantization. SpinQuant applies learned rotations before quantization to eliminate outliers and is integrated into Meta's ExecuTorch. All three use uniform bit allocation. picoCompression learns the bit allocation as part of the quantization process, both across model components and within each weight. On Llama-3-8b MMLU, picoCompression maintains 94.5%, 99.9%, and 100% of float16 baseline accuracy at 2-bit, 3-bit, and 4-bit settings, respectively, whereas GPTQ maintains 38.7%, 83.1%, and 99% at the same settings.

Standard inference engines are built around fixed bit depths, mainly 4 and 8 bits. A fractional bit rate (for example, 2.56 bits per weight on average) breaks compatibility with existing frameworks. Building an inference engine for X-bit quantized models requires implementing SIMD operations for every bit depth from 1 to 8 across multiple instruction set architectures. For x86 alone, supporting five SIMD variants across eight bit depths requires 80+ specialized functions, with additional separate implementations needed for CUDA, Metal, WebGPU, and mobile platforms. That's why picoLLM Inference Engine implements all of these.
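One reason every bit depth needs its own kernel is that values at depths such as 3 or 5 bits straddle byte boundaries. The scalar sketch below packs and unpacks unsigned integers at any depth from 1 to 8. It is a reference illustration only, not how picoLLM Inference Engine stores weights, and it makes no attempt at SIMD.

```python
def pack(values, bits):
    """Pack unsigned `bits`-wide integers into bytes, LSB first."""
    acc, nbits = 0, 0
    out = bytearray()
    mask = (1 << bits) - 1
    for v in values:
        acc |= (v & mask) << nbits
        nbits += bits
        # Flush every complete byte in the accumulator.
        while nbits >= 8:
            out.append(acc & 0xFF)
            acc >>= 8
            nbits -= 8
    if nbits:
        out.append(acc & 0xFF)  # final partial byte, zero-padded
    return bytes(out)

def unpack(data, bits, count):
    """Inverse of pack(): read `count` values of width `bits`."""
    acc, nbits = 0, 0
    mask = (1 << bits) - 1
    stream = iter(data)
    out = []
    for _ in range(count):
        # Refill until at least one full value is available.
        while nbits < bits:
            acc |= next(stream) << nbits
            nbits += 8
        out.append(acc & mask)
        acc >>= bits
        nbits -= bits
    return out

vals = [5, 0, 7, 3, 1, 6, 2, 4]  # eight 3-bit values
packed = pack(vals, 3)
print(len(packed), unpack(packed, 3, len(vals)) == vals)
```

Eight 3-bit values fit in three bytes, but no value boundary after the first aligns with a byte boundary until the end, which is what makes vectorized unpacking depth-specific.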

The right choice depends on target hardware, required compression ratio, and accuracy tolerance. For 4-bit deployment with broad community support, GGUF is the most practical choice given its 125,000+ available models on Hugging Face. For full end-to-end 4-bit quantization, including activations and KV cache, SpinQuant maintains near-float16 accuracy where GPTQ collapses. For sub-4-bit deployment, where memory constraints require 2-bit or 3-bit compression, picoCompression's X-bit allocation maintains near-float16 MMLU scores across model families where GPTQ drops to near-random performance.

picoCompression supports any transformer-based language and vision model. The algorithm itself is architecture-agnostic and applies to any neural network with quantizable weights.