Low-latency, highly accurate real-time transcription SDK

Build on-device voice AI agents, dictation apps, and conversational interfaces that run even on embedded devices. Customize with domain vocabulary for higher accuracy.

Streaming ASR, built for apps that can't wait or be wrong

Cheetah is an enterprise-ready on-device streaming speech-to-text engine built for guaranteed low latency, domain-specific accuracy, and cross-platform production deployment. It transcribes speech in real time as it is spoken, runs entirely offline across platforms, and is private by architecture.

Cloud-based real-time transcription introduces network latency that makes response time unpredictable, exposes voice data to privacy risks, and incurs unbounded costs at scale. On-device processing eliminates these inherent cloud limitations. However, most local engines struggle to match cloud accuracy without sacrificing latency or compute efficiency.

Cheetah is the only on-device real-time transcription engine that matches cloud WER (it beats Google in every language benchmarked and Azure in some) even before it's customized for the use case, and it requires less compute than any other local engine tested. No tradeoff on accuracy, latency, or privacy, and no minimum hardware requirements.

Custom real-time transcription in under 3 lines

A single API handles audio streaming, endpoint detection, and transcript emission. Partial transcripts arrive word by word as they're uttered. Use Cheetah Streaming STT with its native SDKs for Python, Node.js, Android, iOS, Java, .NET, React, Flutter, React Native, C, and Web.
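The streaming pattern described above (feed audio frame by frame, collect partial transcripts, finalize on an endpoint) can be sketched as follows. This is a minimal illustration and not the Cheetah SDK: `FakeStreamingEngine`, its `process` and `flush` methods, and the use of words in place of audio frames are hypothetical stand-ins chosen so the example runs without any dependency. Consult the SDK documentation for the actual calls.

```python
from typing import Iterable, List, Optional, Tuple


class FakeStreamingEngine:
    """Hypothetical stand-in for a streaming STT engine (NOT the Cheetah SDK).

    A "frame" here is a word, or None for silence, so the example runs
    without audio input or any external package.
    """

    def __init__(self, endpoint_silence_frames: int = 2) -> None:
        self._endpoint_silence_frames = endpoint_silence_frames
        self._silence_run = 0
        self._has_speech = False

    def process(self, frame: Optional[str]) -> Tuple[str, bool]:
        """Consume one frame and return (partial_transcript, is_endpoint)."""
        if frame is None:
            self._silence_run += 1
            is_endpoint = (
                self._has_speech
                and self._silence_run >= self._endpoint_silence_frames
            )
            if is_endpoint:
                self._has_speech = False
            return "", is_endpoint
        self._silence_run = 0
        self._has_speech = True
        return frame + " ", False

    def flush(self) -> str:
        """Return any buffered text; this toy engine buffers nothing."""
        self._has_speech = False
        return ""


def transcribe_stream(
    engine: FakeStreamingEngine, frames: Iterable[Optional[str]]
) -> List[str]:
    """Feed frames one at a time and split the output at each endpoint."""
    utterances: List[str] = []
    current = ""
    for frame in frames:
        partial, is_endpoint = engine.process(frame)
        current += partial  # partial text arrives incrementally
        if is_endpoint:
            current += engine.flush()  # finalize the utterance
            if current.strip():
                utterances.append(current.strip())
            current = ""
    # End of stream: collect whatever is left.
    current += engine.flush()
    if current.strip():
        utterances.append(current.strip())
    return utterances


frames = ["turn", "on", "the", "lights", None, None, "thank", "you"]
utterances = transcribe_stream(FakeStreamingEngine(), frames)
print(utterances)
```

In a real integration the frames would be fixed-size chunks of PCM audio from a microphone or file, and the partial text would typically be shown to the user as soon as it arrives.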

Proven accuracy vs. Amazon, Azure, and Google Streaming STTs — across languages

Cloud transcription APIs have one advantage over on-device alternatives: accuracy. Cheetah Streaming Speech-to-Text closes that gap despite its minimal size. Cheetah beats Google Streaming STT across six languages and both Azure and Google in French, Spanish, and Italian.

Why enterprises choose Cheetah Streaming Speech-to-Text

Cheetah is an enterprise-ready on-device streaming speech-to-text engine built for guaranteed low latency, domain-specific accuracy, and cross-platform production deployment. It transcribes in real time, processes audio data entirely offline, supports custom vocabulary and keyword boosting, and is private by design.

Recipes

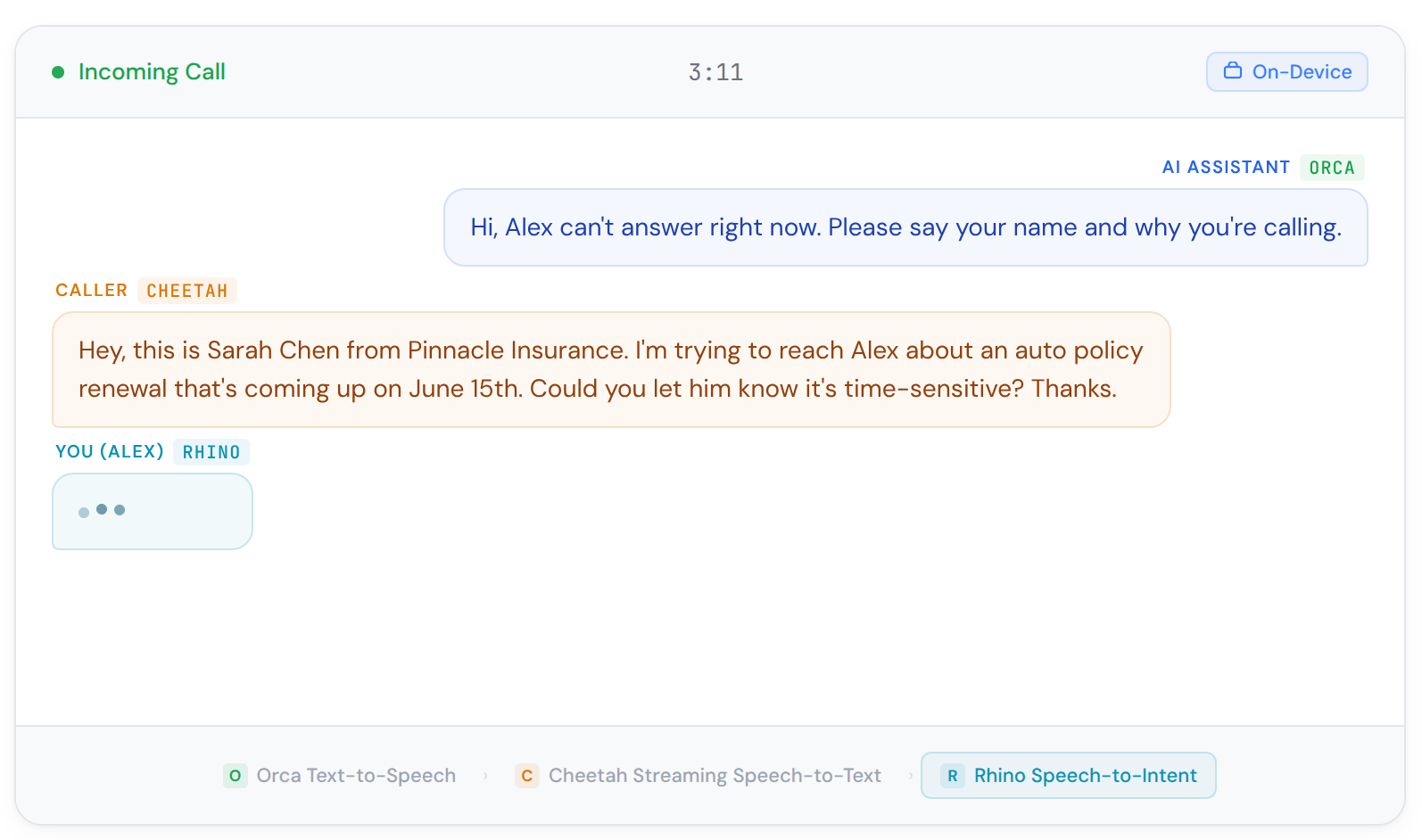

Call Screen

Screen incoming calls with voice activity detection, wake word activation, and text-to-speech responses. Block robocalls and spam without cloud processing.

Ship it.

On device.

Fast, accurate, and lightweight real-time transcription

Common questions about real-time transcription

A real-time transcription engine, also called a streaming speech-to-text engine, transcribes audio as speech is spoken, emitting partial and final transcripts word by word rather than waiting for a complete utterance or audio file. Unlike batch transcription, which processes complete recordings after the fact, streaming ASR operates on continuous audio streams, making it suitable for live captioning, voice assistants, call analytics, and any application where immediate transcript feedback matters.

Streaming Speech-to-Text is used in real-time captioning and meeting transcription, voice assistants and conversational AI agents, IVR and call centre analytics, healthcare documentation with hands-free clinical workflows, legal transcription, e-learning platforms, and any application where transcripts need to be available as speech is happening rather than after the fact.

Cloud-based real-time transcription APIs record voice data and send it to vendor servers, where the transcription engine converts voice into text. On-device real-time transcription brings the transcription engine to where the voice data is, offering a guaranteed real-time experience by eliminating unpredictable network delays.

Yes. You can run Cheetah Streaming Speech-to-Text in the cloud, whether private, public, or hybrid. Picovoice on-device voice recognition technology allows enterprises to decide where to run the transcription engine instead of making the Picovoice cloud mandatory for voice processing.

The key metrics for real-time transcription are:

- word error rate (WER), which measures word accuracy;

- punctuation error rate (PER), which measures how accurately punctuation is placed;

- word emission latency, which measures the delay between speech and transcript output;

- core-hour efficiency, which measures compute cost per hour of audio;

- model size, which determines deployability on constrained hardware.

Cheetah is the only engine that leads all five categories among on-device streaming alternatives, as proven by the open-source real-time transcription benchmark.

Word error rate is the ratio of transcription errors (substituted, deleted, and inserted words) to the total number of words spoken, and it is the standard metric for measuring speech-to-text accuracy. A WER of 10% means roughly 10 errors for every 100 words spoken. Lower WER means better accuracy. However, WER figures must be compared with care: the metric weights all errors equally regardless of their effect on meaning, and scores depend on the test dataset used. Picovoice's open-source real-time transcription benchmark measures Cheetah's WER against Amazon Transcribe, Azure, and Google across English, French, German, Spanish, Italian, and Portuguese using publicly available datasets, making results reproducible and independently verifiable.
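As a rough illustration of the definition above, WER can be computed as the word-level edit distance between a reference transcript and an engine's output, divided by the number of reference words. The sketch below is not the benchmark's implementation; the function name and the text normalization (lowercasing and whitespace splitting only) are assumptions made for the example.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance divided by the number of reference words.

    Normalization is an assumption of this sketch: lowercase, split on spaces.
    """
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # previous_row[j] holds the edit distance between the reference words
    # processed so far and the first j hypothesis words.
    previous_row = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        current_row = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = previous_row[j - 1] + (ref_word != hyp_word)
            deletion = previous_row[j] + 1      # reference word missing
            insertion = current_row[j - 1] + 1  # extra hypothesis word
            current_row.append(min(substitution, deletion, insertion))
        previous_row = current_row
    return previous_row[-1] / len(ref)


reference = "the quick brown fox jumps over the lazy dog today"
hypothesis = "the quick brown fox jumps over a lazy dog today"
wer = word_error_rate(reference, hypothesis)
print(f"WER: {wer:.1%}")  # one substitution in ten reference words
```

Because insertions count as errors, WER can exceed 100% when the hypothesis contains many spurious words.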

Punctuation error rate (PER) measures how accurately a transcription engine places punctuation — periods and question marks — relative to a reference transcript. PER matters for applications where transcripts are read by humans or processed downstream: meeting notes, call summaries, legal transcription, and closed captions all depend on accurate punctuation to be usable. Cheetah achieves 16.1% PER in English — the best result of any engine in the benchmark, ahead of Amazon (24.4%), Azure (16.4%), and Google (36.0%).

Word emission latency is the average delay from when a word finishes being spoken to when an ASR engine emits it in a transcript. It is the key responsiveness metric for streaming ASR — lower means better synchronisation between speech and text output. Cheetah achieves 590 ms word emission latency in English — the lowest of any on-device engine tested, and faster than Amazon (920 ms) and Google (830 ms).
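Following the definition above, word emission latency can be estimated by averaging, over all words, the gap between when a word ends in the audio and when the engine emits it. The sketch below assumes both timestamps are already available in milliseconds on the same clock; the function name and the sample timestamps are made up for illustration and are not benchmark data.

```python
from typing import Sequence


def mean_word_emission_latency_ms(
    word_end_times_ms: Sequence[float],
    emission_times_ms: Sequence[float],
) -> float:
    """Average delay between a word's end in the audio and its emission."""
    if len(word_end_times_ms) != len(emission_times_ms):
        raise ValueError("both sequences must have one entry per word")
    if not word_end_times_ms:
        raise ValueError("at least one word is required")
    delays = [
        emitted - ended
        for ended, emitted in zip(word_end_times_ms, emission_times_ms)
    ]
    return sum(delays) / len(delays)


# Illustrative timestamps only (not measured values).
word_end_times_ms = [400, 900, 1500]
emission_times_ms = [900, 1600, 2100]
latency = mean_word_emission_latency_ms(word_end_times_ms, emission_times_ms)
print(f"{latency:.0f} ms")
```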

In the open-source real-time transcription benchmark, Amazon Transcribe Streaming leads on WER in every language tested, whereas Cheetah Streaming STT beats Amazon Transcribe Streaming on word emission latency (590 ms vs. 920 ms) and punctuation accuracy in English (16.1% vs. 24.4% PER). Cheetah's advantage over Amazon Transcribe Streaming is architectural: it runs entirely on-device, eliminating network latency. For applications where privacy, offline capability, or predictable cost at scale is a requirement, Cheetah is the stronger choice regardless of Amazon's WER advantage.

You can reproduce the open-source benchmark to measure Amazon Transcribe Streaming WER, PER, and latency figures to compare to Cheetah Streaming STT.

Cheetah outperforms Azure Real-Time Speech-to-Text on WER in French (13.6% vs. 15.8%), Spanish (8.6% vs. 9.4%), and Italian (14.3% vs. 18.5%), whereas Azure Real-time STT beats Cheetah in English (8.2% vs. 10.1%), German (10% vs. 11.9%), and Portuguese (9.7% vs. 12.3%) in the open-source real-time transcription benchmark. Where Cheetah wins decisively over Azure Real-time STT is offline capability. For applications where privacy, offline capability, or predictable cost at scale is a requirement, Cheetah is the stronger choice over Azure Real-time STT.

You can reproduce the open-source benchmark to measure Azure Real-time STT WER, PER, and latency figures to compare to Cheetah Streaming STT.

As proven in the open-source real-time transcription benchmark, Cheetah outperforms Google Streaming Speech-to-Text on WER across all six languages in the benchmark — English (10.1% vs 11.9%), French (13.6% vs 18.5%), German (11.9% vs 16.1%), Spanish (8.6% vs 11.6%), Italian (14.3% vs 18.0%), and Portuguese (12.3% vs 12.8%). The punctuation accuracy gap is even wider — Cheetah's 16.1% PER versus Google's 36.0% in English, and 20.3% versus Google's 48.5% in Spanish. Cheetah also runs entirely on-device, replacing Google's per-minute API cost and cloud dependency with offline processing.

You can reproduce the open-source benchmark to measure Google STT Streaming WER, PER, and latency figures to compare to Cheetah Streaming STT.

OpenAI Whisper does not support real-time streaming. It processes audio in 30-second segments and cannot emit partial transcripts as speech occurs, making it unsuitable for live captioning, voice assistants, or any application requiring immediate feedback. Cheetah is purpose-built for streaming, delivers lower word emission latency than any Whisper variant tested, and at 34MB is significantly smaller than any Whisper model worth deploying for accuracy-sensitive applications.

OpenAI Whisper does not support real-time streaming, but streaming ASR engines derived from Whisper exist, such as Whisper.cpp Streaming. As proven in the open-source real-time transcription benchmark, Whisper.cpp Streaming sits in the middle of the benchmark across every dimension: not accurate enough to lead, not fast enough to compete on latency, not small enough to justify the accuracy tradeoff. In English, Whisper.cpp Streaming Base achieves 19.8% WER versus Cheetah's 10.1%, with a 1,240 ms word emission latency versus Cheetah's 590 ms, and a 139MB model versus Cheetah's 34MB. Whisper.cpp Streaming Tiny reduces the model size to 73MB, with a word emission latency of 1,240 ms, but WER worsens to 22.4%. Neither variant offers a meaningful advantage over Cheetah on any single metric.

You can reproduce the open-source benchmark to measure Whisper.cpp Streaming WER, PER, and latency figures to compare to Cheetah Streaming STT.

As proven in the open-source real-time transcription benchmark, Moonshine Streaming achieves competitive word accuracy (Moonshine Medium reaches 10.6% WER in English, close to Cheetah's 10.1%), but at an enormous compute and storage cost. Moonshine Medium's 3.36x core-hour ratio versus Cheetah's 0.08x represents a roughly 42× efficiency gap. Furthermore, Moonshine Medium ships a 290MB model versus Cheetah's 34MB. Moonshine Tiny reduces resource requirements, but WER degrades to 23.9%. For applications where accuracy matters and compute budget or OTA delivery constraints exist, Moonshine is not a viable production option. Cheetah delivers better accuracy at a fraction of the resource cost.
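The efficiency and size gaps quoted above follow from simple division of the figures in the text. The short sketch below reproduces that arithmetic; the variable names are illustrative, and reading the core-hour ratio as compute spent per hour of audio processed is an assumption based on the metric's description.

```python
# Figures taken from the text above; names are illustrative.
moonshine_medium_core_hour_ratio = 3.36  # assumed: compute per hour of audio
cheetah_core_hour_ratio = 0.08

moonshine_medium_model_mb = 290
cheetah_model_mb = 34

# How many times more compute Moonshine Medium needs for the same audio.
efficiency_gap = moonshine_medium_core_hour_ratio / cheetah_core_hour_ratio

# How many times larger the Moonshine Medium model is.
size_gap = moonshine_medium_model_mb / cheetah_model_mb

print(f"Compute gap: about {efficiency_gap:.0f}x")
print(f"Model size gap: about {size_gap:.1f}x")
```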

You can reproduce the open-source benchmark to measure Moonshine Streaming WER, PER, and latency figures to compare to Cheetah Streaming STT.

As proven in the open-source real-time transcription benchmark, Vosk Streaming Large achieves 11.5% WER in English, comparable to Cheetah's 10.1%, but with a 2,000 ms word emission latency, more than 3× Cheetah's 590 ms, and a 2,733MB model that rules it out for any mobile, embedded, or OTA deployment. Vosk Streaming Small reduces the model to 66MB and compute to a reasonable 0.11x core-hour ratio, but WER degrades to 18.4%, and its 920 ms word emission latency is still well above Cheetah's 590 ms. Vosk offers no configuration where it simultaneously matches Cheetah Streaming Speech-to-Text on accuracy, latency, model size, and compute efficiency. For production applications where any of these dimensions matter, Cheetah is the stronger choice.

You can reproduce the open-source benchmark to measure Vosk Streaming WER, PER, and latency figures to compare to Cheetah Streaming STT.

Yes. Cheetah Streaming Speech-to-Text is trained on diverse audio conditions including background noise, multiple speakers, and various accents. For specialized environments or specific accent patterns, contact sales for custom training options.

Cheetah Streaming Speech-to-Text runs entirely within your infrastructure, eliminating external dependencies that could cause outages. You can deploy across multiple instances, regions, or availability zones using standard load balancing and failover strategies.

Yes, you can train custom speech-to-text models on Picovoice Console to optimize Cheetah Streaming Speech-to-Text for specific industries, terminologies, or use cases. This includes medical terminology, legal language, technical jargon, or company-specific vocabulary.

Cheetah Streaming Speech-to-Text can be configured to handle multiple languages through separate instances or language-specific models. Contact sales to determine whether a multilingual model or spoken language identification is a better fit for your use case.

Cheetah Streaming Speech-to-Text currently supports English, French, German, Italian, Portuguese, and Spanish.

Contact sales to tell us about your commercial endeavor and ask for speech-to-text language support.

Picovoice docs, blog, Medium posts, and GitHub are great resources to learn about voice AI, Picovoice technology, and how to start building transcription products. Enterprise customers get dedicated support specific to their applications from Picovoice Product & Engineering teams. Reach out to your Picovoice contact or talk to sales to discuss support options.