TLDR: Build a smart IVR system for call center automation in Python. This tutorial shows how to implement low-latency conversational IVR with intent recognition, intelligent call routing, and LLM reasoning for AI-powered customer service automation.

Why Smart IVR Systems Matter for Contact Center Automation

A smart IVR (Interactive Voice Response) uses voice AI to understand speech, route calls intelligently, and resolve customer requests without rigid menu trees or keypad inputs. Unlike traditional IVR systems that rely on fixed flows, smart IVRs combine speech recognition, intent detection, and AI-driven reasoning to handle requests dynamically and reduce friction in customer interactions.

In live customer service calls, even small delays add up quickly and degrade the overall experience. With a traditional IVR, callers navigate numbered options, repeat information multiple times, and wait through network round-trips that add 1–2 seconds of latency per interaction. Cloud-based voice APIs add to this delay. For example, automatic speech recognition takes 920 ms with Amazon Transcribe Streaming, and speech synthesis takes 1540 ms with Amazon Polly, roughly 2.5 seconds combined for speech processing alone. Text-based intent classification also requires a transcription step that direct speech-to-intent pipelines skip, which further increases end-to-end latency in conversational IVR.

Running speech recognition, intent detection, and language model reasoning locally within the IVR application server eliminates cloud speech API round-trips and delivers faster, more predictable response times.

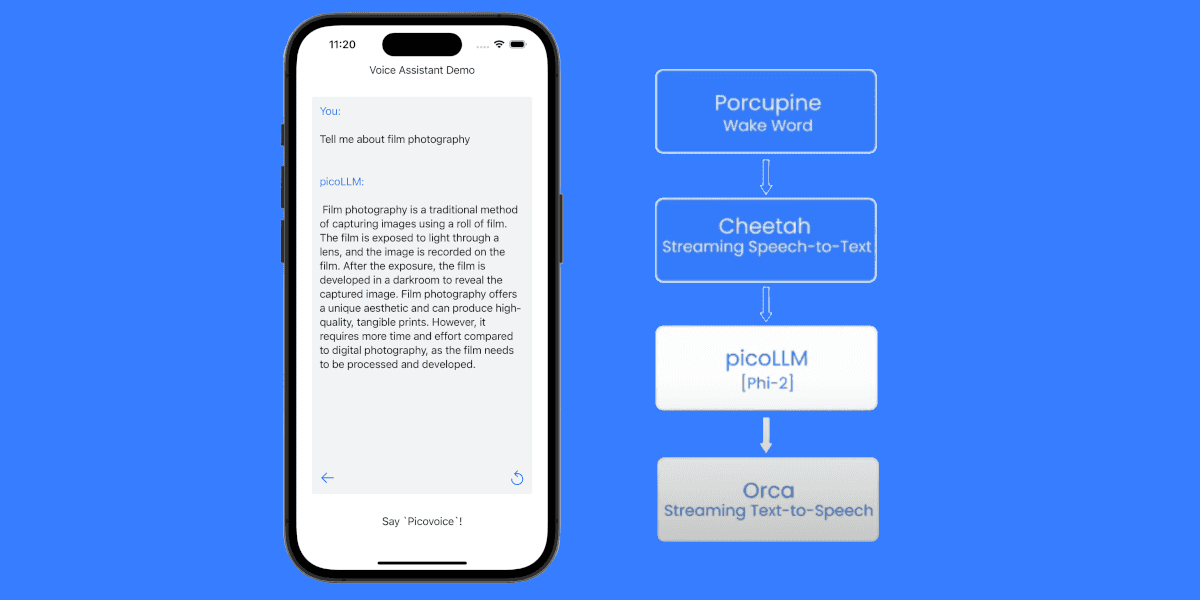

This tutorial shows how to build a Python IVR system for an AI call center that routes customer service queries between intent recognition and LLM reasoning. It uses voice AI models that can run locally on the IVR application server without cloud API dependencies. The implementation uses Cobra Voice Activity Detection to detect caller speech and Rhino Speech-to-Intent for intent recognition. For complex queries, it uses Cheetah Streaming Speech-to-Text and picoLLM. Responses are spoken with Orca Streaming Text-to-Speech.

Picovoice AI models can run on-prem, in the cloud, and on-device across platforms including Linux, macOS, Windows, Android, iOS, and web browsers.

What You'll Build:

A conversational IVR system that:

- Detects caller speech activity to avoid processing silence

- Handles common queries instantly using speech-to-intent recognition

- Routes unrecognized queries to an LLM for reasoning

- Responds with natural speech synthesis

What You'll Need:

- Python 3.9+

- A desktop or laptop with microphone and speakers for testing

- Picovoice AccessKey from the Picovoice Console

This tutorial focuses on the speech processing and call routing logic. In production, the same pipeline typically runs on an IVR application server (cloud, on-premises, or private infrastructure) that receives audio streams from a telephony system and returns prompts or routing decisions.

Smart IVR Architecture: Intelligent Call Routing with Speech Recognition

The smart IVR system uses the following approach to handle customer queries efficiently:

Voice Activity Detection: Cobra Voice Activity Detection monitors the audio stream and detects when the caller begins speaking. This prevents the system from routing silence or background noise through the speech recognition pipeline.

Intent Recognition: When a customer speaks, Rhino Speech-to-Intent processes the audio directly. If the customer service voicebot recognizes a known intent with required parameters (e.g., "check order status for order 12345"), it responds immediately. This handles the majority of routine customer service queries with minimal latency.

LLM Reasoning: If Rhino returns is_understood=False for ambiguous or complex queries (e.g., "why was I charged twice when I cancelled my order?"), the system prompts the customer to provide more details, then uses Cheetah Streaming Speech-to-Text to transcribe the explanation and routes it to picoLLM for intelligent reasoning.

This AI IVR architecture optimizes for common cases while handling edge cases flexibly.

Create Custom Voice Commands for Customer Service Automation

Rhino requires a context file that defines the specific intents the smart IVR will handle. A context specifies the phrases customers might say and what structured data to extract.

- Sign up for a Picovoice Console account and navigate to the Rhino page.

- Click "Create New Context" and name it CustomerService.

- Click the "Import YAML" button in the top-right corner and paste the following context definition:

- Test the context in the browser using the microphone button.

- Download the .rhn context file for your target platform.

For production-ready customer service voicebots, expand the context to cover 10-15 common intents. Rhino's expression syntax supports optional phrases, synonyms, and slot types like numbers and dates. See the Rhino Expression Syntax Cheat Sheet for details.

Set Up a Local LLM

picoLLM runs compressed language models locally in your environment (for example on an IVR application server), so audio and transcripts can be processed without sending data to the cloud. Download a model from the picoLLM Console:

- Sign in to Picovoice Console and navigate to picoLLM.

- Select a model. This tutorial uses llama-3.2-3b-instruct-505.pllm.

- Click "Download" and place it in your project directory.

Set Up the Python Environment

Install the required SDKs:

- Cobra Voice Activity Detection Python SDK: pvcobra

- Rhino Speech-to-Intent Python SDK: pvrhino

- Cheetah Streaming Speech-to-Text Python SDK: pvcheetah

- picoLLM Python SDK: picollm

- Orca Text-to-Speech Python SDK: pvorca

- Picovoice Python Recorder SDK: pvrecorder

- Picovoice Python Speaker SDK: pvspeaker
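Before wiring the pipeline together, it can help to confirm that every SDK is importable. The check below is a small sketch using only the standard library. The helper names `missing_sdks` and `pip_command` are made up for this example, and it assumes each package installs a module with the same name as the package.

```python
import importlib.util

# Package names from the list above; each is assumed to match its import name.
REQUIRED_SDKS = [
    "pvcobra", "pvrhino", "pvcheetah", "picollm",
    "pvorca", "pvrecorder", "pvspeaker",
]


def missing_sdks(required=REQUIRED_SDKS):
    """Return the modules that cannot be found in the current environment."""
    return [name for name in required if importlib.util.find_spec(name) is None]


def pip_command(packages):
    """Build the pip command that would install the given packages."""
    return "pip install " + " ".join(packages) if packages else ""


if __name__ == "__main__":
    print(pip_command(missing_sdks()) or "All SDKs are installed.")
```

Running the script prints either a `pip install ...` command for whatever is missing or a confirmation that nothing is.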

Add Voice Activity Detection for Caller Speech Gating

Initialize Cobra Voice Activity Detection and wait until the caller starts speaking:
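A minimal sketch of the gating step, assuming the engine exposes `process(pcm)` returning a voice probability between 0 and 1, as the Cobra Python SDK does. The function name, the 0.7 threshold, and the three-frame debounce are illustrative choices rather than values from this tutorial. The engine and the frame source are passed in so the logic can be exercised without a microphone.

```python
def wait_for_speech(cobra, read_frame, threshold=0.7, min_voiced_frames=3, max_frames=None):
    """Block until `min_voiced_frames` consecutive frames reach `threshold`.

    Returns True once speech is detected, or False if `max_frames` frames
    were read without detecting speech.
    """
    voiced = 0
    seen = 0
    while max_frames is None or seen < max_frames:
        probability = cobra.process(read_frame())
        seen += 1
        # Reset the streak on any quiet frame so short noise bursts are ignored.
        voiced = voiced + 1 if probability >= threshold else 0
        if voiced >= min_voiced_frames:
            return True
    return False


# Wiring with the real SDKs (needs an AccessKey and a microphone):
#   import pvcobra
#   from pvrecorder import PvRecorder
#   cobra = pvcobra.create(access_key=ACCESS_KEY)
#   recorder = PvRecorder(frame_length=cobra.frame_length)
#   recorder.start()
#   wait_for_speech(cobra, recorder.read)
```

Requiring a few consecutive voiced frames trades a small amount of latency for fewer false triggers on clicks or line noise.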

Implement Real-Time Intent Recognition

The conversational IVR captures audio and processes it through Rhino Speech-to-Intent to detect known intents:
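One way to sketch this loop, assuming the Rhino Python SDK interface in which `process(pcm)` returns True once the inference is finalized and `get_inference()` returns an object with `is_understood`, `intent`, and `slots`. The function name and the optional frame limit are illustrative additions.

```python
def recognize_intent(rhino, read_frame, max_frames=None):
    """Feed audio frames to Rhino until it finalizes an inference.

    Returns the inference object, or None if `max_frames` frames were
    processed without a result.
    """
    seen = 0
    while max_frames is None or seen < max_frames:
        seen += 1
        if rhino.process(read_frame()):
            return rhino.get_inference()
    # Timed out mid-utterance: clear Rhino's state before the next attempt.
    rhino.reset()
    return None


# Wiring with the real SDKs (paths and key are placeholders):
#   import pvrhino
#   from pvrecorder import PvRecorder
#   rhino = pvrhino.create(access_key=ACCESS_KEY, context_path=CONTEXT_PATH)
#   recorder = PvRecorder(frame_length=rhino.frame_length)
#   recorder.start()
#   inference = recognize_intent(rhino, recorder.read)
```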

Build Intelligent Call Routing Logic for AI IVR Systems

The intelligent call routing logic determines whether to handle the query with intent recognition or route to picoLLM for reasoning:
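The routing decision can be sketched as a pure function over the Rhino inference. The intent name `checkOrderStatus`, the slot name `orderNumber`, and the `Route` and `REQUIRED_SLOTS` helpers are hypothetical; replace them with the names from your own context. The only SDK behavior assumed is that the inference exposes `is_understood`, `intent`, and `slots`.

```python
from collections import namedtuple

Route = namedtuple("Route", ["handler", "intent", "slots"])

# Slots each intent needs before it can be answered directly (hypothetical names).
REQUIRED_SLOTS = {
    "checkOrderStatus": ["orderNumber"],
    "speakToHuman": [],
}


def route_inference(inference, required_slots=REQUIRED_SLOTS):
    """Choose the intent path or the LLM path for a single inference."""
    if inference is None or not inference.is_understood:
        return Route("llm", None, {})
    slots = dict(inference.slots or {})
    needed = required_slots.get(inference.intent)
    # Unknown intents and intents with missing parameters fall back to the LLM.
    if needed is None or any(name not in slots for name in needed):
        return Route("llm", inference.intent, slots)
    return Route("intent", inference.intent, slots)
```

Keeping the decision free of audio and I/O makes it easy to unit test and to adjust as the context grows.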

Handle Complex Queries with Speech-to-Text

When Rhino Speech-to-Intent doesn't recognize an intent, prompt the customer for more details and use Cheetah Streaming Speech-to-Text to transcribe their explanation:
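A sketch of the transcription step, assuming the Cheetah Python SDK interface in which `process(pcm)` returns a `(partial_transcript, is_endpoint)` pair and `flush()` returns any remaining text. The function name and the frame limit are illustrative.

```python
def transcribe_until_endpoint(cheetah, read_frame, max_frames=None):
    """Transcribe streaming audio until Cheetah reports the end of the utterance."""
    parts = []
    seen = 0
    while max_frames is None or seen < max_frames:
        seen += 1
        partial, is_endpoint = cheetah.process(read_frame())
        parts.append(partial)
        if is_endpoint:
            break
    # flush() returns whatever text is still buffered inside the engine.
    parts.append(cheetah.flush())
    return "".join(parts).strip()


# Wiring with the real SDKs (key is a placeholder):
#   import pvcheetah
#   from pvrecorder import PvRecorder
#   cheetah = pvcheetah.create(access_key=ACCESS_KEY, endpoint_duration_sec=1.0)
#   recorder = PvRecorder(frame_length=cheetah.frame_length)
#   recorder.start()
#   transcript = transcribe_until_endpoint(cheetah, recorder.read)
```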

Add LLM Reasoning for Complex Queries

When Rhino Speech-to-Intent cannot extract a structured intent, picoLLM provides intelligent reasoning while keeping inference local to the IVR application server:
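A minimal sketch of the LLM step, assuming the picoLLM Python SDK's `generate(prompt, completion_token_limit=...)` call, which returns an object with a `completion` string. The system prompt, the plain-text prompt layout, and the 128-token limit are illustrative assumptions. Instruct-tuned models generally respond better when the prompt follows their chat template, so treat `build_prompt` as a placeholder for whatever formatting your model expects.

```python
# Hypothetical system prompt; tune it for your own call center.
SYSTEM_PROMPT = (
    "You are a concise customer service assistant. "
    "Answer in one or two short sentences suitable for reading aloud."
)


def build_prompt(transcript, system=SYSTEM_PROMPT):
    """Combine the system instructions and the caller's words into one prompt."""
    return f"{system}\n\nCustomer: {transcript.strip()}\nAgent:"


def answer_with_llm(pllm, transcript, token_limit=128):
    """Generate a short spoken-style reply for a transcribed caller query."""
    result = pllm.generate(build_prompt(transcript), completion_token_limit=token_limit)
    return result.completion.strip()


# Wiring with the real SDK (key and path are placeholders):
#   import picollm
#   pllm = picollm.create(access_key=ACCESS_KEY, model_path=LLM_MODEL_PATH)
#   reply = answer_with_llm(pllm, "why was I charged twice when I cancelled my order?")
```

Capping the completion length keeps replies short enough to be spoken without long pauses on the line.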

Add Text-to-Speech for Conversational IVR

The conversational IVR converts text responses into natural speech using Orca:
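The playback step might look like the sketch below. It assumes an Orca SDK version whose `synthesize(text)` returns a `(pcm, alignments)` pair and a PvSpeaker-style `write(pcm)` that returns the number of samples it accepted. The function name `speak` is illustrative, and this single-shot version does not use Orca's streaming synthesis mode.

```python
def speak(orca, speaker, text):
    """Synthesize `text` and play it, returning the number of samples played."""
    pcm, _alignments = orca.synthesize(text)
    written = 0
    # write() may accept only part of the buffer, so keep sending the remainder.
    while written < len(pcm):
        written += speaker.write(pcm[written:])
    # Wait for the queued audio to finish playing.
    speaker.flush()
    return len(pcm)


# Wiring with the real SDKs (key is a placeholder):
#   import pvorca
#   from pvspeaker import PvSpeaker
#   orca = pvorca.create(access_key=ACCESS_KEY)
#   speaker = PvSpeaker(sample_rate=orca.sample_rate, bits_per_sample=16)
#   speaker.start()
#   speak(orca, speaker, "Your order has shipped.")
```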

Complete Python Code for Call Center Automation

This complete implementation combines all components into a smart IVR for call center automation:
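As a condensed illustration of how the stages fit together, the sketch below handles one conversational turn. It is not the full program: each stage is passed in as a plain callable so the control flow is visible and testable, and the parameter names, the follow-up prompt wording, and the returned path label are assumptions made for this example.

```python
def handle_turn(listen_for_intent, transcribe, handle_structured_intent, ask_llm, say):
    """Run one caller turn and return which path answered it ("intent" or "llm").

    listen_for_intent()             -> inference with is_understood, intent, slots
    transcribe()                    -> transcript of the caller's explanation
    handle_structured_intent(i, s)  -> reply text for a recognized intent
    ask_llm(transcript)             -> reply text generated by the language model
    say(text)                       -> speaks the text to the caller
    """
    inference = listen_for_intent()
    if inference is not None and inference.is_understood:
        reply = handle_structured_intent(inference.intent, inference.slots)
        path = "intent"
    else:
        # Unrecognized request: ask for details, transcribe them, and reason over them.
        say("I'm sorry, could you describe the issue in more detail?")
        reply = ask_llm(transcribe())
        path = "llm"
    say(reply)
    return path
```

In a running system this function would be called in a loop after the greeting, once voice activity detection reports that the caller has started speaking.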

Run the Smart IVR System

To run the Smart IVR system in Python, update the model paths to match your local files and have your Picovoice AccessKey ready:
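One possible command-line interface for the script is sketched below. The flag names (`--access_key`, `--context_path`, `--llm_model_path`, `--audio_device_index`) and the script name in the comment are assumptions for illustration, not the tutorial's actual options.

```python
import argparse


def build_parser():
    """Create the argument parser for the smart IVR demo (hypothetical flags)."""
    parser = argparse.ArgumentParser(description="Smart IVR demo")
    parser.add_argument("--access_key", required=True,
                        help="AccessKey from the Picovoice Console")
    parser.add_argument("--context_path", required=True,
                        help="Path to the Rhino context file")
    parser.add_argument("--llm_model_path", required=True,
                        help="Path to the picoLLM model file (.pllm)")
    parser.add_argument("--audio_device_index", type=int, default=-1,
                        help="Microphone index; -1 selects the default device")
    return parser


# Example invocation (script name and paths are placeholders):
#   python smart_ivr.py --access_key YOUR_ACCESS_KEY \
#       --context_path CustomerService.rhn \
#       --llm_model_path llama-3.2-3b-instruct-505.pllm
```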

The customer service voicebot will greet the caller, process customer queries with intelligent call routing, and respond with natural speech.

Extending the AI Customer Service Voicebot

Connect to Phone Systems:

- Integrate with VoIP platforms like Twilio or Asterisk to handle inbound calls.

Add Multilingual Support:

- Create Speech-to-Intent contexts for multiple languages. Rhino supports multiple languages for intent recognition.

- Orca Streaming Text-to-Speech also supports multiple languages for voice responses.

Database Integration:

- Replace the mock responses in handle_structured_intent() with actual database queries to retrieve real customer data, order statuses, and account information.
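As a rough sketch of that replacement, the version of `handle_structured_intent()` below looks up an order in SQLite. The signature with a `db` argument, the `orders` table, its columns, the `checkOrderStatus` intent, and the `orderNumber` slot are all hypothetical; adapt them to your own schema and context.

```python
import sqlite3


def handle_structured_intent(intent, slots, db):
    """Answer a recognized intent using data from a database connection."""
    if intent == "checkOrderStatus":
        order_number = slots.get("orderNumber")
        # Parameterized query: never interpolate caller input into SQL.
        row = db.execute(
            "SELECT status FROM orders WHERE order_number = ?", (order_number,)
        ).fetchone()
        if row is None:
            return "I could not find that order. Could you repeat the order number?"
        return f"Order {order_number} is {row[0]}."
    if intent == "speakToHuman":
        return "Connecting you to an agent."
    return "Sorry, I can't help with that yet."


# Example with an in-memory database:
#   db = sqlite3.connect(":memory:")
#   db.execute("CREATE TABLE orders (order_number TEXT PRIMARY KEY, status TEXT)")
#   db.execute("INSERT INTO orders VALUES ('12345', 'shipped')")
#   handle_structured_intent("checkOrderStatus", {"orderNumber": "12345"}, db)
```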

Conversation Analytics:

- Log all transcripts, detected intents, and LLM responses to track common queries, measure resolution rates, and identify areas where the context needs expansion or LLM responses need refinement.
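A simple way to start is to write one JSON line per turn and compute summary numbers from those records later. The sketch below is illustrative: the field names, the `log_turn` and `intent_path_share` helpers, and the choice of JSON Lines are assumptions rather than part of the tutorial's code.

```python
import json
import time


def log_turn(stream, call_id, path, transcript=None, intent=None,
             slots=None, response=None, now=time.time):
    """Append one turn as a JSON line to `stream` and return the record."""
    record = {
        "timestamp": now(),
        "call_id": call_id,
        "path": path,  # "intent" or "llm"
        "transcript": transcript,
        "intent": intent,
        "slots": slots or {},
        "response": response,
    }
    stream.write(json.dumps(record) + "\n")
    return record


def intent_path_share(records):
    """Fraction of turns answered by the intent path (0.0 when there are none)."""
    if not records:
        return 0.0
    return sum(1 for r in records if r["path"] == "intent") / len(records)
```

A falling intent-path share is one signal that callers are asking for things the context does not yet cover.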

Human Handoff:

- Implement a queue system for the speakToHuman intent that connects to your existing call center software or creates tickets for callback scheduling.

You can start building your own commercial or non-commercial call center automation projects using Picovoice's self-service Console.

To learn more about the advantages and challenges of voice AI agents in customer service, see: Voice AI Agents in Customer Service.