Speech Intelligence is the capability to automatically capture, interpret, and derive structured meaning from spoken language in real time, transforming raw audio into structured data, actionable insights, and automated decisions. It is built on a layered pipeline that runs from audio capture and voice activity detection (VAD) through automatic speech recognition (ASR) and natural language understanding (NLU). Unlike simple transcription, Speech Intelligence captures intent, sentiment, and conversational context, so machines can understand what was said, who said it, how it was said, and what should happen next.

What Is Speech Intelligence?

Speech Intelligence is an AI discipline that transforms spoken audio into business insights and automated actions. It combines several AI layers:

- Automatic speech recognition (ASR): Converts speech to text

- Natural language processing (NLP): Understands meaning and intent

- Speech emotion detection: Detects tone, emotion, and speaker traits

- Automation & Integrations: Triggers workflows and applications

Speech Intelligence doesn't just hear speech; it understands conversations.

Key Components of the Speech Intelligence Pipeline

- Audio capture and preprocessing: Most evaluations of Speech Intelligence focus on the model layer, such as real-time transcription accuracy, NLU benchmarks, and LLM quality. But the pipeline starts earlier, and every upstream decision affects downstream performance. Before any model touches audio, the recording environment determines the quality ceiling. Microphone selection, voice processor, acoustic treatment, and noise suppression shape everything downstream.

- Voice activity detection (VAD): VAD identifies when speech is actually occurring, so the system only processes audio that contains a human voice. This reduces latency, cuts compute cost, and eliminates false triggers. In real-time Speech Intelligence applications, efficient VAD is foundational to responsive performance.

- Automatic speech recognition: Streaming transcription processes audio as it's spoken, enabling real-time applications like live agent guidance and instant compliance alerts. Batch transcription processes completed recordings, suited for post-call QA and archival search. The architecture choice here determines which use cases are actually possible.

- Understanding and synthesis: Once speech is transcribed, language models extract structured meaning (customer intent, topic classification, named entities, and conversational outcomes) and synthesize higher-order outputs such as summaries, coaching prompts, and compliance scores. On-device LLMs extend this capability to environments where cloud transmission is prohibited.
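To make the VAD stage concrete, here is a minimal sketch of an energy-threshold gate in Python. It is only an illustration of the idea of passing speech frames downstream and dropping silence. Production VAD engines typically rely on trained models rather than a fixed energy threshold, and the function names, the `threshold` value, and the `hangover` parameter are all assumptions made for this example.

```python
import math
from typing import Iterable, List, Sequence


def frame_rms(frame: Sequence[float]) -> float:
    """Root-mean-square energy of one frame of samples in [-1.0, 1.0]."""
    if not frame:
        return 0.0
    return math.sqrt(sum(s * s for s in frame) / len(frame))


def energy_vad(
    frames: Iterable[Sequence[float]],
    threshold: float = 0.02,  # assumed value; would be tuned per microphone and room
    hangover: int = 2,        # assumed value; frames to keep the gate open after speech
) -> List[bool]:
    """Label each frame as speech (True) or non-speech (False).

    A frame counts as speech when its RMS energy meets the threshold.
    The hangover keeps the gate open for a few frames after the energy
    drops, so short pauses are not clipped.
    """
    decisions: List[bool] = []
    remaining = 0
    for frame in frames:
        if frame_rms(frame) >= threshold:
            remaining = hangover
            decisions.append(True)
        elif remaining > 0:
            remaining -= 1
            decisions.append(True)
        else:
            decisions.append(False)
    return decisions


# Toy input: one loud frame surrounded by silence.
silence = [0.0] * 160
loud = [0.5, -0.5] * 80
flags = energy_vad([silence, loud, silence, silence, silence, silence])
```

Only frames flagged `True` would be forwarded to the ASR stage, which is where the latency and compute savings described above come from.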

Speech Intelligence vs Speech Analytics

Speech Intelligence and Speech Analytics are often used interchangeably, but they represent different capabilities.

Speech Analytics

- Timing: Primarily post-call analysis

- Scope: Contact center focused

- Coverage: Sampling of conversations

- Output: Reporting and dashboards

- Intelligence layer: Historical insights

Speech analytics traditional workflow:

Speech Intelligence

- Timing: Real-time, low-latency analysis

- Scope: Cross-industry and embedded

- Coverage: 100% audio coverage

- Output: Automation and decision systems

- Intelligence layer: Live guidance and predictive AI

The defining trend in the category is the transition from post-processing to live intelligence.

Speech intelligence real-time workflow:

Speech analytics tells you what happened. Speech Intelligence lets you act on events as they happen.

Speech Intelligence Deployment Models: Cloud vs. On-Device

Where the pipeline runs is as much a strategic decision as a technical one.

Cloud-based deployment offers elastic scalability and centralized model updates. The tradeoffs are latency, cost at volume, and data privacy — every audio stream leaves the device and transits external infrastructure.

On-device/on-premise deployment keeps all processing local. Audio never leaves the premises, network round-trip latency is eliminated, and the system keeps operating in offline or air-gapped environments. This architecture is often preferred, and sometimes mandated, in healthcare (HIPAA-regulated data), financial services, defense, and any application where data sovereignty is non-negotiable. Picovoice's on-device speech analytics platform is built for exactly these environments.

Hybrid architectures run latency-sensitive, privacy-critical layers on-device while offloading heavier, more complex LLM reasoning to cloud infrastructure. This balances performance and cost without exposing the most sensitive data.
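One way to picture the hybrid split is as a placement rule applied to each pipeline stage. The Python sketch below is purely illustrative: the `Stage` fields, the `Target` names, and the rule itself are hypothetical and do not describe any particular vendor's architecture.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Dict, List


class Target(Enum):
    ON_DEVICE = "on_device"
    CLOUD = "cloud"


@dataclass(frozen=True)
class Stage:
    name: str
    privacy_critical: bool   # hypothetical flag: stage sees raw audio or unredacted text
    latency_sensitive: bool  # hypothetical flag: stage sits on the real-time path


def place_stage(stage: Stage) -> Target:
    """Keep privacy-critical or latency-sensitive stages local; offload the rest."""
    if stage.privacy_critical or stage.latency_sensitive:
        return Target.ON_DEVICE
    return Target.CLOUD


def plan_pipeline(stages: List[Stage]) -> Dict[str, Target]:
    """Map every stage name to the place it should run."""
    return {stage.name: place_stage(stage) for stage in stages}


# Example pipeline; the flag values are assumptions chosen for illustration.
plan = plan_pipeline([
    Stage("vad", privacy_critical=True, latency_sensitive=True),
    Stage("asr", privacy_critical=True, latency_sensitive=True),
    Stage("summary_llm", privacy_critical=False, latency_sensitive=False),
])
```

In this toy plan, VAD and ASR stay on-device and only the summarization step is sent to the cloud. A real deployment would also have to decide what data, if any, the cloud stage is allowed to receive.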

Speech Intelligence Use Cases

Speech Intelligence helps both people and machines understand speakers better, driving better business decisions and improved customer experience.

- Contact centers: Real-time agent assistance, automated QA and compliance scoring, call summarization, and customer sentiment tracking across 100% of conversations rather than a sampled subset.

- Healthcare and clinical documentation: Ambient clinical documentation during patient encounters, medical dictation, and patient interaction analytics.

- Meetings and collaboration: Live meeting transcription, auto-generated summaries, action item extraction, and knowledge base indexing make spoken knowledge searchable and actionable.

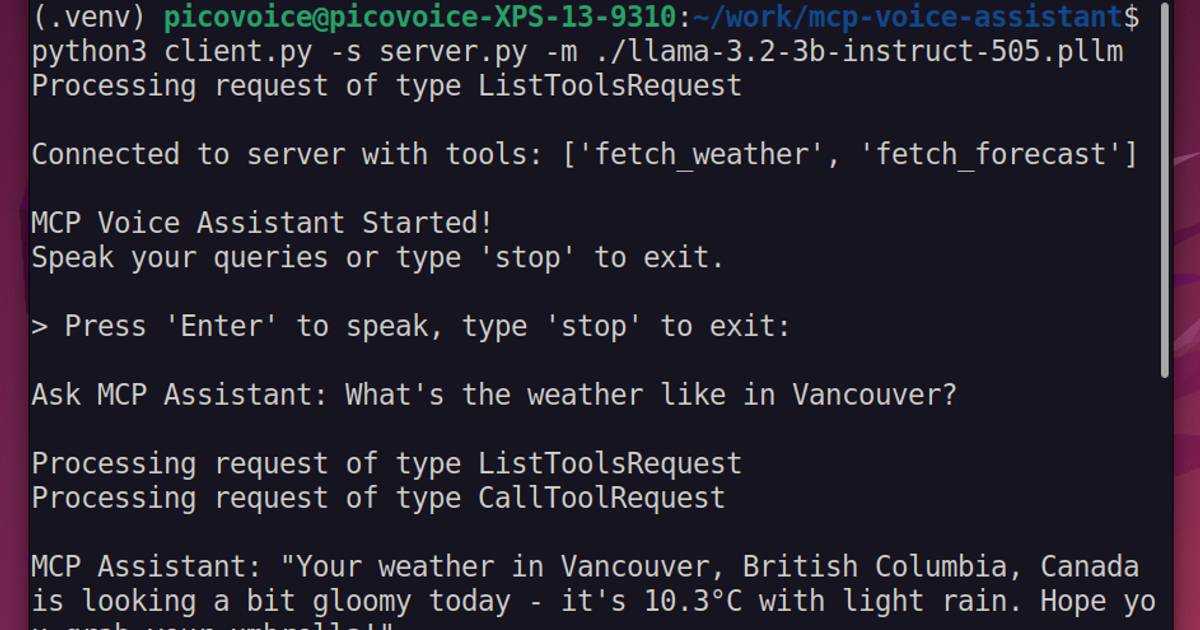

- Embedded and edge voice interfaces: Voice assistants, smart devices, in-vehicle systems, and industrial equipment require always-on, low-latency Speech Intelligence that operates without network dependency.

Advantages of Speech Intelligence

- Accelerates Decision-Making: Insights arrive instantly instead of days later.

- Reduces Manual Work: Automates transcription, tagging, summarization, and reporting.

- Improves Customer & Employee Experience: Real-time guidance leads to better outcomes.

- Unlocks New Voice-Driven Products: Speech Intelligence powers entirely new application categories.

How to Evaluate a Speech Intelligence Solution

Key technical criteria include:

- Accuracy: Word error rate (WER), punctuation accuracy, accent and language coverage, performance on domain-specific vocabulary

- Latency: Real-time response speed end-to-end across the full pipeline

- Deployment model: On-device, cloud, or hybrid — and whether the vendor supports all three

- Privacy architecture: Where does data go at each stage of the pipeline?

- Component modularity: Can you swap the ASR engine without rebuilding the full stack?

- Customization depth: Domain-specific model training, custom intent grammars
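Of the criteria above, word error rate is the easiest to check yourself. WER is conventionally computed as (substitutions + deletions + insertions) divided by the number of words in the reference transcript. The Python sketch below computes it with a word-level edit distance. The normalization step (lowercasing and whitespace splitting) is an assumption of this example; real evaluations usually also normalize punctuation and number formatting before scoring.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = word-level edit distance / number of reference words."""
    # Simplistic normalization (an assumption of this sketch).
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] holds the edit distance between the reference words
    # processed so far and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, hyp_word in enumerate(hyp, start=1):
            substitution_cost = 0 if ref_word == hyp_word else 1
            curr[j] = min(
                prev[j] + 1,                      # deletion
                curr[j - 1] + 1,                  # insertion
                prev[j - 1] + substitution_cost,  # match or substitution
            )
        prev = curr
    return prev[-1] / len(ref)


# One dropped word out of six reference words.
wer = word_error_rate("the cat sat on the mat", "the cat sat on mat")
```

Because insertions count as errors, WER can exceed 1.0 when the hypothesis is much longer than the reference. When comparing vendors, score them on the same recordings from your own domain with the same normalization.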

Next Steps

Speech is one of the richest and most underutilized data sources in enterprise environments. Speech Intelligence transforms spoken language into structured data, real-time insights, automated decisions, and new categories of voice-driven products. As organizations adopt voice across products, services, and workflows, Speech Intelligence is becoming foundational AI infrastructure — and the pipeline layer beneath the models is where accuracy, latency, and privacy are actually won or lost.

Talk to a Picovoice expert about building your Speech Intelligence stack.