TLDR: Build on-device voice control for smart TVs in Python. This tutorial shows how to add voice search to a smart TV: Speech-to-Intent handles structured voice commands for fast content discovery and TV navigation, while open-ended queries route to a local LLM for AI recommendations. All voice processing runs on-device, which reduces latency and protects user privacy.

On-Device Voice Search for Smart TV

Smart TV voice search works best when responses feel instant. Cloud-based pipelines add network latency at each processing stage, which can make the experience feel slower than expected. On-device voice AI processes speech locally, cutting out network round-trips and keeping user data private.

This tutorial shows how to build a smart TV voice assistant in Python. Custom wake words in English and Spanish activate the voice search hands-free, structured content commands route through Speech-to-Intent for instant catalog lookups, and open-ended requests go to a local LLM for AI recommendations, all on-device.

What You'll Build:

A smart TV voice assistant that:

- Activates using "Hey TV" or "Oye TV" for content search, and "Hey Assistant" or "Oye Asistente" for AI recommendations

- Searches the local content catalog instantly for structured queries

- Routes open-ended requests to a local LLM for intelligent content matching

- Responds with natural speech synthesis

With an on-device architecture, the smart TV voice assistant:

- Delivers low-latency responses with all speech processing running locally on the device's hardware.

- Keeps all user audio and viewing preferences on-device, which makes it easier to meet GDPR and CCPA privacy expectations for in-home devices.

What You'll Need:

- Python 3.9+

- Laptop or desktop with microphone and speakers for testing

- Picovoice AccessKey from the Picovoice Console

Smart TV Voice Search Architecture

This Python-based voice search system uses an on-device voice pipeline designed for instant content discovery and AI recommendations. This pattern is useful for voice-controlled TVs and hands-free content discovery experiences:

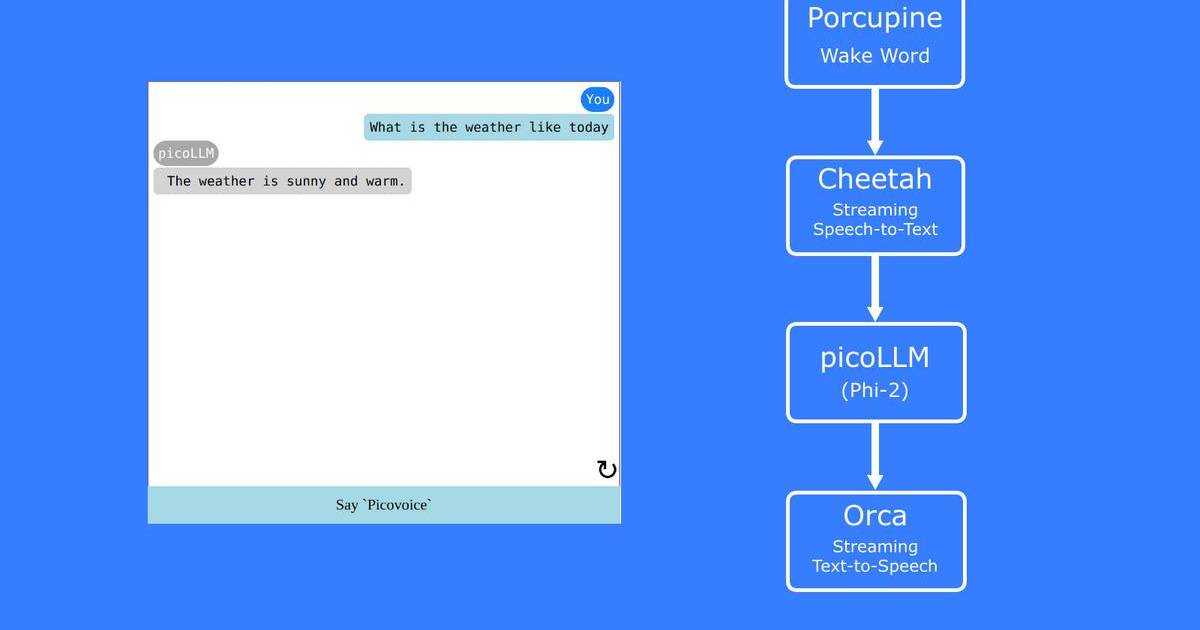

Always-Listening Activation — The voice search system sits in a low-power, idle state using Porcupine Wake Word to monitor the audio stream for four wake phrases across two languages. Detecting "Hey TV" or "Oye TV" routes to instant content search, while "Hey Assistant" or "Oye Asistente" routes to the AI recommendation assistant.

Intent Recognition for Content Search — When "Hey TV" or "Oye TV" is detected, the audio is analyzed by Rhino Speech-to-Intent. Instead of transcribing words one by one, it maps the speech directly to a structured content query — like "search action movies" or "resume watching." The system queries the local content catalog and returns results immediately without further processing.

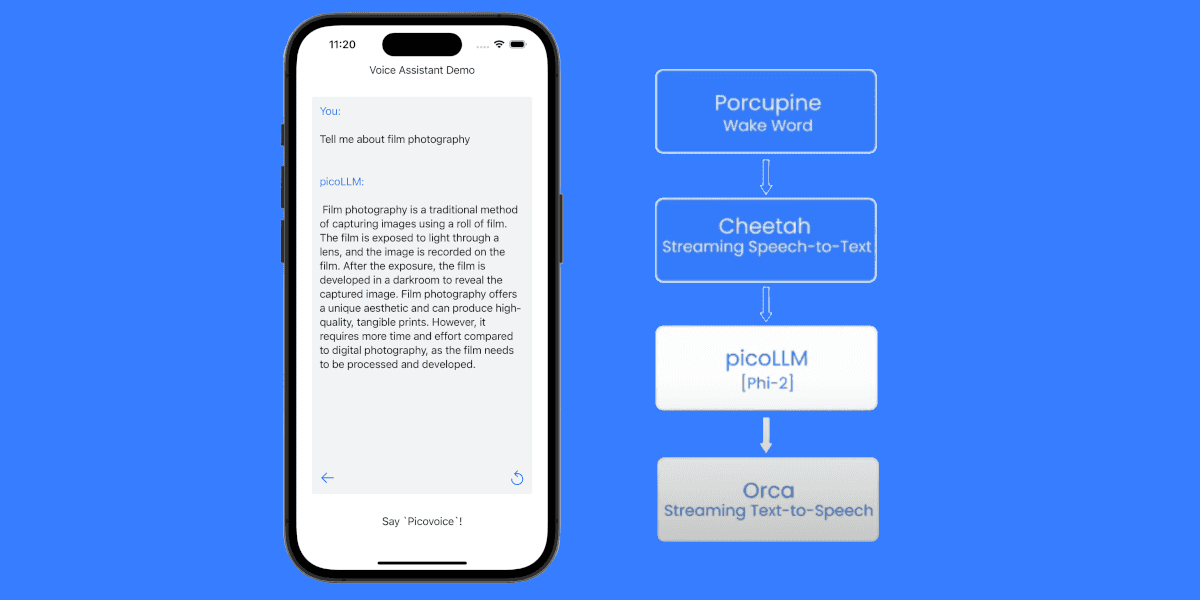

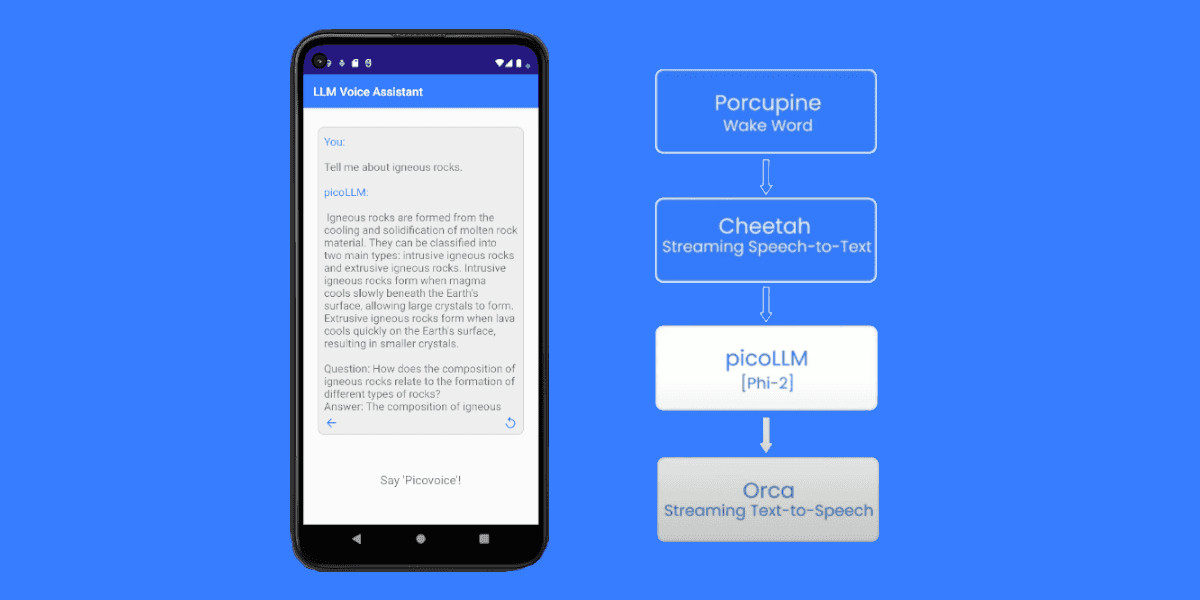

Speech-to-Text for Open-Ended Requests — When "Hey Assistant" or "Oye Asistente" is detected, the system routes directly to Cheetah Streaming Speech-to-Text. This captures free-form requests like "something funny for the whole family" that do not map cleanly to a fixed intent.

On-Device Language Model — The transcribed request is passed to picoLLM along with the device's content catalog. The local language model interprets what the viewer is looking for and matches it against available titles, returning structured recommendations without any cloud processing.

Voice Response Generation — Orca Streaming Text-to-Speech converts the response into natural speech, completing the hands-free loop from query to recommendation.

Content Search Workflow:

AI Recommendation Workflow:

Porcupine Wake Word, Rhino Speech-to-Intent, Cheetah Streaming Speech-to-Text, and Orca Streaming Text-to-Speech support multiple languages including English, Spanish, German and more. Build multilingual voice search to serve international markets by training models in the languages your target regions speak.

Train Custom Wake Words for Smart TV Voice Search

- Sign up for a Picovoice Console account and navigate to the Porcupine page.

- Enter your first wake phrase for content commands (e.g., "Hey TV") and test it using the microphone button.

- Click "Train," select the target platform, and download the .ppn model file.

- Repeat steps 2 & 3 to train an additional wake word for AI recommendations (e.g., "Hey Assistant").

- Train Spanish wake words: select "Spanish" as the target language in the console, train "Oye TV" and "Oye Asistente," test them, and download both .ppn model files.

Porcupine can detect multiple wake words simultaneously. For instance, it can support both "Oye Asistente" (Spanish) and "Hey Assistant" (English) at the same time, both routing to the same recommendation voice assistant. For tips on designing an effective wake word, review the choosing a wake word guide.

Define Voice Commands for Content Discovery

- Create an empty Rhino Speech-to-Intent Context.

- Click the "Import YAML" button in the top-right corner of the console and paste the YAML provided below to define intents for structured content search commands.

- Test the model with the microphone button and download the .rhn context file for your target platform.

Refer to the Rhino Syntax Cheat Sheet for more details on defining custom voice commands for a domain-specific model.

Train Custom Voice Commands to Discover TV Content using YAML Context:

This context handles the most common structured content search commands. For open-ended requests like "something relaxing to watch tonight" or "a movie similar to what I watched last night," the assistant will use the picoLLM recommendation path.
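The YAML for this context is not reproduced in this section. As a stand-in, the sketch below keeps a hypothetical context in a Python string and writes it to a file that can be pasted into the console's YAML import. The intent names (`searchContent`, `resumePlayback`), slot names (`genre`, `contentType`), phrases, and the file name are all assumptions made for illustration. The expression syntax follows the Rhino Syntax Cheat Sheet conventions as understood here: square brackets for required alternatives, parentheses for optional words, and `$slotType:slotName` for slots.

```python
from pathlib import Path

# Hypothetical Rhino context. Intent names, slot names, and phrases are
# illustrative assumptions, not the tutorial's original YAML.
CONTEXT_YAML = """\
context:
  expressions:
    searchContent:
      - "[search, find] (for) (some) $genre:genre $contentType:contentType"
      - "[search, find] (for) (some) $genre:genre"
    resumePlayback:
      - "resume (watching)"
      - "continue (watching)"
  slots:
    genre:
      - action
      - comedy
      - drama
      - documentary
    contentType:
      - movies
      - shows
"""


def write_context(path: str = "smart_tv_context.yml") -> Path:
    """Save the context text so it can be pasted into the console's YAML import."""
    target = Path(path)
    target.write_text(CONTEXT_YAML, encoding="utf-8")
    return target
```

Keeping the slot values short and distinct should make later catalog lookups simpler, since each slot value can be compared directly against a catalog field.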

Set Up a Local Large Language Model

- Navigate to the picoLLM page in Picovoice Console.

- Select a model. This tutorial uses llama-3.2-3b-instruct-505.pllm.

- Download the .pllm file and place it in your project directory.

Install Required Python Libraries for Smart TV Voice Search

Install all required Python SDKs and dependencies using pip:

- Porcupine Wake Word Python SDK: pvporcupine

- Rhino Speech-to-Intent Python SDK: pvrhino

- Cheetah Streaming Speech-to-Text Python SDK: pvcheetah

- picoLLM Python SDK: picollm

- Orca Streaming Text-to-Speech Python SDK: pvorca

- Picovoice Python Recorder library: pvrecorder

- Picovoice Python Speaker library: pvspeaker
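After installing, a quick way to confirm that every package is importable is a small check like the one below. This is a convenience sketch rather than part of the original tutorial; the helper name `missing_packages` is hypothetical, and it assumes each package's import name matches its pip name.

```python
import importlib.util

# Import names assumed to match the pip package names listed above.
REQUIRED_PACKAGES = [
    "pvporcupine",
    "pvrhino",
    "pvcheetah",
    "picollm",
    "pvorca",
    "pvrecorder",
    "pvspeaker",
]


def missing_packages(names=REQUIRED_PACKAGES):
    """Return the packages from `names` that cannot be found on this interpreter."""
    return [name for name in names if importlib.util.find_spec(name) is None]


if __name__ == "__main__":
    missing = missing_packages()
    if missing:
        print("Install with: pip install " + " ".join(missing))
    else:
        print("All required packages are installed.")
```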

Add Wake Word Detection for Hands-Free Activation

The following code captures audio from your microphone and detects the custom wake word locally:

Porcupine Wake Word processes each audio frame on-device with acoustic models optimized for living room environments. By listening for multiple wake words simultaneously, it routes viewers to the right system path instantly, such as content search or AI recommendations.
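The routing described above can be sketched as follows. This is a minimal sketch, not the tutorial's original listing. It assumes one Porcupine instance per language (each loaded with that language's model file), that keyword files are ordered search phrase first and assistant phrase second, and that `spanish_model_path` points to the Spanish Porcupine model file. The route names and function names are hypothetical.

```python
from typing import Callable, List, Optional, Sequence, Tuple

SEARCH = "content_search"
RECOMMEND = "ai_recommendation"

# One entry per language: (process function, route for each keyword index).
Detector = Tuple[Callable[[Sequence[int]], int], List[str]]


def route_for_frame(frame: Sequence[int], detectors: List[Detector]) -> Optional[str]:
    """Return the route of the first wake word found in this frame, else None."""
    for process, routes in detectors:
        keyword_index = process(frame)  # Porcupine returns -1 when nothing is detected
        if keyword_index >= 0:
            return routes[keyword_index]
    return None


def listen_for_route(
    access_key: str,
    english_paths: List[str],
    spanish_paths: List[str],
    spanish_model_path: str,
) -> str:
    """Block until a wake word is heard. Needs a microphone and the Picovoice SDKs."""
    import pvporcupine
    from pvrecorder import PvRecorder

    # Assumption: each list is ordered [search phrase, assistant phrase].
    english = pvporcupine.create(access_key=access_key, keyword_paths=english_paths)
    spanish = pvporcupine.create(
        access_key=access_key,
        keyword_paths=spanish_paths,
        model_path=spanish_model_path,
    )
    detectors = [
        (english.process, [SEARCH, RECOMMEND]),
        (spanish.process, [SEARCH, RECOMMEND]),
    ]
    recorder = PvRecorder(frame_length=english.frame_length)
    recorder.start()
    try:
        while True:
            route = route_for_frame(recorder.read(), detectors)
            if route is not None:
                return route
    finally:
        recorder.stop()
        recorder.delete()
        english.delete()
        spanish.delete()
```

Separating `route_for_frame` from the microphone loop keeps the routing decision testable without audio hardware or an AccessKey.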

Process Content Search Commands

Once the wake word is detected, Rhino Speech-to-Intent listens for structured content queries:

Rhino Speech-to-Intent directly infers intent from speech without requiring a separate transcription step, enabling instant content catalog lookups for structured queries.
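A minimal sketch of that step is shown below. It assumes a Rhino instance created with `pvrhino.create(access_key=..., context_path=...)` and a `read_frame` callable such as `PvRecorder.read`. The helper name `collect_intent` and the `max_frames` guard are assumptions added for illustration.

```python
from typing import Callable, Dict, Optional, Sequence, Tuple


def collect_intent(
    rhino,
    read_frame: Callable[[], Sequence[int]],
    max_frames: int = 500,
) -> Optional[Tuple[str, Dict[str, str]]]:
    """Feed audio frames to Rhino until it finalizes an inference.

    Returns (intent, slots) when the command is understood, otherwise None.
    """
    for _ in range(max_frames):
        is_finalized = rhino.process(read_frame())
        if is_finalized:
            inference = rhino.get_inference()
            if inference.is_understood:
                return inference.intent, dict(inference.slots)
            return None
    # Guard against an endless loop if no inference is finalized in time.
    return None
```

With the real SDK, `read_frame` would typically be the `read` method of a `PvRecorder` created with `frame_length=rhino.frame_length`.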

Handle User Recommendations with AI

When viewers say "Hey Assistant" or "Oye Asistente," the system routes directly to streaming speech-to-text and a local LLM for open-ended content discovery:

This approach uses Cheetah Streaming Speech-to-Text to capture the viewer's open-ended request, then picoLLM to match it against the local content catalog and generate structured recommendations — all without leaving the device.
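The recommendation path can be sketched as follows. This sketch assumes a Cheetah instance from `pvcheetah.create(...)` and a picoLLM instance from `picollm.create(...)`. The prompt wording, the catalog fields (`title`, `genre`), and the helper names are assumptions for illustration, not the tutorial's original code.

```python
from typing import Callable, Dict, List, Sequence


def transcribe_request(
    cheetah,
    read_frame: Callable[[], Sequence[int]],
    max_frames: int = 1000,
) -> str:
    """Stream frames to Cheetah until it reports an endpoint (a pause in speech)."""
    parts = []
    for _ in range(max_frames):
        partial, is_endpoint = cheetah.process(read_frame())
        parts.append(partial)
        if is_endpoint:
            break
    parts.append(cheetah.flush())  # collect any text still buffered
    return "".join(parts).strip()


def build_prompt(request: str, catalog: List[Dict[str, str]]) -> str:
    """Put the local catalog and the viewer's request into one prompt."""
    titles = "\n".join(f"- {item['title']} ({item['genre']})" for item in catalog)
    return (
        "You recommend titles from a TV catalog. "
        "Only suggest titles from this list:\n"
        f"{titles}\n"
        f"Viewer request: {request}\n"
        "Reply with up to three matching titles."
    )


def recommend(pllm, request: str, catalog: List[Dict[str, str]], token_limit: int = 128) -> str:
    """Ask the local model for recommendations and return its text reply."""
    dialog = pllm.get_dialog()  # applies the model's chat template
    dialog.add_human_request(build_prompt(request, catalog))
    result = pllm.generate(dialog.prompt(), completion_token_limit=token_limit)
    return result.completion.strip()
```

Listing the catalog inside the prompt is one simple way to keep the model's suggestions tied to titles that actually exist on the device. For a large catalog, a pre-filter before prompting would likely be needed to stay within the model's context window.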

Add Voice Response Generation for Smart TV

Transform text responses into natural speech for TV playback:

Orca Streaming Text-to-Speech generates natural voice responses with first audio output in under 130ms, providing immediate verbal feedback when a viewer speaks a command.
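A minimal sketch of streaming synthesis is shown below. It assumes `orca_stream` comes from `orca.stream_open()` on an instance created with `pvorca.create(...)`, and that `play` is any callable that accepts PCM samples. The helper name `speak` and the word-by-word chunking are assumptions for illustration.

```python
from typing import Callable, Sequence


def speak(text: str, orca_stream, play: Callable[[Sequence[int]], object]) -> int:
    """Stream `text` through Orca word by word and play audio as it arrives.

    Returns the total number of PCM samples handed to `play`.
    """
    total = 0
    for word in text.split():
        # synthesize() returns None until Orca has buffered enough text
        pcm = orca_stream.synthesize(word + " ")
        if pcm is not None:
            play(pcm)
            total += len(pcm)
    pcm = orca_stream.flush()  # synthesize whatever text is still buffered
    if pcm is not None:
        play(pcm)
        total += len(pcm)
    return total
```

With the real SDKs, `play` could wrap a `PvSpeaker` created with `sample_rate=orca.sample_rate` and `bits_per_sample=16`. As far as this sketch assumes, `PvSpeaker.write` may accept only part of a buffer when its internal buffer is full, so a production wrapper should check the returned sample count and retry the remainder.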

Route Content Search Commands to Local Catalog

Map structured intents to content catalog queries and format results for voice delivery:
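The mapping can be sketched in plain Python as below. The catalog entries are made-up sample data, and the intent and slot names (`searchContent`, `resumePlayback`, `genre`, `contentType`) are hypothetical; they need to match whatever the Rhino context actually defines.

```python
from typing import Dict, List, Optional

# Hypothetical sample catalog; a real device would load this from local storage.
CATALOG = [
    {"title": "Steel Horizon", "genre": "action", "type": "movies"},
    {"title": "Night Convoy", "genre": "action", "type": "movies"},
    {"title": "Laugh Track", "genre": "comedy", "type": "shows"},
    {"title": "Deep Ocean", "genre": "documentary", "type": "shows"},
]


def search_catalog(slots: Dict[str, str], catalog: List[Dict[str, str]] = CATALOG) -> List[Dict[str, str]]:
    """Filter the catalog by whichever slots were spoken."""
    genre = slots.get("genre")
    content_type = slots.get("contentType")
    return [
        item
        for item in catalog
        if (genre is None or item["genre"] == genre)
        and (content_type is None or item["type"] == content_type)
    ]


def format_results(results: List[Dict[str, str]], limit: int = 3) -> str:
    """Turn search results into a short sentence suitable for speech."""
    if not results:
        return "I couldn't find anything matching that."
    titles = [item["title"] for item in results[:limit]]
    if len(titles) == 1:
        return f"I found {titles[0]}."
    return "I found " + ", ".join(titles[:-1]) + " and " + titles[-1] + "."


def handle_intent(intent: str, slots: Dict[str, str], last_watched: Optional[str] = None) -> str:
    """Map a recognized intent to a spoken response."""
    if intent == "searchContent":
        return format_results(search_catalog(slots))
    if intent == "resumePlayback":
        return f"Resuming {last_watched}." if last_watched else "There is nothing to resume."
    return "Sorry, I didn't catch that."
```

For example, `handle_intent("searchContent", {"genre": "action", "contentType": "movies"})` returns "I found Steel Horizon and Night Convoy." with the sample catalog above.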

Complete Python Code for Smart TV Voice Search

This implementation combines all components for a smart TV voice search system:
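The full listing is not reproduced in this section. As a rough outline of how the pieces fit together, the sketch below shows only the control flow: wait for a wake word, run the matching pipeline, and speak the reply. Every callable is injected, so the Picovoice engines are not referenced directly. The function name `run_assistant`, the route names, and the `max_turns` parameter are assumptions for illustration.

```python
from typing import Callable, Dict, Optional


def run_assistant(
    wait_for_route: Callable[[], str],
    handlers: Dict[str, Callable[[], str]],
    speak: Callable[[str], object],
    max_turns: Optional[int] = None,
) -> int:
    """Run the wake word -> pipeline -> spoken reply loop.

    `wait_for_route` blocks until a wake word is detected and returns a route name.
    `handlers` maps each route name to a function that listens and returns reply text.
    `speak` converts reply text to audio. Returns the number of completed turns.
    """
    turns = 0
    while max_turns is None or turns < max_turns:
        route = wait_for_route()
        handler = handlers.get(route)
        reply = handler() if handler else "Sorry, that wake word is not configured."
        speak(reply)
        turns += 1
    return turns
```

In a real build, `handlers` would hold one function for the Rhino content search path and one for the Cheetah plus picoLLM recommendation path, and `speak` would wrap Orca and the speaker output.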

Run the Smart TV Voice Assistant

To run the voice search system, update the model paths to match your local files and have your Picovoice AccessKey ready:
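The original run command is not shown in this section. One way to pass the AccessKey and model paths to a script is a small argument parser like the sketch below. The flag names are hypothetical and would need to match whatever the actual script expects.

```python
import argparse


def parse_args(argv=None):
    """Parse the AccessKey and model file paths from the command line."""
    parser = argparse.ArgumentParser(description="Smart TV voice search demo")
    parser.add_argument("--access_key", required=True, help="Picovoice AccessKey")
    parser.add_argument(
        "--keyword_paths",
        nargs="+",
        required=True,
        help="Porcupine wake word files (.ppn)",
    )
    parser.add_argument("--context_path", required=True, help="Rhino context file (.rhn)")
    parser.add_argument("--picollm_model_path", required=True, help="picoLLM model file (.pllm)")
    return parser.parse_args(argv)
```

Passing the AccessKey on the command line, or reading it from an environment variable, avoids hard-coding it in source files.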

Example Interactions

Content Search:

Content Search (Spanish Activation):

Resume Playback:

AI Recommendation:

AI Recommendation (Spanish Activation):

If you want fully Spanish voice commands (not just Spanish wake words), you can build a Spanish Rhino Speech-to-Intent context and route Spanish commands through the same content search pipeline.

You can start building your own commercial or non-commercial projects using Picovoice's self-service Console.
