TLDR: This tutorial shows how to build an on-device restaurant voice assistant in Python that handles customer orders, reservations, service requests, and menu questions, all without cloud APIs. Implement four core components: wake word activation, speech-to-intent for order capture, local LLM for menu questions, and text-to-speech for voice responses.

Restaurants need voice ordering systems that are fast, reliable, and predictable, especially during peak service hours. Cloud-based voice stacks introduce network latency that slows down customer interactions and creates awkward pauses during ordering. Traditional speech-to-text and Natural Language Understanding (NLU) pipelines also struggle with complex orders that include modifications, dietary preferences, or substitutions.

This tutorial solves both problems with an on-device voice architecture. For structured voice commands like orders and reservations, speech-to-intent maps voice directly to JSON without any intermediate transcription step.

For open-ended questions about menu items, allergens, or recommendations, streaming speech-to-text feeds a local large language model (LLM) that handles unpredictable phrasing.

Both paths run entirely on-device with voice feedback via text-to-speech.

This guide demonstrates how to build a restaurant voice agent with:

- Wake word activation for hands-free operation

- Speech-to-intent for restaurant orders, modifications, reservations, and service requests

- Structured JSON output for POS and reservation system integration

- Streaming speech-to-text and a local LLM for menu questions, dietary inquiries, and recommendations

- Complete voice feedback loop with streaming text-to-speech for order confirmation

All processing runs locally, enabling low latency, privacy-compliant speech recognition, and full control over customer voice data.

Prerequisites:

- Python 3.9+

- A laptop or computer with microphone and speakers for testing

- Picovoice AccessKey from the Picovoice Console

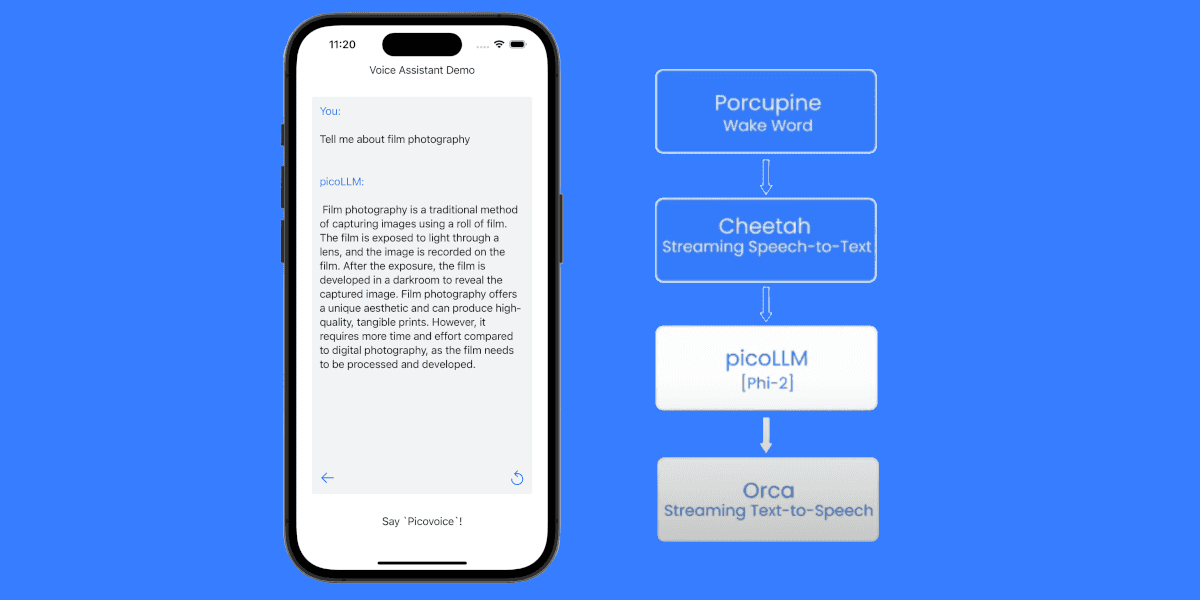

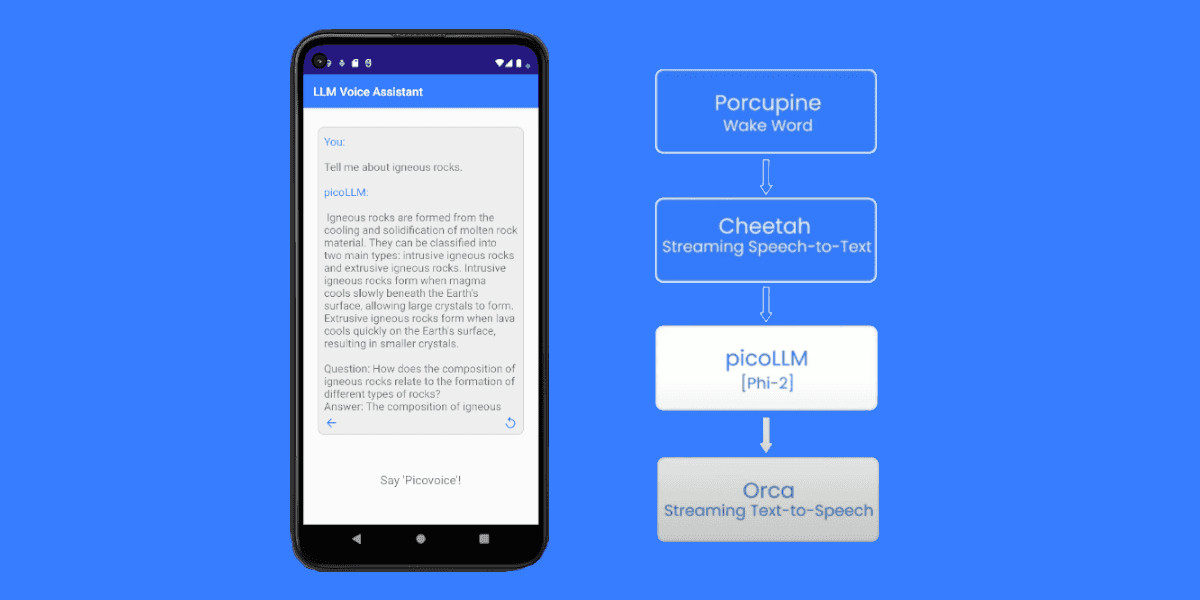

Voice AI Architecture for Restaurant Voice Agent

The restaurant voice agent architecture combines deterministic speech-to-intent for structured orders with generative AI for conversational menu inquiries. This provides both the reliability of rule-based systems and the flexibility of LLM-powered interactions.

Porcupine Wake Word for Voice Activation: Listens continuously for wake phrases and routes to different processing paths, e.g., "Hey Restaurant" for orders, "Hey Menu" for questions.

Rhino Speech-to-Intent for Structured Commands: Maps voice directly to structured intents without intermediate transcription. Produces POS-ready JSON in a single inference step, avoiding the error propagation from Speech-to-Text + Natural Language Understanding pipelines.

Cheetah Streaming Speech-to-Text for Open-Ended Questions: Transcribes menu inquiries in real time for the LLM path. Cheetah delivers cloud-competitive accuracy with sub-second latency.

picoLLM for Local LLM Inference: Generates natural responses to questions about ingredients, allergens, or recommendations, handling unpredictable phrasing.

Orca Streaming Text-to-Speech for Voice Responses: Converts all responses to natural speech. Orca can begin audio output while the LLM is still generating text, minimizing perceived latency.

All Picovoice voice processing models support multiple languages including English, Spanish, French, German, and more.

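Order Placement Workflow: The customer says "Hey Restaurant", Porcupine detects the wake word, Rhino infers the intent and slots from the spoken order, the resulting JSON is routed to the POS or reservation system, and Orca speaks the confirmation.

Menu Inquiry Workflow: The customer says "Hey Menu", Porcupine detects the wake word, Cheetah transcribes the question, picoLLM generates an answer, and Orca speaks it.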

Looking for QSR drive-thru voice ordering solutions? See Challenges of QSR Drive-Thrus and Voice AI.

Train Custom Wake Words for Restaurant Voice Assistant

- Sign up for a Picovoice Console account and navigate to the Porcupine page.

- Enter your wake phrase for ordering (e.g., "Hey Restaurant") and test it using the microphone button.

- Click "Train," select the target platform, and download the .ppn model file.

- Repeat the previous two steps to train an additional wake word for menu queries (e.g., "Hey Menu").

Porcupine Wake Word can detect multiple wake words simultaneously. For instance, it can support both "Hey Restaurant" for placing orders and "Hey Menu" for menu questions. For tips on designing an effective wake word, review the choosing a wake word guide.

Define Voice Commands for Orders, Reservations, and Service

- Create an empty context for speech-to-intent processing.

- Click the "Import YAML" button in the top-right corner of the console and paste the YAML provided below to define intents for customer ordering.

- Test the model with the microphone button and download the .rhn context file for your target platform.

You can refer to the Rhino Syntax Cheat Sheet for more details on building custom contexts.

YAML Context for Customer Order Commands:

This context handles:

- Customer ordering

- Order modifications

- Reservations

- Service requests

For menu questions, dietary inquiries, or recommendations, customers say "Hey Menu" to route to the conversational AI path.

Set Up Local LLM for Menu and Dietary Questions

- Navigate to the picoLLM page in Picovoice Console.

- Select a model. This tutorial uses llama-3.2-1b-instruct-505.pllm.

- Download the .pllm file and place it in your project directory.

Install Python SDKs for Restaurant Voice AI

The following Python SDKs provide the complete restaurant voice AI stack for hands-free operations. Install all required Python SDKs and dependencies using pip:

- Wake word detection: pvporcupine

- Speech-to-intent processing: pvrhino

- Streaming speech-to-text: pvcheetah

- Local LLM inference: picollm

- Text-to-speech synthesis: pvorca

- Audio input/output: pvrecorder, pvspeaker
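After installation, a quick import check can confirm the environment is ready before any audio code runs. The sketch below is an illustration rather than part of the Picovoice SDKs: it uses only the standard library and assumes each pip package exposes a module of the same name. The helper name find_missing is hypothetical.

```python
import importlib.util

# Module names assumed to match the pip package names listed above.
REQUIRED_MODULES = [
    "pvporcupine", "pvrhino", "pvcheetah", "picollm",
    "pvorca", "pvrecorder", "pvspeaker",
]


def find_missing(modules=REQUIRED_MODULES):
    """Return the top-level modules that cannot be located in this environment."""
    return [name for name in modules if importlib.util.find_spec(name) is None]


if __name__ == "__main__":
    missing = find_missing()
    if missing:
        print("Missing packages, try: pip install " + " ".join(missing))
    else:
        print("All voice SDKs are importable.")
```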

Add Hands-Free Voice Activation

The following code captures audio from your microphone and detects the custom wake words locally:
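A minimal sketch of this step, assuming two custom keyword files trained in the Console. The file names, the route labels, and the helper names route_for_keyword and wait_for_wake_word are placeholders chosen for illustration; the Porcupine and PvRecorder calls follow the public Python SDKs.

```python
# File names are placeholders for the .ppn files downloaded from the Console.
# Their order defines the keyword index that Porcupine reports on detection.
KEYWORD_PATHS = ["hey-restaurant.ppn", "hey-menu.ppn"]
ROUTES = ["order", "menu"]


def route_for_keyword(keyword_index):
    """Map a Porcupine keyword index to a processing path (None if no detection)."""
    if 0 <= keyword_index < len(ROUTES):
        return ROUTES[keyword_index]
    return None


def wait_for_wake_word(access_key, keyword_paths=KEYWORD_PATHS):
    """Block until one of the wake words is heard, then return its route."""
    # Imported lazily so the routing helper works without the SDKs installed.
    import pvporcupine
    from pvrecorder import PvRecorder

    porcupine = pvporcupine.create(access_key=access_key, keyword_paths=keyword_paths)
    recorder = PvRecorder(frame_length=porcupine.frame_length, device_index=-1)
    recorder.start()
    try:
        while True:
            # process() returns the index of the detected keyword, or -1.
            route = route_for_keyword(porcupine.process(recorder.read()))
            if route is not None:
                return route
    finally:
        recorder.stop()
        recorder.delete()
        porcupine.delete()
```

Calling wait_for_wake_word("YOUR_ACCESS_KEY") would return "order" or "menu" depending on which phrase was spoken, which the caller can use to pick the next stage.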

Wake word processing happens on-device, triggering the rest of the pipeline when the wake phrase is recognized. This enables hands-free activation ideal for kiosks, counter service, and dine-in table ordering.

Add Voice Command Recognition to Process Customer Orders

Once the wake word is detected, speech-to-intent processing listens for structured customer orders:
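One way this could look is sketched below. It assumes a .rhn context file exported from the Console; the helper names inference_to_order and listen_for_order are illustrative, and the intent and slot names depend entirely on your own context definition.

```python
def inference_to_order(inference):
    """Convert a Rhino inference into a JSON-serializable dict, or None if not understood."""
    if not inference.is_understood:
        return None
    return {"intent": inference.intent, "slots": dict(inference.slots)}


def listen_for_order(access_key, context_path):
    """Stream microphone frames into Rhino until it finalizes an inference."""
    # Imported lazily so the conversion helper works without the SDKs installed.
    import pvrhino
    from pvrecorder import PvRecorder

    rhino = pvrhino.create(access_key=access_key, context_path=context_path)
    recorder = PvRecorder(frame_length=rhino.frame_length, device_index=-1)
    recorder.start()
    try:
        # process() returns True once Rhino has reached a decision.
        while not rhino.process(recorder.read()):
            pass
        return inference_to_order(rhino.get_inference())
    finally:
        recorder.stop()
        recorder.delete()
        rhino.delete()
```

The returned dict, or None when the utterance did not match the context, can then be passed to an order handler such as process_order.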

The process_order function is defined in the POS integration section below.

Handle Menu and Dietary Questions with Local LLM

For menu inquiries, the system routes to streaming speech-to-text and local LLM for natural language processing:
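The sketch below shows one possible shape for this path. The MENU dictionary, the prompt wording, the one-second endpoint duration, and the 128-token limit are all assumptions made for illustration; a real deployment would load menu data from the restaurant's own records. The Cheetah and picoLLM calls follow the public Python SDKs, but parameters may differ between SDK versions.

```python
# Hypothetical menu data used only for this example.
MENU = {
    "margherita pizza": {"price": 12.0, "allergens": ["gluten", "dairy"]},
    "garden salad": {"price": 8.5, "allergens": []},
}


def build_system_prompt(menu):
    """Describe the menu in plain text so the LLM answers from known items."""
    lines = ["You are a restaurant assistant. Answer briefly using only this menu:"]
    for name, info in menu.items():
        allergens = ", ".join(info["allergens"]) or "none"
        lines.append(f"- {name}: ${info['price']:.2f}, allergens: {allergens}")
    return "\n".join(lines)


def transcribe_question(access_key):
    """Collect a spoken question with Cheetah until it detects an endpoint."""
    import pvcheetah
    from pvrecorder import PvRecorder

    cheetah = pvcheetah.create(access_key=access_key, endpoint_duration_sec=1.0)
    recorder = PvRecorder(frame_length=cheetah.frame_length, device_index=-1)
    recorder.start()
    transcript = ""
    try:
        while True:
            partial, is_endpoint = cheetah.process(recorder.read())
            transcript += partial
            if is_endpoint:
                # flush() returns any text still buffered inside the engine.
                return transcript + cheetah.flush()
    finally:
        recorder.stop()
        recorder.delete()
        cheetah.delete()


def answer_question(access_key, model_path, question, menu=MENU):
    """Generate an answer with picoLLM, using the menu as system context."""
    import picollm

    pllm = picollm.create(access_key=access_key, model_path=model_path)
    try:
        dialog = pllm.get_dialog(system=build_system_prompt(menu))
        dialog.add_human_request(question)
        result = pllm.generate(prompt=dialog.prompt(), completion_token_limit=128)
        return result.completion.strip()
    finally:
        pllm.release()
```

Placing the menu in the system prompt keeps answers grounded in known items, though a small model can still produce inaccurate allergen information, so responses on safety-critical topics deserve review.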

This approach uses streaming speech-to-text to transcribe natural speech, then a local LLM to understand the query and generate an appropriate response based on menu information.

Generate Voice Responses with Text-to-Speech

Transform text responses into natural speech:

Streaming text-to-speech generates natural voice responses, providing clear order confirmations and menu information.

Integrate Voice Stack with POS and Restaurant Management Systems

Route customer orders from structured JSON to restaurant systems:
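As an illustration, process_order could dispatch on the intent name and hand the JSON payload to the restaurant system. In this sketch the intent names (orderItem, makeReservation, requestService), the slot names, and the outbox list standing in for a POS API are all hypothetical; substitute the names from your own context and the client for your own POS.

```python
import json


def _order_item(slots):
    return f"Added {slots.get('quantity', '1')} x {slots.get('item', 'item')} to your order."


def _reservation(slots):
    party = slots.get("partySize", "your party")
    return f"Reserved a table for {party} at {slots.get('time', 'the requested time')}."


def _service(slots):
    return f"A staff member will bring {slots.get('request', 'assistance')} shortly."


# Hypothetical intent names; they must match the intents in your Rhino context.
HANDLERS = {
    "orderItem": _order_item,
    "makeReservation": _reservation,
    "requestService": _service,
}


def process_order(order, outbox):
    """Send the order JSON to the outbox and return a confirmation to be spoken."""
    if order is None or order["intent"] not in HANDLERS:
        return "Sorry, I didn't catch that. Could you repeat it?"
    # The outbox list stands in for a POS or reservation system call.
    outbox.append(json.dumps(order, sort_keys=True))
    return HANDLERS[order["intent"]](order["slots"])
```

Returning the confirmation text from the handler keeps the POS routing and the voice feedback in one place, so the caller only has to pass the string to text-to-speech.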

Complete Python Code for Restaurant Voice Assistant

This implementation combines all components for a complete restaurant voice assistant:

Run the Restaurant Voice Assistant

To run the restaurant voice assistant, update the model paths to match your local files and have your Picovoice AccessKey ready:
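One way to pass these values in is through command-line arguments. The flag names below are assumptions chosen for this sketch, not options defined by the Picovoice SDKs.

```python
import argparse


def parse_args(argv=None):
    """Parse the AccessKey and model paths needed by the assistant."""
    parser = argparse.ArgumentParser(description="On-device restaurant voice assistant")
    parser.add_argument("--access_key", required=True,
                        help="AccessKey from the Picovoice Console")
    parser.add_argument("--keyword_paths", nargs=2, required=True,
                        metavar=("ORDER_PPN", "MENU_PPN"),
                        help="Wake word files for the order and menu paths")
    parser.add_argument("--context_path", required=True,
                        help="Rhino .rhn context file")
    parser.add_argument("--picollm_model_path", required=True,
                        help="picoLLM .pllm model file")
    return parser.parse_args(argv)
```

With a script saved under a name of your choosing, such as restaurant_assistant.py, the invocation would then list the AccessKey, the two .ppn files, the .rhn file, and the .pllm file after the corresponding flags.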

Example interactions demonstrating the hybrid architecture:

The hybrid architecture ensures structured commands process instantly while open-ended questions receive natural, informative responses.

You can start building your own commercial or non-commercial projects using Picovoice's self-service Console.
