Running large language models (LLMs) on Android devices presents both challenges and opportunities. Mobile devices have limited computational power, memory, and battery life, which makes it hard to run popular AI models such as Microsoft's Phi-2 and Google's Gemma at a usable speed. However, model compression and hardware-accelerated inference are changing this. picoLLM offers a variety of hyper-compressed, open-weight models that run on-device using the picoLLM Inference Engine. Local inference keeps user data on the device, avoids network latency, and keeps AI-powered applications available without a connection. These benefits make picoLLM a good fit for users who want robust AI capabilities without depending on the cloud.

The picoLLM Inference Engine is a cross-platform library that supports Linux, macOS, Windows, Raspberry Pi, Android, iOS, and web browsers. picoLLM has SDKs for Python, Node.js, Android, iOS, and JavaScript.

The following guide will walk you through all the steps required to run a local LLM on an Android device. For this guide, we're going to use the picoLLM Chat app as a jumping-off point.

Setup

- Install Android Studio.

- Clone the picoLLM repository from GitHub:

- Connect an Android device in developer mode or launch an Android emulator.

Running the Chat App

- Open the picoLLM Chat app with Android Studio.

- Go to Picovoice Console to download a picoLLM model file (.pllm) and retrieve your AccessKey.

- Upload the .pllm file to your device using Android Studio's Device Explorer or using adb push:

- Replace the value of ${YOUR_ACCESS_KEY_HERE} in MainActivity.java with your Picovoice AccessKey.

- Build and run the demo on the connected device.

- The app will prompt you to load a .pllm file. Browse to where you uploaded the model file (ensure the Large files toggle is on) and select the file.

- Enter a text prompt to begin a chat with the LLM.
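Two slips are easy to make in the steps above: leaving the ${YOUR_ACCESS_KEY_HERE} placeholder unreplaced, and selecting a file that is not a .pllm model. The following is a minimal sketch of a pre-flight check for both. The class and method names (PreflightCheck, isAccessKeySet, isPllmFileName) are hypothetical helpers for illustration; they are not part of the demo app or the picoLLM SDK.

```java
import java.util.Locale;

public final class PreflightCheck {

    // Placeholder exactly as it appears in the guide; it must be replaced before building.
    static final String ACCESS_KEY_PLACEHOLDER = "${YOUR_ACCESS_KEY_HERE}";

    private PreflightCheck() {}

    /** Returns true when the key is non-blank and is not the unreplaced placeholder. */
    static boolean isAccessKeySet(String accessKey) {
        if (accessKey == null) {
            return false;
        }
        String trimmed = accessKey.trim();
        return !trimmed.isEmpty() && !trimmed.equals(ACCESS_KEY_PLACEHOLDER);
    }

    /** Returns true when the file name ends with the .pllm extension (case-insensitive). */
    static boolean isPllmFileName(String fileName) {
        return fileName != null
                && fileName.toLowerCase(Locale.ROOT).endsWith(".pllm");
    }

    public static void main(String[] args) {
        // Hypothetical inputs, for demonstration only.
        System.out.println("key set: " + isAccessKeySet(ACCESS_KEY_PLACEHOLDER));
        System.out.println("model file: " + isPllmFileName("model.pllm"));
    }
}
```

A check like this only catches obvious mistakes; it does not confirm that the AccessKey is valid or that the model file is intact.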

Integrating into your App

- Ensure the mavenCentral() repository has been added to the top-level build.gradle:

- Add picollm-android as a dependency in your app's build.gradle:

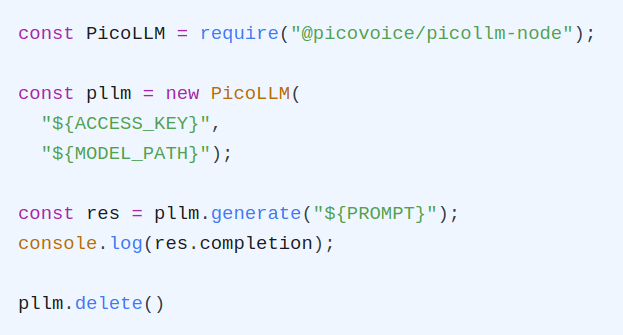

- Create an instance of the engine:

- Pass in a text prompt to generate an LLM completion:

- When done, be sure to release the resources explicitly:
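The last three steps (create, generate, release) form a lifecycle in which the release step must run even if generation fails. The sketch below shows that pattern with a try/finally block. It deliberately uses a hypothetical stand-in interface, TextEngine, and a fake implementation so it runs without the SDK; it is not the picollm-android API. In a real app, replace the stand-in with the SDK's engine class and confirm the exact class and method names in the picoLLM Android documentation.

```java
public final class EngineLifecycleSketch {

    /**
     * Hypothetical stand-in for an on-device LLM engine; NOT the picollm-android API.
     * Assumption to verify against the SDK docs: the real engine is believed to be
     * built via PicoLLM.Builder (setAccessKey, setModelPath), queried via generate(...),
     * and released via delete().
     */
    interface TextEngine {
        String generate(String prompt);
        void release();
    }

    /** Runs one prompt and always releases the engine, even if generation throws. */
    static String completeOnce(TextEngine engine, String prompt) {
        try {
            return engine.generate(prompt);
        } finally {
            engine.release(); // native resources are not reclaimed by the garbage collector
        }
    }

    /** Fake engine for demonstration: echoes the prompt and records whether it was released. */
    static final class FakeEngine implements TextEngine {
        boolean released = false;

        @Override
        public String generate(String prompt) {
            if (released) {
                throw new IllegalStateException("engine already released");
            }
            return "echo: " + prompt;
        }

        @Override
        public void release() {
            released = true;
        }
    }

    public static void main(String[] args) {
        FakeEngine engine = new FakeEngine();
        System.out.println(completeOnce(engine, "Hello"));
        System.out.println("released = " + engine.released);
    }
}
```

In a chat app the engine would usually stay alive across many prompts and be released once, for example when the activity is destroyed; the single-shot helper here only illustrates that release should not be skipped on an error path.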