Offline Voice AI in a browser may seem contradictory. Doesn't using a web browser mean you're online? But even when a device is connected to the Internet, running voice recognition offline in the browser eliminates variable network latency and provides intrinsic privacy, because audio never leaves the device. Local voice recognition also enables always-listening behaviour, which is impractical to run continuously through cloud-based services.

Try It

Here is a demonstration application that uses the Porcupine wake word engine and the Rhino Speech-to-Intent engine to control the lights in a home. All speech recognition runs privately, offline, and in the browser.

What's Under the Hood?

Running voice AI offline on the web is challenging. We had to extend our in-house deep learning framework to run on WebAssembly with SIMD support, and the models run in the background using Web Workers. Together with the Web Audio API, which provides access to microphone data, these technologies form the foundation for capturing and processing audio in the browser. The Picovoice SDKs for the Web abstract away these cutting-edge web technologies.
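Two of the browser-side concerns mentioned above can be sketched in plain JavaScript. This is an illustrative sketch, not Picovoice's implementation (the SDKs handle these details internally). `supportsWasmSimd` detects WebAssembly SIMD by asking the engine to validate a tiny module that uses SIMD opcodes. The hypothetical `FrameAssembler` class converts the Float32 samples delivered by the Web Audio API (typically at 44.1 or 48 kHz) into fixed-size 16-bit PCM frames. The 16 kHz output rate and 512-sample frame length are assumptions for illustration; check your engine's documentation for the values it actually expects.

```javascript
// Illustrative sketch only; not the Picovoice SDK's internal implementation.

// Detect WebAssembly SIMD by validating a tiny module whose single function
// uses SIMD opcodes (i8x16.splat, i8x16.popcnt). Engines without SIMD reject it.
function supportsWasmSimd() {
  if (typeof WebAssembly !== "object") return false;
  const bytes = new Uint8Array([
    0, 97, 115, 109, 1, 0, 0, 0, // "\0asm" magic + version 1
    1, 5, 1, 96, 0, 1, 123, // type section: one func type () -> v128
    3, 2, 1, 0, // function section: one function of type 0
    10, 10, 1, 8, 0, 65, 0, 253, 15, 253, 98, 11, // code: i32.const 0; i8x16.splat; i8x16.popcnt; end
  ]);
  return WebAssembly.validate(bytes);
}

// Hypothetical helper: turns Float32 audio chunks of any size into fixed-size
// Int16 frames at outputRate, resampling by linear interpolation.
// NOTE: no anti-aliasing low-pass filter is applied before downsampling.
class FrameAssembler {
  constructor(inputRate, outputRate = 16000, frameLength = 512) {
    this.step = inputRate / outputRate; // input samples consumed per output sample
    this.frameLength = frameLength;
    this.pos = 0; // read position relative to the next chunk's start; a value in [-1, 0) lies between prev and chunk[0]
    this.prev = 0; // last sample of the previous chunk
    this.frame = new Int16Array(frameLength);
    this.fill = 0; // samples already written into this.frame
  }

  // Returns an array of completed Int16Array frames (possibly empty).
  push(chunk) {
    const frames = [];
    if (chunk.length === 0) return frames;
    const sampleAt = (k) => (k < 0 ? this.prev : chunk[k]);
    // Only interpolate while both neighbouring samples are available.
    while (Math.floor(this.pos) + 1 < chunk.length) {
      const i = Math.floor(this.pos);
      const frac = this.pos - i;
      const x = sampleAt(i) + (sampleAt(i + 1) - sampleAt(i)) * frac;
      const s = Math.max(-1, Math.min(1, x)); // clamp to [-1, 1]
      this.frame[this.fill++] = Math.round(s < 0 ? s * 0x8000 : s * 0x7fff);
      if (this.fill === this.frameLength) {
        frames.push(this.frame);
        this.frame = new Int16Array(this.frameLength);
        this.fill = 0;
      }
      this.pos += this.step;
    }
    // Carry the fractional position and boundary sample into the next chunk.
    this.pos -= chunk.length;
    this.prev = chunk[chunk.length - 1];
    return frames;
  }
}
```

In a real page, `push` would typically be called from an AudioWorklet processor's `process()` callback, which receives 128 samples per call, and each returned frame would be posted to the Web Worker running the recognition engine. Because this resampler skips low-pass filtering, it can alias high-frequency content; a production resampler would filter before decimating.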

Start Building

- Porcupine Wake Word: Wake Word Detection, Keyword Spotting, Voice Activation, and Always-Listening Voice Commands

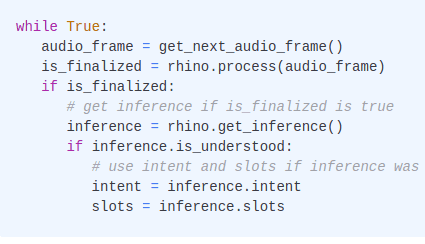

- Rhino Speech-to-Intent: Voice Commands, Domain-Specific Natural Language Understanding (NLU), and Spoken Language Understanding (SLU)

- Leopard Speech-to-Text: Speech-to-Text (STT), Automatic Speech Recognition (ASR), Large-Vocabulary Speech Recognition, and Open-Domain Transcription

- Cobra Voice Activity Detection: Voice Activity Detection (VAD)

Open-Source Demos

Below are demos in vanilla JavaScript and React. These minimal applications show how to integrate the Picovoice SDKs into web applications.

How do I create custom wake words or speech-to-intent contexts for the web?

You can use the Picovoice Console to create custom wake words and to design and train Speech-to-Intent contexts.