Developers face a myriad of choices when building voice products. Although the end results are similar, different approaches carry significant implications for accuracy, responsiveness, and efficiency.

A common misconception is that every voice feature requires a speech-to-text (STT) component. It does not. In many common use cases, STT actually leads to a suboptimal solution. A generic STT engine needs a few orders of magnitude more resources than a specialized solution to recognize a meaningfully large lexicon. It also discards contextual domain information along the way, which reduces accuracy.

Below are a few common voice design patterns and our recommendations for when to use each approach.

Voice Activation & Always-Listening Commands

Keyword spotting, i.e. detecting a key phrase within a stream of audio, is a simple yet powerful form of voice recognition. Voice activation, or wake word detection (e.g. “OK Google”), is a special form of keyword spotting.

A keyword spotter can also be used to create always-listening voice commands. The main benefit of always-listening voice commands (versus follow-on commands) is user convenience: the user does not have to utter the wake phrase first. For example, a music player can immediately adjust the volume via always-listening commands such as “volume up”, or move within a playlist using “play next”.
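As a minimal sketch of the music player example, the code below maps detected phrases to actions. It assumes a keyword spotter that reports, for each audio frame, the index of the detected phrase or -1 when nothing was detected. The `MusicPlayer` class, the `dispatch` function, and the simulated detection stream are all hypothetical and stand in for a real engine.

```python
from typing import Callable, Dict, Iterable, List


class MusicPlayer:
    """Toy player used only to make the example observable."""

    def __init__(self) -> None:
        self.volume = 5
        self.track = 0

    def volume_up(self) -> None:
        self.volume = min(self.volume + 1, 10)

    def play_next(self) -> None:
        self.track += 1


def dispatch(detections: Iterable[int],
             phrases: List[str],
             handlers: Dict[str, Callable[[], None]]) -> None:
    """Run the handler for each detected phrase.

    Assumption: the spotter yields one integer per audio frame, the index
    of the detected phrase in `phrases`, or -1 when nothing was detected.
    """
    for index in detections:
        if index >= 0:
            handlers[phrases[index]]()


player = MusicPlayer()
phrases = ["volume up", "play next"]
handlers = {"volume up": player.volume_up, "play next": player.play_next}

# Simulated per-frame results standing in for a real keyword spotter.
dispatch([-1, 0, -1, 1, 0], phrases, handlers)
print(player.volume, player.track)
```

No wake phrase appears anywhere in the loop: each command acts as soon as it is detected, which is the convenience described above.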

Matching the stream of STT output text against a list of phrases or keywords would result in abysmally low accuracy while using an unnecessary amount of computing resources. For power-sensitive applications like wearable devices, the power requirements alone make this approach a non-starter.

Picovoice’s wake word engine Porcupine incurs minimal latency and achieves outstanding accuracy while requiring minimal compute resources. It is so efficient that it can run on a low-power microcontroller while detecting dozens of phrases concurrently. Hence, it is well suited to recognizing a set of fixed phrases (both wake words and immediate commands).

NLU within a Specific Domain

A common requirement in Voice User Interface (VUI) design involves understanding complex naturally-spoken commands within a given domain of interest. For example:

“Make me a matcha latte with almond milk”

This cannot be addressed via keyword spotting, as the number of possible phrase permutations grows exponentially with the complexity of the domain. Keeping commands natural and flexible increases the number of phrasings to accommodate even further.

Classic Natural Language Understanding (NLU) solutions require two steps. First, speech is converted into text by an STT engine. Second, the user intent is extracted from the transcribed text using an NLU engine. This two-step approach requires significant compute resources and it gives suboptimal accuracy: errors introduced by STT impair NLU performance (e.g. transcribing “matcha” as “much a”).
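The error propagation in the two-step approach can be illustrated with a toy second stage. The sketch below assumes the transcript has already been produced by some STT engine and uses a regular expression as a stand-in for an NLU engine. The pattern, the intent name `orderBeverage`, and the slot names are hypothetical.

```python
import re
from typing import Dict, Optional

# Toy stand-in for an NLU engine: one pattern for one kind of command.
ORDER_PATTERN = re.compile(
    r"make me an? (?P<beverage>matcha latte|espresso|americano)"
    r" with (?P<milk>almond|oat|whole) milk"
)


def extract_intent(transcript: str) -> Optional[Dict[str, str]]:
    """Return the intent and slots found in a transcript, or None."""
    match = ORDER_PATTERN.search(transcript.lower())
    if match is None:
        return None
    return {"intent": "orderBeverage", **match.groupdict()}


# A correct transcript is understood.
good = extract_intent("Make me a matcha latte with almond milk")

# The same utterance with an STT error: the NLU step never sees "matcha".
bad = extract_intent("Make me a much a latte with almond milk")

print(good)
print(bad)
```

The second stage has no access to the audio, so it cannot recover from the mistake made in the first stage.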

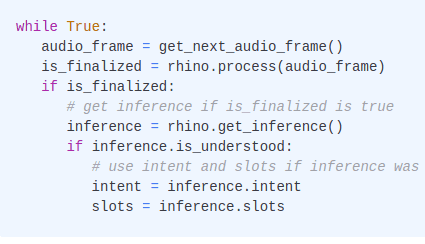

Picovoice’s Speech-to-Intent engine Rhino takes advantage of the context and creates a jointly-optimized inference engine. The result is an end-to-end model that outperforms alternatives in accuracy and runtime efficiency. The output of the Speech-to-Intent engine is the user’s intent as structured data:
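For the latte order above, such structured output might look like the following. This is an illustrative sketch only: the `Inference` class, its field names, the intent name, and the slot names are assumptions made for this example, not the engine's documented schema.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional


@dataclass
class Inference:
    """Hypothetical container for a Speech-to-Intent result."""
    is_understood: bool
    intent: Optional[str] = None
    slots: Dict[str, str] = field(default_factory=dict)


# What the latte order might resolve to in a hypothetical coffee-maker context.
result = Inference(
    is_understood=True,
    intent="orderBeverage",
    slots={"beverage": "matcha latte", "milk": "almond milk"},
)

# Application code can act on the fields directly; no text parsing is needed.
if result.is_understood:
    print(result.intent, result.slots)
```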

Picovoice’s Speech-to-Intent is the only NLU engine that is efficient enough to run even on power-efficient, low-cost microcontrollers, recognizing a lexicon of hundreds of words and many complex commands in real-time.

Voice Search

Suppose we wish to search a large body of speech data, such as meetings, lectures, or call center recordings. A common approach is to convert the speech to text and then make the resulting corpus searchable via known text indexing algorithms.

This approach suffers from STT errors, which cause portions of the data to be missing from search results. The problem is especially pronounced for proper nouns such as names, brands, and products, as STT engines typically struggle with such specialized vocabulary.

Picovoice’s Speech-to-Index resolves this shortcoming by performing phonetic (acoustic) indexing efficiently and searching directly on indexed sounds instead of text. In effect, it makes sounds searchable. In addition, the Picovoice acoustic indexing process is a few orders of magnitude faster than speech-to-text conversion.
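To make the idea of searching sounds rather than text concrete, here is a toy sketch. It assumes the audio has already been reduced to a timed sequence of phoneme symbols, and it looks for exact matches only. It is not Picovoice's algorithm, and a real acoustic index would have to tolerate variation in pronunciation. The phoneme symbols, timings, and the `search` function are hypothetical.

```python
from typing import List, Tuple

# Each entry is (phoneme, start time in seconds); hand-written for illustration.
PhonemeIndex = List[Tuple[str, float]]


def search(index: PhonemeIndex, query: List[str]) -> List[float]:
    """Return the start time of every exact occurrence of `query` in `index`."""
    symbols = [phoneme for phoneme, _ in index]
    n = len(query)
    return [
        index[i][1]
        for i in range(len(symbols) - n + 1)
        if symbols[i:i + n] == query
    ]


# Hypothetical index of a recording that mentions a brand name at 1.2 s.
recording: PhonemeIndex = [
    ("HH", 0.0), ("AY", 0.1),
    ("P", 1.2), ("IY", 1.3), ("K", 1.4), ("OW", 1.5),
    ("V", 1.6), ("OY", 1.7), ("S", 1.8),
]
query = ["P", "IY", "K", "OW", "V", "OY", "S"]  # assumed pronunciation

hits = search(recording, query)
print(hits)
```

Because the match happens on sounds, the search does not depend on how a transcriber would have spelled the name.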

Open-domain Large Vocabulary Speech Recognition

An STT engine is required when a voice use case is not limited to a given domain or confined to a fixed set of commands. A familiar example is question answering, where the user can ask the voice assistant anything. Furthermore, STT is particularly useful when there is an inherent interest in capturing the transcription, as in meetings, note-taking, and voice typing.

Picovoice offers two variants of STT: file-based Leopard and streaming Cheetah. The streaming STT engine outputs text in real-time with minimal delay for visual feedback. This can improve the user experience in voice typing applications. The file-based engine has higher accuracy, though live visual feedback is not provided. Both engines can run on-device in real-time without an internet connection, even on commodity single-board computers such as Raspberry Pi.

Hybrid Use-Case Scenarios

Oftentimes, a mix of the aforementioned products is needed to provide a complete voice solution. For example, a VR headset can use a keyword spotter to provide hands-free triggers and Speech-to-Intent to navigate through the menu. An STT engine can be used for note-taking and messaging, while Speech-to-Index can be used to search through voicemail.