Monica Lam has been a Professor in the Computer Science department at Stanford University since 1988. She holds a B.Sc. from the University of British Columbia and a Ph.D. from Carnegie Mellon University. She is the faculty director of the Open Virtual Assistant Lab (OVAL), which builds Genie, the open-source virtual assistant.

Professor Lam and Picovoice met through a shared passion: enabling private voice interactions and making voice technology available to all, not exclusive to Big Tech.

[Q1]: How did voice assistants capture your interest? Why is voice important?

Voice is the human-to-human interface. Now that computers can finally communicate with humans using human language, this makes digital information accessible to everybody – that includes the blind, the preliterate, and the illiterate. Voice assistants today are a gateway to many available voice interfaces. They will continue to grow to become personal and will be able to give advice and recommendations to the user. This is an extremely rich research topic, and the results can make a huge impact on society. I want to advance and democratize voice technology, which includes covering low-resource languages.

[Q2]: Thinking of problems that you tackled throughout your career, how was your experience with speech recognition?

The problem of recognizing speech is by and large solved. The challenging problem now is natural language understanding: how do we understand the meaning of what users are saying or typing? This requires machine learning, which normally means that we need tons of training data. The typical process is to find out what users would say or ask for, and then have an expert annotate the meaning of those sentences. Because there is so much variety in what people ask for and how they express it, this takes a lot of data, which is prohibitively expensive to acquire. As a result, not everybody has been able to afford it. However, advances in technology make it possible to reduce the amount of data required. Our research shows that the amount of training data needed can be reduced by two orders of magnitude by synthesizing most of the data with the help of large language models. Yet this still requires domain expertise, which, again, not everybody has. This is why we’ve initiated WWvW (World Wide Voice Web), and I appreciate Picovoice’s support and work to make it available to anyone.

[Q3]: Where do you see voice technology going?

I think that all applications in the future will be multimodal with a voice component. Today there are over 20 million web developers, and I think we will have 20 million voice interface developers in the future. This interface is useful across the board for many different applications. What excites me the most is the idea that in the future we will have an assistant in our ears at all times via earbuds. We won’t need to pull out a phone to get access to digital information.

[Q4]: The voice market is dominated by Big Tech. What are the risks of such dominance?

The World Wide Web is an open platform where companies can freely put up information for all to see. If the voice market ends up being controlled by just a couple of companies, many businesses will be hurt, which will eventually hurt consumers. Moreover, virtual assistants are in a position to collect a massive amount of personal information about their users. Leaving all personal information in the hands of a couple of companies would give them too much power over consumers. It is thus a huge risk to privacy as well.

[Q5]: Why is it risky to prioritize convenience and accuracy over privacy?

Consumers today assume that privacy is a price that needs to be paid if they want convenience. They do not know that it is technically possible to have both, because Big Tech does not offer both.

People want convenience, and tech has made so many things convenient. Privacy is important too: it is the huge aggregation of personal profiles in social media that has made many misinformation campaigns possible.

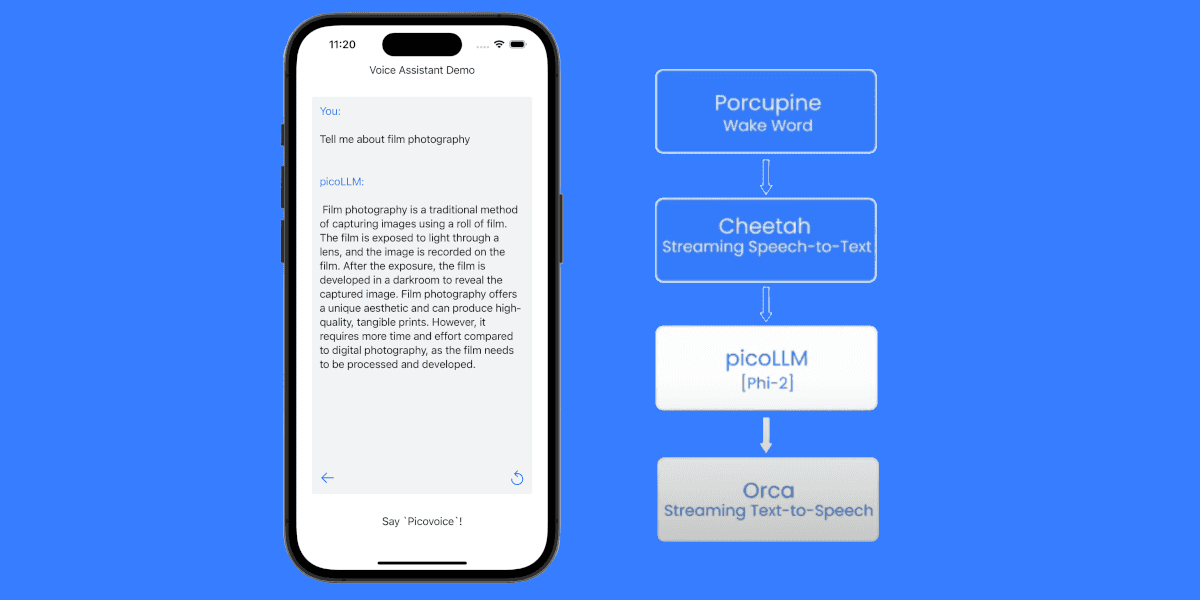

[Q6]: Can you tell us a bit about Genie, the voice assistant? Why did you start it?

Genie is an open-source, privacy-preserving virtual assistant that we have developed in our Stanford Open Virtual Assistant Lab. It is the first assistant that is built to support conversations, rather than just simple commands. It supports many of the most popular skills, such as playing songs, news, and podcasts, recommending restaurants, and reporting the weather. It can also be used to control IoT devices, through our collaboration with Home Assistant, an open-source home gateway company. Unlike with commercial assistants, none of the IoT data leaves your house, so it stays private. What is more significant is that the assistant demonstrates how our open-source Genie toolset makes it possible for a small team to create an assistant. I started this project because we want to advance voice technology and make it publicly available so every company can create voice interfaces easily.

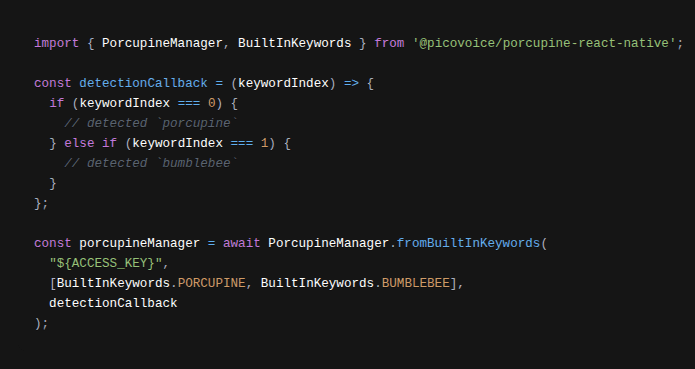

[Q7]: Hey Genie is powered by Picovoice Porcupine. Why did you choose Picovoice?

Picovoice is a leader in the field of wake words. We are very impressed with how easy it is to get a wake word and how well it performs. We have tried alternatives, but they do not perform as well.

[Q8]: Why is it difficult to build a wake word engine that works across platforms?

It is hard because we need to detect the wake word however different people pronounce it, and it has to be processed on-device. This normally requires a lot of data, and the model needs to be small enough to fit on the device. Not every organization can afford to record hundreds of people to train a wake word and then optimize the trained model for the target platform. Picovoice solves this problem by enabling instant training on its web console.

[Q9]: The voice AI market is known for having limited platform support, yet Picovoice models run on everything from single-board computers to web browsers. Why is that important?

I’m excited that Picovoice is one of those rare enterprises with a vision that we share. There are so many voice interfaces on so many platforms that we need an easy-to-use Picovoice Console that works for a large variety of platforms. It is this shared vision that makes Picovoice a great partner for our project.

[Q10]: Lastly, what do you think Picovoice should do or focus on next?

Echo cancellation! It is an important component of a voice interface.