Commercial speech-to-text systems typically bundle Speaker Diarization as a built-in feature, so developers generally use the diarization that ships with their speech-to-text engine. Using a different provider's Speaker Diarization usually means calling two speech-to-text APIs, one for transcription and one for diarization, which roughly doubles the cost. Hence, developers rarely use cloud speech-to-text APIs solely for Speaker Diarization.

When developers need standalone Speaker Diarization, most of them consider two options: building it in-house or adopting FOSS (Free and Open-Source Software). Building is generally a dead end unless diarization is part of the core business, so free and open-source software is the most common path. Open-source models are free to use, yet they may require significant internal resources to adapt, deploy, and maintain, which can end up costing more than running two expensive cloud APIs.

Top Free & Open-Source Speaker Diarization APIs and SDKs:

The most popular free and open-source Speaker Diarization libraries are pyannote, NVIDIA NeMo, Kaldi, SpeechBrain, and Google's UIS-RNN. Each has pros and cons, so developers need to research them thoroughly to find the one that best fits their needs.

Pyannote:

pyannote-audio, commonly known as pyannote, started as a research project led by Hervé Bredin and later became one of the most popular Speaker Diarization projects, with 3.5K stars on GitHub at the time of writing. It is written in Python on top of the PyTorch Machine Learning framework. pyannote supports only Python 3.8+ on Linux and macOS, which limits developers' ability to build cross-platform solutions. It ships with a set of pre-trained models that simplify development. The initial models are trained on VoxCeleb, a dataset of celebrity interviews extracted from YouTube. Thus, developers might need to retrain the models to handle real-life use cases such as phone calls or meetings.

NVIDIA NeMo:

The NVIDIA NeMo toolkit has a separate Speaker Diarization component, which can be used with NVIDIA NeMo ASR or with another speech-to-text solution. Compared to libraries created and maintained by individuals, NeMo is more likely to be maintained over the long term: it depends on NVIDIA GPUs, so NVIDIA has a commercial interest in keeping it alive. Although NVIDIA doesn’t offer direct support, there is an active NeMo community on GitHub.
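A standalone diarization component only answers "who spoke when", so its output has to be merged with the word timestamps produced by whichever speech-to-text engine is used. The sketch below shows one simple way to do that, assuming the ASR engine returns per-word start and end times and the diarizer returns speaker turns in seconds. The `Word` and `Segment` types and the "maximum time overlap wins" rule are hypothetical, illustrative choices; this is not NeMo's API.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Segment:
    """Hypothetical diarization turn: one speaker talking from start to end (seconds)."""
    start: float
    end: float
    speaker: str


@dataclass
class Word:
    """Hypothetical ASR word with start/end timestamps (seconds)."""
    text: str
    start: float
    end: float


def overlap(a_start: float, a_end: float, b_start: float, b_end: float) -> float:
    """Length of the intersection of two time intervals (0.0 if they are disjoint)."""
    return max(0.0, min(a_end, b_end) - max(a_start, b_start))


def assign_speakers(words: List[Word], segments: List[Segment]) -> List[Optional[str]]:
    """Label each word with the speaker whose turn overlaps it the most.

    Words that overlap no speaker turn are labeled None.
    """
    labels: List[Optional[str]] = []
    for word in words:
        best_speaker: Optional[str] = None
        best_overlap = 0.0
        for seg in segments:
            o = overlap(word.start, word.end, seg.start, seg.end)
            if o > best_overlap:
                best_speaker, best_overlap = seg.speaker, o
        labels.append(best_speaker)
    return labels


def to_transcript(words: List[Word], labels: List[Optional[str]]) -> List[str]:
    """Group consecutive words with the same speaker into 'SPEAKER: text' lines."""
    lines: List[str] = []
    current: Optional[str] = None
    buffer: List[str] = []
    for word, speaker in zip(words, labels):
        # A speaker change closes the current line.
        if speaker != current and buffer:
            lines.append(f"{current or 'UNKNOWN'}: {' '.join(buffer)}")
            buffer = []
        current = speaker
        buffer.append(word.text)
    if buffer:
        lines.append(f"{current or 'UNKNOWN'}: {' '.join(buffer)}")
    return lines


if __name__ == "__main__":
    words = [Word("hello", 0.0, 0.4), Word("there", 0.5, 0.9),
             Word("hi", 1.2, 1.4), Word("back", 1.5, 1.8)]
    segments = [Segment(0.0, 1.0, "SPEAKER_1"), Segment(1.1, 2.0, "SPEAKER_2")]
    for line in to_transcript(words, assign_speakers(words, segments)):
        print(line)
```

Running it prints `SPEAKER_1: hello there` followed by `SPEAKER_2: hi back`. Maximum overlap is the simplest rule; words that straddle a speaker change or fall into overlapped speech may need smarter handling in practice.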

Kaldi:

Kaldi is one of the best-known open-source automatic speech recognition toolkits. (Before Whisper, Kaldi was the go-to open-source option, and it is still prominent among researchers.) Kaldi allows developers to train models from scratch or to use pre-trained x-vector networks and PLDA backend models. Using Kaldi for Speaker Diarization can be challenging for beginners or for those looking for a quick implementation. Like other open-source projects, Kaldi doesn’t offer enterprise support, yet it has an active community on GitHub to tackle issues and bugs.

SpeechBrain:

SpeechBrain is another research project that grew into a popular open-source speech toolkit with Speaker Diarization support. Dr. Mirco Ravanelli and Dr. Titouan Parcollet created SpeechBrain with the PyTorch Machine Learning framework, and it has grown with the support of corporate sponsors. Similar to pyannote, SpeechBrain only supports Python 3.7+ on Linux and macOS. SpeechBrain provides Speaker Diarization models based on ECAPA-TDNN and on Kaldi-inspired x-vectors. Like the others, SpeechBrain does not offer dedicated support but has a community on GitHub to address issues and bugs.
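Embedding-based diarization, as with the ECAPA-TDNN and x-vector models mentioned above, generally extracts one speaker embedding per speech segment and then clusters the embeddings so that each cluster corresponds to one speaker. The sketch below illustrates the idea with a simple online (leader-follower) clustering over cosine similarity, assuming the embeddings are already computed. The function names and the 0.7 threshold are hypothetical; SpeechBrain and other toolkits typically use more robust methods such as spectral or agglomerative clustering. It does show how a similarity threshold, rather than a preset speaker count, can decide how many speakers there are.

```python
import math
from typing import List, Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity of two vectors (0.0 if either has zero norm)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def cluster_embeddings(embeddings: List[List[float]], threshold: float = 0.7) -> List[int]:
    """Assign a speaker index to each segment embedding, in order.

    Each embedding is compared with the centroid (running mean) of every
    existing cluster. If the best similarity reaches `threshold`, the segment
    joins that cluster; otherwise it starts a new speaker. The number of
    speakers is therefore not fixed in advance.
    """
    centroids: List[List[float]] = []
    counts: List[int] = []
    labels: List[int] = []
    for emb in embeddings:
        best, best_sim = -1, -1.0
        for i, centroid in enumerate(centroids):
            sim = cosine_similarity(emb, centroid)
            if sim > best_sim:
                best, best_sim = i, sim
        if best >= 0 and best_sim >= threshold:
            # Update the running mean of the matched cluster.
            n = counts[best]
            centroids[best] = [(c * n + e) / (n + 1) for c, e in zip(centroids[best], emb)]
            counts[best] = n + 1
            labels.append(best)
        else:
            # No cluster is similar enough: open a new speaker.
            centroids.append(list(emb))
            counts.append(1)
            labels.append(len(centroids) - 1)
    return labels


if __name__ == "__main__":
    # Toy 3-dimensional "embeddings"; real ones have hundreds of dimensions.
    segments = [[1.0, 0.0, 0.0], [0.9, 0.1, 0.0], [0.0, 1.0, 0.0],
                [0.05, 0.95, 0.0], [0.95, 0.0, 0.05]]
    print(cluster_embeddings(segments))
```

On the toy input, the first, second, and fifth segments land in speaker 0 and the third and fourth in speaker 1. The threshold is the key trade-off: set it too low and distinct speakers merge; set it too high and one speaker splits into several.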

UIS-RNN by Google:

UIS-RNN (Unbounded Interleaved-State Recurrent Neural Network) is a fully supervised Speaker Diarization algorithm by Google. No pre-trained model is available: Google does not share the data, code, or model of the speaker embedding (d-vector) system used in the original paper, as it depends heavily on Google's internal infrastructure and proprietary data. Developers therefore need to train a model from scratch using their own data and speaker embeddings. Training and prediction require a GPU.

Top Commercial Speaker Diarization APIs and SDKs:

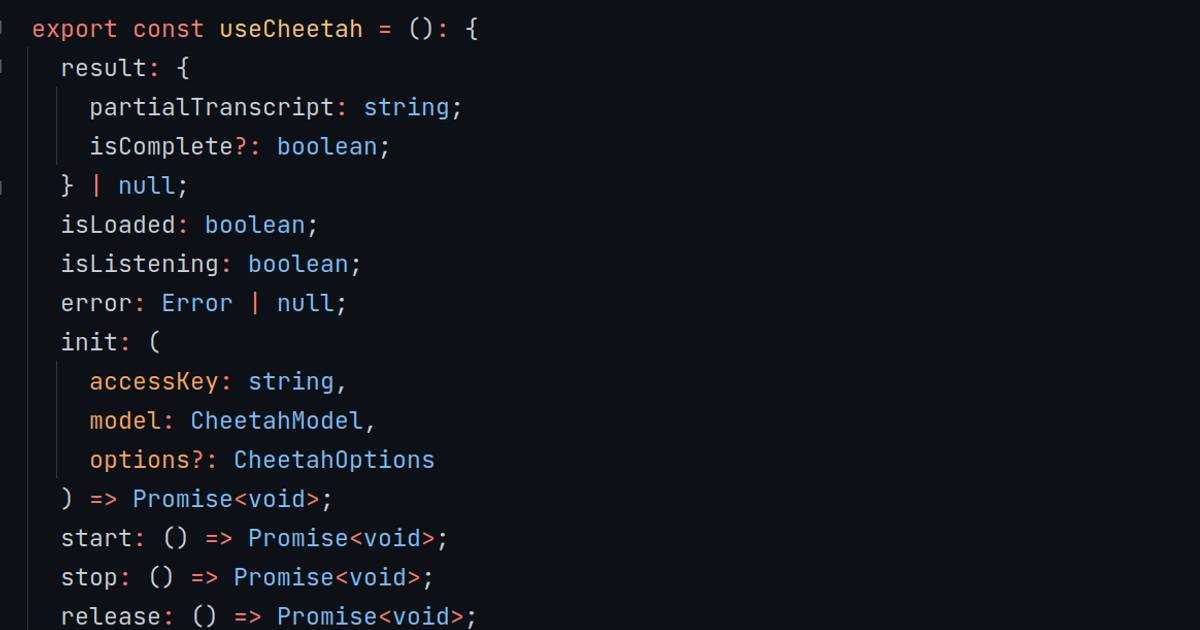

Most speech-to-text API providers offer Speaker Diarization as a feature of their speech-to-text service. Picovoice likewise offers Speaker Diarization with Leopard Speech-to-Text, but it also offers a standalone engine: Falcon Speaker Diarization.

Falcon Speaker Diarization by Picovoice:

Falcon is a highly accurate, efficient, and modular on-device Speaker Diarization engine powered by deep learning. Falcon:

- Works with any transcription engine, including Whisper.

- Identifies an uncapped number of speakers without requiring the number of speakers as input.

- Recognizes speakers even in multilingual settings.

Moreover, according to an open-source speaker diarization benchmark, Falcon is 100x more efficient than pyannote, the best-known open-source alternative, and 5x more accurate than the Speaker Diarization feature of Google Speech-to-Text.
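Accuracy comparisons between diarization engines are commonly expressed as Diarization Error Rate (DER): the share of reference speech that is missed, falsely detected, or attributed to the wrong speaker, after finding the best one-to-one mapping between the engine's speaker labels and the reference labels. The sketch below computes a frame-level DER, assuming one speaker per frame (no overlapped speech) and no forgiveness collar around speaker changes. The function names are hypothetical, it brute-forces the speaker mapping (real scorers use the Hungarian algorithm), and it is not the benchmark's actual scoring code.

```python
from itertools import permutations
from typing import Dict, List, Optional, Tuple

Label = Optional[str]  # a speaker label, or None for non-speech


def best_mapping(ref: List[Label], hyp: List[Label]) -> Dict[str, Optional[str]]:
    """Map each hypothesis speaker to at most one reference speaker,
    maximizing the number of frames where the mapped labels agree."""
    ref_spk = sorted({r for r in ref if r is not None})
    hyp_spk = sorted({h for h in hyp if h is not None})
    # Pad with None so a hypothesis speaker may stay unmapped.
    candidates: List[Optional[str]] = ref_spk + [None] * len(hyp_spk)
    best: Dict[str, Optional[str]] = {}
    best_score = -1
    for perm in permutations(candidates, len(hyp_spk)):
        mapping = dict(zip(hyp_spk, perm))
        score = sum(1 for r, h in zip(ref, hyp)
                    if r is not None and h is not None and mapping[h] == r)
        if score > best_score:
            best, best_score = mapping, score
    return best


def frame_der(ref: List[Label], hyp: List[Label]) -> Tuple[float, Dict[str, int]]:
    """Frame-level DER = (missed + false alarm + confusion) / reference speech frames."""
    if len(ref) != len(hyp):
        raise ValueError("reference and hypothesis must have the same number of frames")
    mapping = best_mapping(ref, hyp)
    miss = false_alarm = confusion = total = 0
    for r, h in zip(ref, hyp):
        if r is not None:
            total += 1
            if h is None:
                miss += 1          # speech the engine did not detect
            elif mapping[h] != r:
                confusion += 1     # speech attributed to the wrong speaker
        elif h is not None:
            false_alarm += 1       # engine reported speech where there is none
    if total == 0:
        raise ValueError("reference contains no speech")
    parts = {"miss": miss, "false_alarm": false_alarm, "confusion": confusion}
    return (miss + false_alarm + confusion) / total, parts


if __name__ == "__main__":
    ref = ["A", "A", "A", "B", "B", None, None, "B"]
    hyp = ["s1", "s1", "s2", "s2", "s2", "s2", None, None]
    der, parts = frame_der(ref, hyp)
    print(f"DER = {der:.2f}", parts)
```

In the example, the best mapping is `s1 -> A` and `s2 -> B`, leaving one confused, one falsely detected, and one missed frame out of six reference speech frames, so DER is 0.5. Under this metric, a "5x more accurate" engine is one whose error rate is a fifth of the other's on the same data.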