In today's technological landscape, large language models (LLMs) have become integral components of modern software, and demand for them continues to grow. Cloud-based LLMs dominate this space, with companies like OpenAI leading the charge. OpenAI's ChatGPT, especially with its new GPT-4o model, has set the standard for conversational AI and has been widely adopted by the public. Since ChatGPT arrived, nearly every tech giant has released a generative AI model to use alongside its products (e.g., Google's Gemini, Meta's Llama 3, and Microsoft's Copilot). Despite their widespread use and impressive capabilities, cloud-based LLMs have notable downsides, including data privacy concerns, network latency, and the recurring costs of API usage.

The Power of Local LLMs

Local LLMs, AI models that run directly on consumer devices, are gaining traction as viable alternatives to cloud-based providers. This approach addresses the privacy, latency, and cost concerns of cloud-based providers, but it introduces new obstacles: running LLMs locally requires substantial computational resources and expertise in model optimization and deployment. Additionally, local models may not match the accuracy of their cloud-based counterparts, because compressing a model to fit on consumer hardware costs some accuracy. Llama.cpp, a popular open-source framework for local LLMs, has become the de facto solution in this space. It has done wonders for enthusiastic hobbyists, but developers and enterprises have not fully embraced it because of its complex setup, significant trade-offs between model size and accuracy, and limited platform support.

picoLLM aims to address the shortcomings of both its cloud-based and local predecessors with its novel x-bit LLM quantization and its cross-platform local LLM inference engine. Offering hyper-compressed versions of Llama 3, Gemma, Phi-2, Mixtral, and Mistral, picoLLM enables developers to deploy these popular open-weight models on nearly any consumer device. Now, let's see what it takes to run a local LLM on a basic Windows machine!

The picoLLM Inference Engine is a cross-platform library that supports Windows, macOS, Linux, Raspberry Pi, Android, iOS, and web browsers. picoLLM provides SDKs for Python, Node.js, Android, iOS, and JavaScript.

The following tutorial will walk you through all the steps required to run a local LLM on even a modest Windows machine.

Setup

- Install Python.
- Install the picollm package using pip: `pip install picollm`
- Go to Picovoice Console, retrieve your AccessKey, and choose a picoLLM model file (.pllm) to download.
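Rather than pasting the AccessKey and model path into every script, you may prefer to keep them in environment variables. The sketch below assumes two variable names of our own choosing, PICOVOICE_ACCESS_KEY and PICOLLM_MODEL_PATH (hypothetical; picoLLM does not read them itself), and only checks that both are set and that the path points to an existing .pllm file.

```python
from collections.abc import Mapping
import os
from pathlib import Path

# Hypothetical variable names chosen for this sketch; picoLLM does not read them.
ACCESS_KEY_VAR = "PICOVOICE_ACCESS_KEY"
MODEL_PATH_VAR = "PICOLLM_MODEL_PATH"


def load_settings(env: Mapping[str, str] = os.environ) -> tuple[str, Path]:
    """Return (access_key, model_path) read from environment variables."""
    access_key = env.get(ACCESS_KEY_VAR, "").strip()
    if not access_key:
        raise RuntimeError(f"Set {ACCESS_KEY_VAR} to your Picovoice Console AccessKey.")

    raw_path = env.get(MODEL_PATH_VAR, "").strip()
    if not raw_path.lower().endswith(".pllm"):
        raise RuntimeError(f"Set {MODEL_PATH_VAR} to the path of a .pllm model file.")

    model_path = Path(raw_path)
    if not model_path.is_file():
        raise RuntimeError(f"Model file not found: {model_path}")
    return access_key, model_path
```

In a Windows Command Prompt, the variables can be set for the current session with `set PICOVOICE_ACCESS_KEY=...` and `set PICOLLM_MODEL_PATH=...` before running the script.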

Writing a Simple LLM Script in Python

To get started, create a new Python script and open it in the editor of your choice. Import the picollm package and initialize the inference engine with your AccessKey and the path to the .pllm model file you downloaded.
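A minimal sketch of the initialization step follows. It relies on picollm.create(access_key=..., model_path=...), which is the engine's factory function as we understand the picoLLM Python API. The create_engine wrapper and its file check are our own additions, and the import is deferred only so that a missing model file is reported clearly even before the package is installed.

```python
from pathlib import Path


def create_engine(access_key: str, model_path: str):
    """Create a picoLLM inference engine from an AccessKey and a .pllm file."""
    path = Path(model_path)
    # Fail early with a readable message if the model file is missing.
    if not path.is_file():
        raise FileNotFoundError(f"picoLLM model file not found: {path}")

    # Deferred import: the path check above works even without picollm installed.
    import picollm

    return picollm.create(access_key=access_key, model_path=str(path))
```

A call would look like `pllm = create_engine("${ACCESS_KEY}", r"C:\models\example.pllm")`, where both arguments are placeholders for your own AccessKey and model path.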

With the inference engine initialized, we can call its generate method to produce a completion for a prompt and print the result to the console.
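The sketch below wraps that call and also measures how long it takes, which helps gauge performance on a modest machine. It assumes, based on our reading of the Python API, that generate() takes the prompt as its first argument and returns an object whose completion attribute holds the generated text; the helper name timed_completion is our own.

```python
import time


def timed_completion(pllm, prompt: str) -> tuple[str, float]:
    """Return the completion text for `prompt` and the seconds it took."""
    start = time.perf_counter()
    # generate() blocks until the whole completion is ready.
    res = pllm.generate(prompt)
    elapsed = time.perf_counter() - start
    return res.completion, elapsed
```

For example, `text, seconds = timed_completion(pllm, "Give me a recipe for blueberry muffins.")` followed by `print(text)` and `print(f"[{seconds:.1f} s]")` prints the completion and the generation time.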

Generating the full completion takes a few seconds. To stream the text as it is produced instead, pass a callback function through the stream_callback parameter.
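Here is a sketch of streaming, assuming stream_callback is called with each newly generated piece of text as a string. The helper writes every piece as soon as it arrives and returns the final completion text; the out parameter is our own addition so the output can be redirected, for example to a file.

```python
import sys
from typing import TextIO


def stream_completion(pllm, prompt: str, out: TextIO = sys.stdout) -> str:
    """Write the completion to `out` piece by piece as it is generated."""

    def on_text(text: str) -> None:
        # Invoked by the engine with each newly generated piece of text.
        out.write(text)
        out.flush()  # show the text immediately instead of buffering it

    res = pllm.generate(prompt, stream_callback=on_text)
    out.write("\n")  # end the streamed line once generation finishes
    return res.completion
```

Flushing after every piece matters in a console: without it, Python may buffer the output and the text would appear in bursts rather than as it is generated.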

Once we're done with the engine, we need to explicitly release it by calling its release method, which frees the resources it holds.
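To make sure release() is called even when generation raises an exception, a small context manager can wrap the engine. released_after_use is our own helper built on the standard library, not part of picollm.

```python
from contextlib import contextmanager
from typing import Iterator


@contextmanager
def released_after_use(pllm) -> Iterator:
    """Yield the engine, then always call its release() method."""
    try:
        yield pllm
    finally:
        # Runs on normal exit and when the body raises, so the engine is always freed.
        pllm.release()
```

Usage is a regular with statement, e.g. `with released_after_use(pllm) as engine: print(engine.generate("Hello").completion)`.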

For additional optional generation parameters and the chat dialog templates available for different models, see the picoLLM Python API documentation.
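As a sketch of how a dialog template might carry a conversation across turns, the helper below records each message and reply. It assumes, based on our reading of the picoLLM Python API, that pllm.get_dialog() returns a dialog object with add_human_request(), add_llm_response(), and prompt() methods, and that generate() accepts completion_token_limit and temperature keyword arguments; check the API documentation for the exact names and defaults. The default values below are illustrative, not recommendations.

```python
def chat_turn(pllm, dialog, user_text: str,
              completion_token_limit: int = 256,
              temperature: float = 0.7) -> str:
    """Send one user message through a dialog template and record the reply."""
    # Append the user's message to the conversation history.
    dialog.add_human_request(user_text)
    # prompt() renders the whole history in the model's chat template.
    res = pllm.generate(
        dialog.prompt(),
        completion_token_limit=completion_token_limit,
        temperature=temperature,
    )
    # Store the reply so the next turn includes it as context.
    dialog.add_llm_response(res.completion)
    return res.completion
```

With `dialog = pllm.get_dialog()`, calling `chat_turn(pllm, dialog, "Suggest a name for a bakery.")` and then `chat_turn(pllm, dialog, "Make it shorter.")` lets the second request refer back to the first answer.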

Now Do It in Node.js!

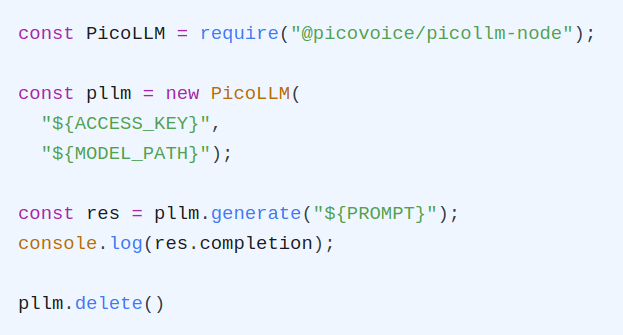

Let's see what the same code looks like in Node.js:

The Node.js API documentation details additional generation options, as well as chat templates for holding back-and-forth conversations with certain models.

Additional Resources

Several demos are available in the picoLLM GitHub repository.

For both Python and Node.js, two demos are available: completion and chat. The completion demo accepts a prompt and a set of optional parameters and generates a single completion; it can run all models, whether or not they are instruction-tuned. The chat demo runs instruction-tuned (chat) models and enables a back-and-forth conversation with the LLM, similar to ChatGPT.
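To illustrate what a completion-style command-line tool involves, here is a minimal sketch in the spirit of the completion demo. It is not the demo's actual code: the flag names are our own choices, and it assumes the same picollm.create(), generate(), and release() calls described earlier, with completion_token_limit as an optional generate() keyword.

```python
import argparse
from typing import Optional, Sequence


def build_parser() -> argparse.ArgumentParser:
    """Command-line options for a one-shot completion (flag names are our own)."""
    parser = argparse.ArgumentParser(
        description="Generate a single completion with a local picoLLM model.")
    parser.add_argument("--access_key", required=True, help="AccessKey from Picovoice Console")
    parser.add_argument("--model_path", required=True, help="path to a .pllm model file")
    parser.add_argument("--prompt", required=True, help="text for the model to complete")
    parser.add_argument("--completion_token_limit", type=int, default=None,
                        help="optional maximum number of tokens to generate")
    return parser


def main(argv: Optional[Sequence[str]] = None) -> None:
    args = build_parser().parse_args(argv)

    import picollm  # deferred so the parser can be used without the package installed

    pllm = picollm.create(access_key=args.access_key, model_path=args.model_path)
    try:
        # Only pass the optional limit when the user supplied one.
        options = {}
        if args.completion_token_limit is not None:
            options["completion_token_limit"] = args.completion_token_limit
        res = pllm.generate(args.prompt, **options)
        print(res.completion)
    finally:
        pllm.release()  # free the engine even if generation fails
```

Saved as, say, complete.py (a hypothetical name) with an `if __name__ == "__main__": main()` guard at the bottom, it could be run as `python complete.py --access_key ... --model_path ... --prompt "..."`.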