Raspberry Pis are small, affordable, and versatile computers used for various projects, including automotive and smart devices. However, the limited resources of a Raspberry Pi pose a challenge when developing AI assistants that rely on large language models (LLMs).

There are two primary approaches to building an AI assistant on a Raspberry Pi: cloud-based and on-device solutions.

Cloud-based solutions use remote servers for processing, raising privacy concerns due to data transmission and storage.

On-device solutions run the AI assistant directly on the device, addressing privacy but demanding significant computational resources.

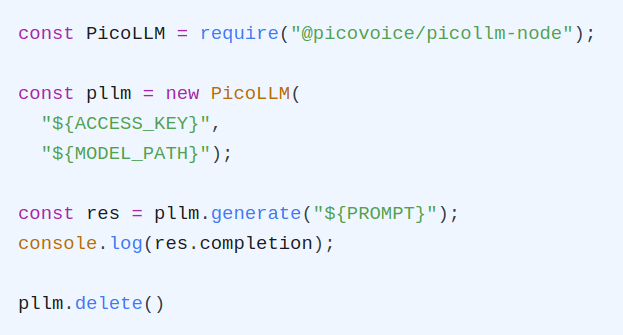

Picovoice's picoLLM addresses the challenges faced by on-device AI assistants. With its quantization algorithm, developers can run a variety of LLMs directly on devices such as the Raspberry Pi. The picoLLM quantization algorithm yields a smaller model, lower memory usage, and faster inference, all while maintaining accuracy; more on this later.

Setup

To set up your offline AI assistant, install the picollmdemo package on your device using the following command:

    pip3 install picollmdemo

This package includes two demos: picollm_demo_completion (for single-response tasks) and picollm_demo_chat (for interactive conversations). We will be using the picollm_demo_chat demo to create our offline assistant.

Running the Demo

To see all the available options for the demo and customize the text generation process to your liking, run this command:

    picollm_demo_chat --help

To start the demo with the simplest configuration, you'll need the following:

- Your Picovoice Access Key (--access_key $ACCESS_KEY): Obtain your key from the Picovoice Console.
- The Path to a Model (--model_path $MODEL_PATH): Download the model file (we recommend the phi-2 model with a 2.90 bit-rate) from the Picovoice Console and provide its absolute path.
- Device Specification (--device $DEVICE): Since we're using a Raspberry Pi with a 4-core CPU, we'll specify this device for optimal performance.

Once you have these details, run the following command to start the demo:

    picollm_demo_chat --access_key $ACCESS_KEY --model_path $MODEL_PATH --device $DEVICE
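If you would rather launch the chat demo from a script, the three options listed above can be assembled in Python. This is a minimal sketch, not part of the demo package: the helper names, the placeholder key, and the model path are hypothetical, and the device string cpu:4 is an assumption about how a 4-core CPU is specified, so check the demo's help output for the accepted values.

```python
import os
import shlex
import subprocess


def build_chat_command(access_key, model_path, device):
    """Return the argument list for the picollm_demo_chat command."""
    # The article asks for an absolute model path, so reject relative ones early.
    if not os.path.isabs(model_path):
        raise ValueError("model_path must be an absolute path")
    return [
        "picollm_demo_chat",
        "--access_key", access_key,
        "--model_path", model_path,
        "--device", device,
    ]


def run_chat_demo(access_key, model_path, device):
    """Launch the demo and block until the chat session ends."""
    command = build_chat_command(access_key, model_path, device)
    return subprocess.run(command, check=True)


# Hypothetical values for illustration only.
command = build_chat_command(
    "YOUR_ACCESS_KEY",                # placeholder, not a real key
    "/home/pi/models/phi2-290.pllm",  # hypothetical file location
    "cpu:4",                          # assumed device string for 4 CPU cores
)
print(shlex.join(command))
```

Printing the command first is a cheap way to confirm the arguments before calling run_chat_demo, which needs the demo package installed and a valid key.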

The following video showcases the chat demo in action:

Why Use picoLLM?

Let's review the accuracy metrics for two popular small LLMs in the community, Phi-2 and Gemma, to gain a better understanding. The figure below illustrates the trade-off between model size and accuracy when using the picoLLM compression method compared to GPTQ. It demonstrates that the picoLLM compression algorithm can significantly reduce model size while preserving a reasonable level of accuracy relative to the original models.
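To get a feel for the size reduction, here is a rough back-of-the-envelope estimate for the phi-2 model at the 2.90 bit-rate recommended earlier. It assumes the bit-rate is the average number of bits stored per weight, that the original weights are 16-bit floats, and that Phi-2 has about 2.7 billion parameters; it ignores file metadata and any tensors kept at higher precision, so real file sizes will differ somewhat.

```python
def approx_model_size_gb(num_parameters, bits_per_parameter):
    """Estimate weight storage in gigabytes (1 GB = 1e9 bytes)."""
    total_bits = num_parameters * bits_per_parameter
    return total_bits / 8 / 1e9


PHI2_PARAMETERS = 2.7e9  # approximate parameter count of Phi-2

fp16_size = approx_model_size_gb(PHI2_PARAMETERS, 16)          # assumed original precision
compressed_size = approx_model_size_gb(PHI2_PARAMETERS, 2.90)  # bit-rate from the article

print(f"float16:  {fp16_size:.2f} GB")        # float16:  5.40 GB
print(f"2.90-bit: {compressed_size:.2f} GB")  # 2.90-bit: 0.98 GB
print(f"ratio:    {fp16_size / compressed_size:.1f}x")  # ratio:    5.5x
```

Under these assumptions the compressed weights take roughly 1 GB, which is what makes the model practical within a Raspberry Pi's memory.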

Regarding speed, Picovoice provides multiple quantization levels for each model to strike a balance between model size, accuracy, and speed. Paired with the picoLLM inference engine, developers can select the optimal quantization level for their specific use case. For example, the following figure demonstrates the relationship between model size and speed for both Phi-2 and Gemma on a Raspberry Pi 5.

Next Steps

To learn more about the picoLLM inference engine Python SDK and how to integrate it into your projects, refer to the picoLLM Python documentation.
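As a starting point, a chat loop built on the Python SDK might look like the sketch below. It reflects my understanding of the SDK's interface (picollm.create, get_dialog, generate, release); the helper names ask and chat_loop are hypothetical, and the exact signatures should be verified against the picoLLM Python documentation before relying on them.

```python
def ask(pllm, dialog, user_message, token_limit=256):
    """Send one user turn and return the model's reply.

    The dialog object keeps the conversation history and formats it
    into the prompt template the chat model expects.
    """
    dialog.add_human_request(user_message)
    result = pllm.generate(
        prompt=dialog.prompt(),
        completion_token_limit=token_limit,  # cap reply length to keep latency down
    )
    dialog.add_llm_response(result.completion)
    return result.completion


def chat_loop(access_key, model_path, device):
    """Interactive loop; an empty input line ends the session."""
    import picollm  # imported here so ask() can be reused without the SDK installed

    pllm = picollm.create(
        access_key=access_key,
        model_path=model_path,
        device=device,
    )
    try:
        dialog = pllm.get_dialog()
        while True:
            message = input("> ")
            if not message:
                break
            print(ask(pllm, dialog, message))
    finally:
        # Free the native resources held by the engine.
        pllm.release()
```

Calling chat_loop with the same access key, model path, and device used for the demo should give a similar conversation, with the advantage that the reply text is available to the rest of your program.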