A Language Detection model identifies the language(s) in a given text or spoken utterance. This article discusses the latter, i.e., Spoken Language Detection.

Language Detection and Language Identification are different terms for the same technology.

Language Detection Use Cases

Polyglot Voice Interfaces that interact in multiple languages are a compelling use case: think of a vending machine in an international airport that understands many languages. Code-switching for Automatic Speech Recognition (ASR) is another. Call centres have to keep a record of conversations; in a bilingual (e.g., Canada) or multilingual (e.g., Switzerland) region, Language Detection can identify the language of each conversation and route it to the correct speech-to-text engine. Finally, one might want to identify the language in order to perform Voice Analytics.

Building a Language Detection Model

Data

First, you need data: lots of multilingual data. Where can you get it? Mozilla Common Voice (CV) and Multilingual LibriSpeech (MLS) are great starting points. Handle this step carefully. CV and MLS are unbalanced (skewed) in language, gender, and age, so depending on the target application, you need to sample the data nonuniformly to keep the model from favouring the over-represented groups.
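One common way to sample nonuniformly is exponent (temperature) sampling: draw each language with probability proportional to n^α, where n is its clip count. The sketch below is an illustration only, not the procedure behind any particular Picovoice model; the helper name `language_sampling_weights` and the default α = 0.5 are assumptions. It produces per-clip weights that can be passed to Python's `random.choices`.

```python
import random
from collections import Counter
from typing import Dict, List


def language_sampling_weights(langs: List[str], alpha: float = 0.5) -> Dict[str, float]:
    """Return a per-clip sampling weight for each language label.

    Hypothetical helper. The probability mass given to language l is
    proportional to n_l ** alpha, where n_l is its number of clips:
    alpha = 1 keeps the original (skewed) distribution, alpha = 0 gives
    every language the same share.
    """
    counts = Counter(langs)
    mass = {lang: n ** alpha for lang, n in counts.items()}
    total = sum(mass.values())
    # Spread each language's share evenly over its clips.
    return {lang: (mass[lang] / total) / counts[lang] for lang in counts}


if __name__ == "__main__":
    # Toy labels: 90 English clips and 10 French clips.
    langs = ["en"] * 90 + ["fr"] * 10
    w = language_sampling_weights(langs, alpha=0.0)
    # Indices of 32 clips drawn with replacement; each language gets about half.
    batch = random.choices(range(len(langs)), weights=[w[l] for l in langs], k=32)
    print(batch)
```

With α between 0 and 1, low-resource languages are upsampled without drowning out the larger ones. The same idea extends to gender and age by keying the counts on a tuple such as `(language, gender)`, assuming that metadata is available for each clip.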

Model

Work backwards from the requirements. Do you need real-time results? The answer constrains your choice of model. If real-time processing is a must, a unidirectional RNN or one of its more sophisticated variants (GRU, LSTM, SRU, etc.) is a feasible choice. Otherwise, you can achieve higher accuracy by adapting a bidirectional LSTM (BLSTM) or a Transformer (e.g., Conformer). Keep in mind that real-time doesn't mean zero latency; it means a guaranteed (roughly constant) latency. Hence, you can adapt offline models like BLSTM to process audio in chunks. Latency-controlled BLSTM (LC-BLSTM) is an example of this approach and is popular in streaming speech-to-text.
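LC-BLSTM-style models bound latency by splitting the input into fixed-size chunks, each processed together with a small number of future (right-context) frames. The sketch below shows only that chunking and the resulting buffering delay; the network itself is omitted. The names `lc_chunks` and `buffered_ms`, and the 10 ms frame hop, are illustrative assumptions.

```python
from typing import List, Sequence, Tuple


def lc_chunks(frames: Sequence, chunk: int, right: int) -> List[Tuple[Sequence, Sequence]]:
    """Split a frame sequence into (main chunk, right context) pairs.

    Hypothetical helper. In an LC-BLSTM, the forward state is carried from one
    chunk to the next, while the backward pass runs over the main chunk plus its
    right context; outputs are emitted only for the main-chunk frames.
    """
    pairs = []
    for start in range(0, len(frames), chunk):
        main = frames[start:start + chunk]
        context = frames[start + chunk:start + chunk + right]
        pairs.append((main, context))
    return pairs


def buffered_ms(chunk: int, right: int, frame_ms: float = 10.0) -> float:
    """Audio that must arrive before a chunk can be processed (compute time excluded)."""
    return (chunk + right) * frame_ms


if __name__ == "__main__":
    frames = list(range(10))  # stand-ins for 10 feature frames
    for main, context in lc_chunks(frames, chunk=4, right=2):
        print(main, context)
    print(buffered_ms(chunk=40, right=20))  # 600.0 ms with a 10 ms hop
```

Unlike a full BLSTM, which must wait for the end of the utterance, the delay here depends only on `chunk` and `right`, not on the utterance length.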

Loss

Do you need to detect multiple languages within the same utterance? For example, in India it is common to mix Hindi and English. If yes, at what granularity: seconds or milliseconds? Do you need only a hard decision, or a confidence score as well? The loss can be as simple as cross-entropy over a softmax output, but it can get complex fast depending on the requirements.
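In the simplest case, with one language label per utterance, the loss is the cross-entropy of a softmax over per-language logits. When labels are needed at a finer granularity (e.g., for mixed Hindi and English speech), the same loss can be averaged over per-frame predictions. The stdlib-only sketch below illustrates both; the function names and the toy logits are hypothetical, and a real model would produce the logits with a neural network in a deep-learning framework.

```python
import math
from typing import List, Sequence


def log_sum_exp(logits: Sequence[float]) -> float:
    m = max(logits)  # subtract the max for numerical stability
    return m + math.log(sum(math.exp(x - m) for x in logits))


def softmax(logits: Sequence[float]) -> List[float]:
    """Per-language probabilities; the largest can serve as a confidence score."""
    lse = log_sum_exp(logits)
    return [math.exp(x - lse) for x in logits]


def cross_entropy(logits: Sequence[float], target: int) -> float:
    """Negative log-probability of the target language index under softmax(logits)."""
    return log_sum_exp(logits) - logits[target]


def frame_level_loss(frame_logits: Sequence[Sequence[float]], frame_targets: Sequence[int]) -> float:
    """Mean cross-entropy over frames, each frame having its own language label."""
    losses = [cross_entropy(lg, t) for lg, t in zip(frame_logits, frame_targets)]
    return sum(losses) / len(losses)


if __name__ == "__main__":
    # Hypothetical logits for two languages: index 0 = Hindi, 1 = English.
    utterance = [2.0, 0.5]
    probs = softmax(utterance)
    print(max(probs), cross_entropy(utterance, target=0))
    # Code-switched clip: three frames labelled Hindi, Hindi, English.
    frames = [[2.0, 0.5], [1.5, 1.0], [0.2, 1.8]]
    print(frame_level_loss(frames, [0, 0, 1]))
```

The softmax probability is not guaranteed to be calibrated; if the confidence score drives downstream decisions, it may need calibration on held-out data.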

Besides code-switching (using multiple languages in the same conversation), communication style (acronyms and slang) and input diversity are other major challenges in language detection.

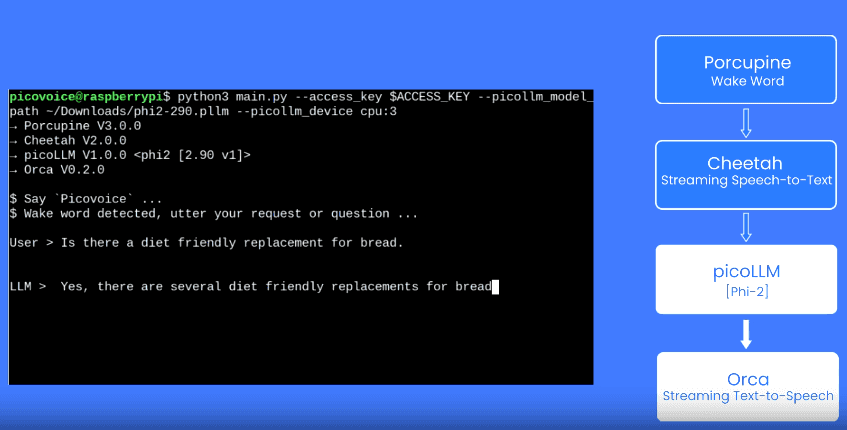

Experts at Picovoice work with Enterprise customers to create private AI algorithms, such as Sound Detection or Speech Emotion Detection, specific to their use cases.
