Speech recognition is becoming essential in modern applications, whether it's voice-controlled appliances or hands-free voice assistants. Wake word detection, voice command understanding, voice activity detection, and transcription (both real-time and batch) are distinct speech recognition capabilities, each with different engines, trade-offs, and use cases.

This guide breaks down each speech recognition approach, compares the available Python options, and walks through implementation using Picovoice's on-device voice AI stack, covering everything from a single wake word detector to a full voice assistant pipeline.

Cloud vs On-Device Speech Recognition

If you're building for enterprise, embedded, or regulated environments, on-device speech recognition is often the safer architectural choice. In comparison to cloud-based speech recognition, on-device speech recognition offers:

- Compliance & Audio Data Privacy: Audio never leaves the device, simplifying HIPAA, GDPR, and CCPA compliance.

- Speed: Runs with zero network latency to provide faster response times.

- Consistent Performance: Works reliably and consistently in poor connectivity.

Learn more about why and when you should choose voice AI on the edge over the cloud, and facts about on-device speech recognition with cloud quality.

Understanding Types of Speech Recognition Models

Speech recognition can refer to different technologies:

- To detect a specific phrase or enable voice activation: Use Wake Word Detection

- To detect when someone is speaking: Use Voice Activity Detection

- To understand voice commands and extract intent: Use Speech-to-Intent

- To get real-time speech-to-text: Use Streaming Speech-to-Text

- To get batch transcription of audio files: Use Batch Speech-to-Text

Wake Word Detection for Voice Activation

Wake word detection listens continuously for a trigger phrase ("Hey Siri", "Ok Google") and returns a detection event to activate the application. It is widely used in smart devices, automotive systems, kiosks, and industrial equipment to activate voice interactions without continuous recording. On-device wake word detection ensures instant responsiveness while preserving user privacy.

Wake Word Detection Options for Developers: Picovoice Porcupine Wake Word, Snowboy, TensorFlow Lite models

Learn more about voice activation and hotword detection, and see the complete guide to wake word detection for a deeper understanding.

Voice Activity Detection for Recognizing Human Speech

Voice Activity Detection (VAD) determines whether audio contains human speech. It's used to trigger recording, filter silence, and optimize voice processing pipelines by only sending speech segments to downstream engines.

VAD Alternatives for Developers: WebRTC VAD, Silero VAD, Picovoice Cobra Voice Activity Detection

Learn more about how voice activity detection works and see the complete guide to voice activity detection for a deeper understanding.

Understanding Voice Commands with Intent Recognition

Speech-to-Intent recognition is used in industrial control systems, smart appliances, and voice-driven workflows where understanding user intent matters more than generating a raw transcript. By mapping spoken commands directly to structured intents and parameters, applications can execute actions reliably without relying on large language models. Other Spoken Language Understanding (SLU) systems use Automatic Speech Recognition with Natural Language Understanding (NLU) to first get the text output before extracting user intent from it.

Intent Recognition Options for Developers: Amazon Lex, Google Dialogflow, IBM Watson, Microsoft Bot Framework, Picovoice Rhino Speech-to-Intent

Check the differences between conventional and modern approaches to spoken language understanding and learn more about end-to-end intent inference from speech.

Live Transcriptions with Streaming Speech-to-Text

Streaming speech-to-text is commonly used for real-time transcription in voice assistants, meeting summarization tools, and real-time captions to provide text output immediately as the user is speaking. Processing speech on-device enables predictable performance and avoids sending sensitive conversations to external servers.

Streaming Speech-to-Text Options for Developers: Amazon Transcribe Streaming, Google Speech-to-Text Streaming, Azure STT Real-time, Picovoice Cheetah Streaming Speech-to-Text

Batch Speech-to-Text for Audio File Processing

Batch speech-to-text processes a complete audio file and returns the full transcript. It is often used in medical record processing, compliance-driven archiving, and audio analysis where accuracy is critical. Running transcription locally allows organizations to process large audio files without privacy concerns.

Batch Transcription Options for Developers: GCP Speech-to-Text, OpenAI Whisper, Azure Speech, Amazon Transcribe, Picovoice Leopard Speech-to-Text

Python for Speech Recognition: Strengths and Limitations

Python is the most widely used language for AI/ML development. Its rich ecosystem and cross-platform support make it ideal for voice AI prototyping and production.

Advantages of Python for Voice AI

- Cross-platform support: Python applications can run on macOS, Linux, and Windows with minimal changes, which is useful for developing and testing voice applications across environments.

- Pipeline integration: Python voice recognition plugs directly into existing data pipelines, ML workflows, and backend services without language switching.

- Prototyping speed: Test a wake word detector or transcription engine in a 10-line script before committing to a production architecture.

- Dependency management: Install several engines with a single pip install.

Challenges of Python for Voice AI

- The Global Interpreter Lock (GIL): Python's GIL can limit true parallel execution in CPU-bound tasks, which may affect performance in multi-stream or concurrent audio processing scenarios.

- Performance and Latency: Python is an interpreted language and generally slower than compiled languages like C or Rust. For real-time speech recognition, low-latency wake-word detection, or high-throughput audio streaming, this can become a bottleneck.

- Edge hardware support: Running Python on microcontrollers or highly resource-constrained devices can be slower due to memory usage, startup time, and runtime overhead.

The Current State of Python Speech Recognition in 2026

By 2026, Python is one of the dominant orchestration languages for speech recognition systems. The ecosystem offers multiple solutions across different voice AI capabilities: speech-to-text, wake word detection, intent recognition, and voice activity detection.

Wake Word Detection

Commercial Options:

- Picovoice Porcupine - Enterprise solution, robust to noise and ready to deploy in seconds across platforms

- Sensory TrulyHandsfree - Enterprise solution with a long track record

- SoundHound Houndify - Enterprise solution offering two wake word tiers: proof-of-concept (low-cost version, delivered in weeks) and production-grade

Open Source Options:

- openWakeWord - Actively maintained open-source solution with good accuracy; supports custom wake word training; some familiarity with audio or ML workflows needed for best results.

- Snowboy (deprecated) - No longer maintained

- PocketSphinx - Legacy CMU solution

Compare wake word detection accuracy and resource usage in the wake word detection benchmark.

Voice Activity Detection

Commercial Options:

- Picovoice Cobra - Enterprise production-ready, cross-platform VAD combining deep learning accuracy with lightweight on-device performance

Open Source Options:

- Silero VAD - Deep learning-based VAD with good performance; requires PyTorch/ONNX dependency, primarily Python

- Ten VAD - Deep learning-based open-source VAD; newer project with a less mature ecosystem than established solutions

- pyannote.audio - Research-oriented deep learning VAD with strong community; built primarily for speaker diarization

- WebRTC VAD - Lightweight VAD based on traditional signal processing and statistical modeling; easy to integrate and well-documented, but less accurate in noisy environments

Compare voice activity detection performance benchmarks for Silero, WebRTC, and Cobra.

Intent Recognition

Commercial Options:

- Picovoice Rhino - Enterprise on-device speech-to-intent that maps voice directly to structured intents without intermediate transcription

- Amazon Lex - Enterprise cloud-based conversational AI with built-in STT + NLU pipeline

- Google Dialogflow - Enterprise cloud-based conversational AI with STT + NLU pipeline and multi-channel deployment support

- Microsoft Azure CLU - Enterprise cloud-based NLU for text-based intent classification within the Azure ecosystem

- IBM Watson - Enterprise cloud-based NLU with conversation flow builder and customer service focus

Open Source Options:

- Rasa NLU - Open-source text-to-intent framework; requires training data and infrastructure setup

Compare voice command acceptance rates for Dialogflow, Lex, Watson, and Rhino.

Speech-to-Text

Commercial Options:

- Picovoice Cheetah - Enterprise on-device streaming speech-to-text with low emission latency

- Picovoice Leopard - Enterprise on-device batch speech-to-text optimized for high accuracy, offering advanced speech-to-text features

- Amazon Transcribe - Enterprise cloud-based speech-to-text with streaming and batch modes, integrated with AWS ecosystem

- Google Speech-to-Text - Enterprise cloud-based speech-to-text with wide language coverage and streaming support

- Microsoft Azure Speech - Enterprise cloud-based speech-to-text with real-time and batch modes, custom model training available

- IBM Watson Speech to Text - Enterprise cloud-based speech-to-text with industry-specific model customization

Open Source Options:

- OpenAI Whisper - Open-source on-device STT with multiple model sizes (tiny to large)

- Vosk - Open-source on-device STT with lightweight models for edge deployment

- SpeechRecognition - Open-source Python wrapper for multiple STT backends

Compare batch speech-to-text accuracy and real-time transcription benchmarks for word accuracy, punctuation accuracy, and word emission latency.

Across these categories, the primary difference between solutions is where inference runs and how audio is handled. Systems that run locally process audio frames directly with no network round trips, resulting in lower and more predictable latency while keeping voice data within the host environment. Cloud-based systems require transmitting audio to remote servers, which introduces network latency and dependency on connectivity.

This guide uses Picovoice's on-device platform because it provides a complete voice AI stack (wake word + VAD + STT + intent recognition) in Python with no cloud dependencies, making it ideal for privacy-sensitive and low-latency applications.

Prerequisites

- Python 3.9+

- Microphone access (for real-time processing)

- Picovoice AccessKey (sign up for a free Picovoice Console account)

Choosing the Right Voice AI Solution for Python Apps

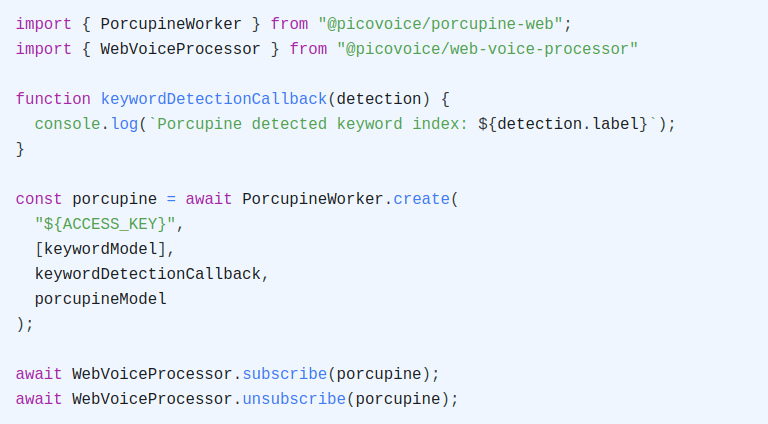

Implement Wake Word Detection for Python Applications

Ideal for: Always-on listening, hands-free activation for voice assistants or voice control pipelines

Use this when: You want your application to remain idle until a specific phrase is spoken. Wake word detection allows the system to listen continuously while minimizing downstream processing by activating only after a predefined trigger phrase (for example, “Hey Siri” or a custom keyword).

- Install the Porcupine Wake Word SDK and PvRecorder using PIP:

Train your custom wake word model using Picovoice Console.

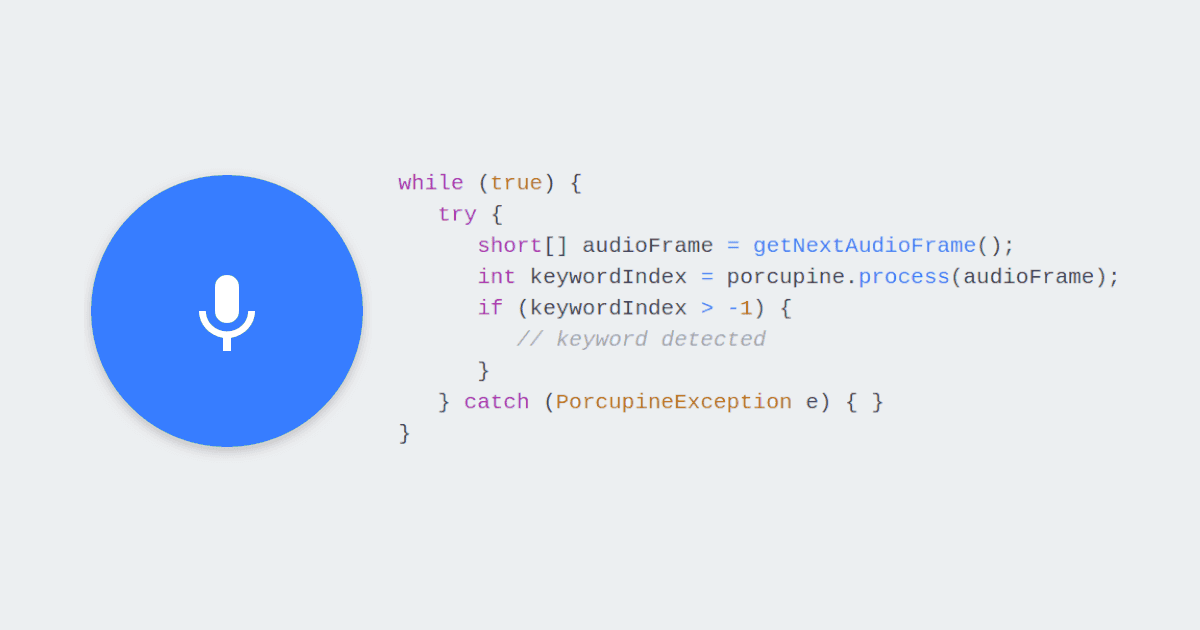

Create an instance of the Wake Word engine:

- Pass in frames of audio to the

.processmethod:

Full code to Add Wake Word Detection:
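The steps above can be sketched as follows. This is a minimal sketch, assuming the `pvporcupine` and `pvrecorder` packages are installed. `ACCESS_KEY` and `KEYWORD_PATH` are placeholders to replace with your own values, and the `wait_for_wake_word` helper and its `max_frames` limit are illustrative names, not part of the SDK.

```python
# Install first: pip install pvporcupine pvrecorder

ACCESS_KEY = "${ACCESS_KEY}"            # placeholder: AccessKey from Picovoice Console
KEYWORD_PATH = "${KEYWORD_FILE_PATH}"   # placeholder: .ppn file trained on Picovoice Console


def wait_for_wake_word(porcupine, recorder, max_frames=None):
    """Read audio frames until a keyword is detected.

    Returns the index of the detected keyword, or -1 if `max_frames`
    frames were read without a detection. `max_frames` is an
    illustrative safety limit, not an SDK parameter.
    """
    frames_read = 0
    while max_frames is None or frames_read < max_frames:
        pcm = recorder.read()                    # one frame of 16-bit PCM samples
        keyword_index = porcupine.process(pcm)   # -1 when nothing was detected
        if keyword_index >= 0:
            return keyword_index
        frames_read += 1
    return -1


def main():
    # Imported here so the helper above can be exercised without the SDKs.
    import pvporcupine
    from pvrecorder import PvRecorder

    porcupine = pvporcupine.create(access_key=ACCESS_KEY, keyword_paths=[KEYWORD_PATH])
    recorder = PvRecorder(frame_length=porcupine.frame_length)
    recorder.start()
    try:
        print("Listening for the wake word (Ctrl+C to exit)...")
        while True:
            index = wait_for_wake_word(porcupine, recorder)
            print(f"Detected keyword #{index}")
    except KeyboardInterrupt:
        pass
    finally:
        # Release the microphone and native engine resources.
        recorder.stop()
        recorder.delete()
        porcupine.delete()
```

Call `main()` to run against a live microphone. Keeping the detection loop in its own function makes it possible to test the control flow with stand-in objects before wiring up real audio.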

For more information, see the Porcupine Wake Word product page, the Python wake word detection guide, and Porcupine's Python SDK quick start guide.

Implement Voice Activity Detection for Python Applications

Ideal for: Detecting speech presence, filtering silence from recordings, optimizing voice processing

Use this when: You want to identify when speech is present without performing full transcription. Voice activity detection helps reduce unnecessary computation by forwarding only speech segments to downstream processing or triggering recording dynamically.

- Install the Cobra Voice Activity Detection SDK and PvRecorder using pip:

- Create an instance of the Voice Activity Detection engine:

- Pass in frames of audio to the .process method:

Full code to Add Voice Activity Detection:
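The steps above can be sketched as follows. This is a minimal sketch, assuming the `pvcobra` and `pvrecorder` packages are installed. `ACCESS_KEY` is a placeholder, and the `is_speech` helper and the 0.5 threshold are illustrative choices rather than SDK defaults.

```python
# Install first: pip install pvcobra pvrecorder

ACCESS_KEY = "${ACCESS_KEY}"   # placeholder: AccessKey from Picovoice Console
VOICE_THRESHOLD = 0.5          # illustrative value; tune for your environment


def is_speech(cobra, pcm, threshold=VOICE_THRESHOLD):
    """Return True when the voice probability for this frame meets the threshold.

    Cobra's process() returns a probability between 0.0 and 1.0.
    """
    return cobra.process(pcm) >= threshold


def main():
    # Imported here so the helper above can be exercised without the SDKs.
    import pvcobra
    from pvrecorder import PvRecorder

    cobra = pvcobra.create(access_key=ACCESS_KEY)
    recorder = PvRecorder(frame_length=cobra.frame_length)
    recorder.start()
    try:
        speaking = False
        print("Monitoring for speech (Ctrl+C to exit)...")
        while True:
            now_speaking = is_speech(cobra, recorder.read())
            # Report only transitions so the console is not flooded.
            if now_speaking != speaking:
                print("Speech started" if now_speaking else "Speech ended")
                speaking = now_speaking
    except KeyboardInterrupt:
        pass
    finally:
        recorder.stop()
        recorder.delete()
        cobra.delete()
```

Call `main()` to run against a live microphone. A single-frame threshold can flicker at word boundaries; requiring several consecutive frames before switching state is a common refinement.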

For more information, see Cobra Voice Activity Detection's product page and Cobra's Python SDK quick start guide.

Implementing Intent Recognition for Python Applications

Ideal for: Understanding voice commands, executing actions from spoken instructions in apps and devices

Use this when: Your application needs to understand what a user wants to do, not just what they said. Speech-to-intent processing maps spoken phrases directly to intents and parameters, making it well suited for short, structured commands like controlling devices or navigating application flows.

- Install the Rhino Speech-to-Intent SDK and PvRecorder using pip:

- Train your Context using Picovoice Console.

- Create an instance of Rhino Speech-to-Intent to start recognizing voice commands within the domain of the provided context:

- Pass in frames of audio to the .process function and use the .get_inference function to determine the user's intent:

Full code to Add Intent Recognition:
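The steps above can be sketched as follows. This is a minimal sketch, assuming the `pvrhino` and `pvrecorder` packages are installed. `ACCESS_KEY` and `CONTEXT_PATH` are placeholders, and `wait_for_intent` is an illustrative helper name, not part of the SDK.

```python
# Install first: pip install pvrhino pvrecorder

ACCESS_KEY = "${ACCESS_KEY}"           # placeholder: AccessKey from Picovoice Console
CONTEXT_PATH = "${CONTEXT_FILE_PATH}"  # placeholder: .rhn file trained on Picovoice Console


def wait_for_intent(rhino, recorder):
    """Feed frames to Rhino until it finalizes an inference.

    Returns (intent, slots) when the command was understood,
    or (None, {}) when it fell outside the context.
    """
    while True:
        is_finalized = rhino.process(recorder.read())
        if is_finalized:
            inference = rhino.get_inference()
            if inference.is_understood:
                return inference.intent, dict(inference.slots)
            return None, {}


def main():
    # Imported here so the helper above can be exercised without the SDKs.
    import pvrhino
    from pvrecorder import PvRecorder

    rhino = pvrhino.create(access_key=ACCESS_KEY, context_path=CONTEXT_PATH)
    recorder = PvRecorder(frame_length=rhino.frame_length)
    recorder.start()
    try:
        print("Say a command (Ctrl+C to exit)...")
        while True:
            intent, slots = wait_for_intent(rhino, recorder)
            if intent is None:
                print("Command not understood")
            else:
                print(f"Intent: {intent}, slots: {slots}")
    except KeyboardInterrupt:
        pass
    finally:
        recorder.stop()
        recorder.delete()
        rhino.delete()
```

Call `main()` to run against a live microphone. The intent and slot names returned depend entirely on the context you trained.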

For more information, see Rhino Speech-to-Intent's product page, the Rhino syntax cheat sheet, and Rhino's Python SDK quick start guide.

Implementing Real-time Transcription for Python Applications

Ideal for: Live captions, real-time speech input for voice assistants and AI agents

Use this when: You need transcription results while the user is still speaking. Streaming speech-to-text enables low-latency transcription for conversational interfaces, live note-taking, and applications that react immediately to spoken input.

- Install the Cheetah Streaming Speech-to-Text SDK and PvRecorder using pip:

- Create an instance of Cheetah to transcribe speech to text in real-time:

- Pass in audio frames as they become available to the .process function:

Full code to Add Real-time Transcription:
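The steps above can be sketched as follows. This is a minimal sketch, assuming the `pvcheetah` and `pvrecorder` packages are installed. `ACCESS_KEY` is a placeholder, and `transcribe_utterance` with its `on_partial` callback is an illustrative helper, not part of the SDK.

```python
# Install first: pip install pvcheetah pvrecorder

ACCESS_KEY = "${ACCESS_KEY}"   # placeholder: AccessKey from Picovoice Console


def transcribe_utterance(cheetah, recorder, on_partial=None):
    """Stream frames into Cheetah until it reports an endpoint.

    `on_partial`, if given, is called with each new piece of text as it
    arrives. Returns the full transcript of the utterance.
    """
    parts = []

    def emit(text):
        if text:
            parts.append(text)
            if on_partial is not None:
                on_partial(text)

    while True:
        partial_transcript, is_endpoint = cheetah.process(recorder.read())
        emit(partial_transcript)
        if is_endpoint:
            emit(cheetah.flush())   # flush() returns any text still buffered
            return "".join(parts)


def main():
    # Imported here so the helper above can be exercised without the SDKs.
    import pvcheetah
    from pvrecorder import PvRecorder

    cheetah = pvcheetah.create(access_key=ACCESS_KEY)
    recorder = PvRecorder(frame_length=cheetah.frame_length)
    recorder.start()
    try:
        print("Start speaking (Ctrl+C to exit)...")
        while True:
            # Print text as it arrives, then end the line at each endpoint.
            transcribe_utterance(
                cheetah, recorder,
                on_partial=lambda text: print(text, end="", flush=True))
            print()
    except KeyboardInterrupt:
        pass
    finally:
        recorder.stop()
        recorder.delete()
        cheetah.delete()
```

Call `main()` to run against a live microphone. The callback keeps display logic out of the transcription loop, so the same helper can feed a UI, a log, or a downstream agent.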

For more information, see Cheetah Streaming Speech-to-Text's product page and Cheetah's Python SDK quick start guide.

Implementing Batch Transcription for Python Applications

Ideal for: Audio file transcription, processing recorded content like meeting archives and podcasts

Use this when: You are processing pre-recorded audio rather than live speech. Batch transcription is optimized for accuracy and completeness, making it suitable for longer recordings.

- Install the Leopard Speech-to-Text SDK using pip:

- Create an instance of Leopard to transcribe speech to text:

- Pass in an audio file to Leopard and inspect the result:

Full code to Add Batch Transcription:
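The steps above can be sketched as follows. This is a minimal sketch, assuming the `pvleopard` package is installed. `ACCESS_KEY` and `AUDIO_PATH` are placeholders, and `format_words` is an illustrative helper for inspecting the per-word metadata, not part of the SDK.

```python
# Install first: pip install pvleopard

ACCESS_KEY = "${ACCESS_KEY}"       # placeholder: AccessKey from Picovoice Console
AUDIO_PATH = "${AUDIO_FILE_PATH}"  # placeholder: path to the recording to transcribe


def format_words(words):
    """Render word metadata as tab-separated lines: word, start, end, confidence."""
    return "\n".join(
        f"{w.word}\t{w.start_sec:.2f}\t{w.end_sec:.2f}\t{w.confidence:.2f}"
        for w in words
    )


def main():
    # Imported here so the helper above can be exercised without the SDK.
    import pvleopard

    leopard = pvleopard.create(access_key=ACCESS_KEY)
    try:
        # process_file returns the transcript plus per-word timing and confidence.
        transcript, words = leopard.process_file(AUDIO_PATH)
        print(transcript)
        print(format_words(words))
    finally:
        leopard.delete()
```

Call `main()` to transcribe the file. The word-level confidence values are useful for flagging low-confidence passages for human review.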

For more information, see Leopard Speech-to-Text's product page and Leopard's Python SDK quick start guide.

Building a Complete Voice Assistant

This example demonstrates how multiple engines work together in a complete voice pipeline:

Example command: "Hey assistant, write note, dentist appointment at 6PM tomorrow"

- Porcupine Wake Word detects "Hey assistant"

- Rhino Speech-to-Intent interprets the command "write note"

- Cheetah Streaming Speech-to-Text transcribes "dentist appointment at 6PM tomorrow"

With all components in place, the full flow looks like this:

- Porcupine Wake Word Detection listens for custom wake words, such as "Hey Siri" or "Hey Pico"

- Cobra Voice Activity Detection identifies when speech is present and filters silence

- Rhino Speech-to-Intent interprets the commands, such as "write note"

- Cheetah Streaming Speech-to-Text transcribes utterances in real time, such as "dentist appointment at 6PM tomorrow"

The app executes the recognized action or displays text. This stack runs fully on-device and requires no cloud connection, making it ideal for privacy-sensitive and low-latency applications.
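One way to chain the engines for the "write note" example is a three-stage loop over a shared audio stream. This is a minimal sketch under several assumptions: the intent name `writeNote` is hypothetical and depends on your Rhino context, all engines are assumed to accept the same frame length (verify each engine's `frame_length` before sharing one recorder), and Cobra is left out to keep the sketch short. The engine and recorder objects are created as in the earlier sections.

```python
DICTATION_INTENT = "writeNote"  # hypothetical intent name from your Rhino context


def handle_one_command(porcupine, rhino, cheetah, recorder,
                       dictation_intent=DICTATION_INTENT):
    """Run one wake word -> intent -> dictation cycle.

    Returns (intent, text). `intent` is None when the command was not
    understood; `text` is None unless the intent asked for dictation.
    """
    # Stage 1: stay idle until the wake word is heard.
    while porcupine.process(recorder.read()) < 0:
        pass

    # Stage 2: interpret the command that follows the wake word.
    while not rhino.process(recorder.read()):
        pass
    inference = rhino.get_inference()
    if not inference.is_understood:
        return None, None
    if inference.intent != dictation_intent:
        return inference.intent, None

    # Stage 3: transcribe free-form speech until the speaker pauses.
    text = ""
    while True:
        partial_transcript, is_endpoint = cheetah.process(recorder.read())
        text += partial_transcript
        if is_endpoint:
            return inference.intent, text + cheetah.flush()
```

Only one engine processes audio at any moment, which keeps CPU usage close to that of the active stage alone. In an application loop, call `handle_one_command` repeatedly and dispatch on the returned intent.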

Preparing a Python Voice Application for Production Deployment

Before deploying a speech-enabled Python application, it is important to validate performance, reliability, and user trust across real-world conditions. Review this checklist before releasing a voice-enabled Python application:

Shared Best Practices Across Platforms

- Always call .delete() when done with engines

- Test memory usage over extended sessions

- Implement graceful error handling for microphone access

- Document audio handling in your privacy policy
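The first item in this list is easy to miss on error paths. One way to make cleanup automatic is a small context manager. This is a minimal sketch: `managed` is an illustrative helper, not part of any Picovoice SDK, and it only assumes the wrapped object exposes a `.delete()` method.

```python
from contextlib import contextmanager


@contextmanager
def managed(engine):
    """Yield an engine and call its .delete() on exit, even after an exception."""
    try:
        yield engine
    finally:
        engine.delete()
```

Usage would look like `with managed(pvporcupine.create(...)) as porcupine:`, with several engines combined in a single `with` statement. Resources are then released in reverse order of creation whether the block exits normally or through an error such as a microphone failure.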

Desktop Environments (e.g., Linux, macOS, Windows)

- Handle OS-level microphone permissions

- Test with different audio input devices

- Package all required dependencies before deployment

Embedded (e.g., Raspberry Pi, Arduino)

- Monitor CPU and memory on constrained hardware

- Configure systemd services for always-on listening

- Test power consumption during typical sessions

- Verify performance with USB vs. onboard microphones

Server / Backend Pipelines

- Implement queue-based processing for concurrent requests

- Monitor disk I/O when processing large audio archives
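Queue-based processing can be sketched with the standard library alone. This is a minimal sketch, assuming `transcribe` is any callable that takes a file path and returns a result, for example a wrapper around a batch speech-to-text engine. `transcribe_all` and `num_workers` are illustrative names. Whether one engine instance may be shared across threads is not covered here, so the safer assumption is one engine per worker.

```python
import queue
import threading


def transcribe_all(paths, transcribe, num_workers=2):
    """Process files with a fixed pool of worker threads fed by a queue.

    Returns a dict mapping each path to its result, or to the exception
    raised while processing it, so one bad file does not stop the batch.
    """
    jobs = queue.Queue()
    results = {}
    lock = threading.Lock()

    def worker():
        while True:
            path = jobs.get()
            try:
                if path is None:        # sentinel: no more work for this worker
                    return
                try:
                    outcome = transcribe(path)
                except Exception as exc:
                    outcome = exc
                with lock:
                    results[path] = outcome
            finally:
                jobs.task_done()

    threads = [threading.Thread(target=worker, daemon=True)
               for _ in range(num_workers)]
    for thread in threads:
        thread.start()
    for path in paths:
        jobs.put(path)
    for _ in threads:
        jobs.put(None)                  # one sentinel per worker
    for thread in threads:
        thread.join()
    return results
```

A fixed worker count bounds CPU and memory use regardless of how many requests arrive. For CPU-bound Python code the GIL limits the benefit of threads, in which case separate processes are the usual alternative.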

Diagnosing Common Speech Recognition Issues in Python Apps

Speech recognition quality is often influenced by integration choices rather than model limitations. For complex deployments, Picovoice Professional Services can help with system design and optimization for Python applications.

Microphone Access Failures

- Confirm microphone permissions at the operating system level

- On Linux, verify the user belongs to the appropriate audio group

- On macOS, check System Settings > Privacy & Security > Microphone

Accuracy Degradation

- Test in a quiet environment first to determine whether background noise is the cause

- Add custom vocabulary or keyword boosting where relevant

High CPU or Memory Usage

- Don't use speech-to-text or cloud-based systems for wake word detection

- Use Rhino for voice commands rather than the STT+NLU combination when possible

- Stop engines when not needed

Common Python Voice AI Applications

Python-based, on-device voice AI is commonly used to build:

- Voice assistants, agents, and copilots

- Medical and healthcare applications

- Industrial and field-deployed IoT systems

- Accessibility and assistive technologies

- Transcription and summarization pipelines

- Raspberry Pi and other edge-focused projects

Resources

Documentation

- Porcupine Wake Word Python Quick Start

- Porcupine Wake Word Python API Documentation

- Rhino Speech-to-Intent Python Quick Start

- Rhino Speech-to-Intent Python API Documentation

- Cheetah Streaming Speech-to-Text Python Quick Start

- Cheetah Streaming Speech-to-Text API Documentation

- Leopard Speech-to-Text Python Quick Start

- Leopard Speech-to-Text API Documentation

- Cobra Voice Activity Detection Python Quick Start

- Cobra Voice Activity Detection API Documentation

- PvRecorder Python Quick Start

- PvRecorder API Documentation

Tutorials

- Python Wake Word Detection Guide

- Python Batch Speech-to-Text Tutorial

- Python Real-time Speech-to-Text Tutorial

- Python Voice Activity Detection

- Automatic Punctuation and Capitalization in Python Speech-to-Text

- Word Confidence in Python Speech-to-Text

Demos

- Official Python Wake Word Demo

- Official Python Speech-to-Intent Demo

- Official Python Streaming Speech-to-Text Demo

- Official Python Speech-to-Text Demo

- Official Python Voice Activity Detection Demo

- Official PvRecorder Demo

Conclusion and Key Takeaways

Speech recognition enables Python applications to move beyond traditional input methods and support natural, voice-driven interactions. In practice, however, developers often encounter trade-offs, including fragmented open-source tooling, dependence on cloud-based APIs, and runtime constraints that complicate real-time audio processing.

An on-device approach mitigates many of these challenges. With Picovoice's official Python SDKs, developers can assemble a complete local voice AI stack: wake word detection, voice activity detection, speech-to-intent, and real-time and batch transcription. This makes it possible to build voice interfaces that are responsive, privacy-preserving, and deployable across desktops, servers, and embedded devices.

Key takeaways:

- Different voice AI capabilities require different engines; for example, don't use speech-to-text for voice commands

- Python's cross-platform support enables voice AI development on desktop with seamless deployment to Raspberry Pi, servers, and embedded devices

- On-device speech recognition eliminates network latency, ensures privacy compliance, and works reliably in poor connectivity

- Custom models for wake words and voice commands can be trained in minutes without machine learning expertise

To get started, create a free Picovoice account and begin building voice-enabled Python applications.